For those working within software architecture, the term “monolithic application” or “monolith” carries significant weight. This traditional application design approach has been a staple for software development for decades. Yet, as technology has evolved, the question arises: Do monolithic applications still hold their place in the modern development landscape? It’s a heated debate that has been a talking point for many organizations and architects looking at modernizing their software offerings.

This blog will explore the intricacies of monolithic applications and provide crucial insights for software architects and engineering teams. We’ll begin by understanding the fundamentals of monolithic architectures and how they function. Following this, we’ll explore microservice architectures, contrasting them with the monolithic paradigm.

What is a monolithic application?

In software engineering, a monolithic application embodies a unified design approach where an application’s functionality operates as a single, indivisible unit. This includes the user interface (UI), the business logic driving the application’s core operations, and the data access layer responsible for communicating with the database.

Let’s highlight the key characteristics of monolithic apps:

- Self-contained: Monolithic applications are designed to function independently, often minimizing the need for extensive reliance on external systems.

- Tightly Coupled: A monolith’s internal components are intricately interconnected. Modifications in one area can potentially have cascading effects across the entire application.

- Single Codebase: The application’s entire codebase is centralized, allowing for collaborative development within a single, shared environment.

A traditional e-commerce platform is an example of a monolithic application. The product catalog, shopping cart, payment processing, and order management features would all be inseparable components of the system. A single monolithic codebase was the norm in systems built before the push towards microservice architecture.

The monolithic approach offers particular advantages in its simplicity and potential for streamlined development. However, its tightly integrated nature can pose challenges as applications become complex. We’ll delve into the advantages and disadvantages in more detail later in the blog. Next, let’s shift our focus and understand how a monolithic application functions in practice.

How does a monolithic application work?

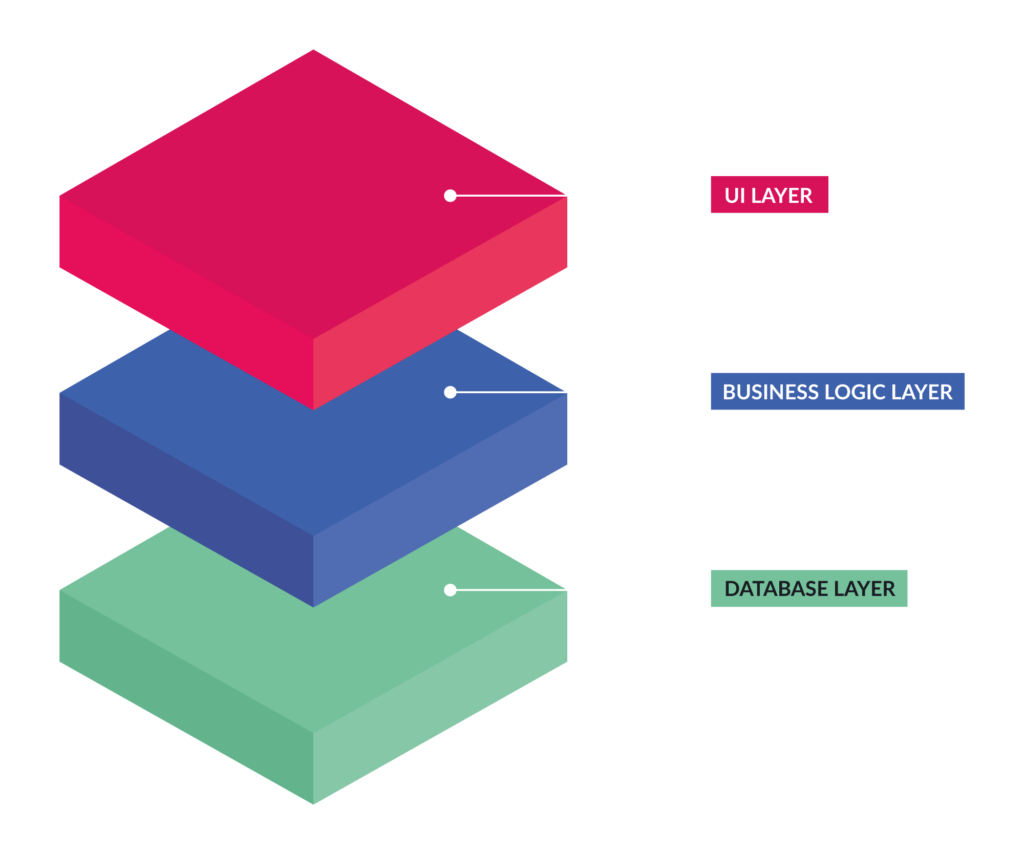

To understand the inner workings of a monolithic application, it’s best to picture it as a multi-layered structure. Depending on how the app is architected, though, the layers may not be separated as cleanly in the code as they are conceptually. Within the monolith, each layer plays a vital role in processing user requests and delivering the desired functionality. Let’s take a look at the three distinct layers in more detail.

1. User interface (UI)

The user interface is the face of the application, the visual components with which the user interacts directly. This encompasses web pages, app screens, buttons, forms, and any element that enables the user to input information or navigate the application.

When users interact with an element on the UI, such as clicking a “Submit” button or filling out a form, their request is packaged, sent, and processed by the next layer – the application’s business logic.

2. Business logic

Think of the business logic layer as the brain of the monolithic application. It contains a complex set of rules, computations, and decision-making processes that define the software’s core functionality. Within the business logic, a few critical operations occur:

- Validating User Input: Ensuring data entered by the user conforms to the application’s requirements.

- Executing Calculations: Performing required computations based on user requests or provided data.

- Implementing Branching Logic: Making decisions that alter the application’s behavior according to specific conditions or input data.

- Coordinating with the Data Layer: The business logic layer often needs to send and receive information from the data access layer to fulfill a user request.

The last of these, coordinating with the data layer, is crucial for almost all monoliths. Nothing is persisted unless the business logic goes through the application’s data access layer.
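The four operations listed above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: `OrderRequest`, `InMemoryOrderStore`, `place_order`, and the 10% discount rule are all hypothetical names and rules invented for this example, and the in-memory store simply stands in for a real data access layer.

```python
from dataclasses import dataclass


@dataclass
class OrderRequest:
    """Hypothetical payload handed over by the UI layer."""
    product_id: str
    quantity: int
    unit_price: float


class InMemoryOrderStore:
    """Stand-in for the data access layer; a real one would talk to a database."""

    def __init__(self) -> None:
        self.orders = []

    def save(self, order: dict) -> int:
        self.orders.append(order)
        return len(self.orders)  # position in the list doubles as an order id


def place_order(request: OrderRequest, store: InMemoryOrderStore) -> dict:
    # 1. Validate user input.
    if request.quantity <= 0:
        raise ValueError("quantity must be positive")
    if request.unit_price < 0:
        raise ValueError("unit_price must not be negative")

    # 2. Execute calculations.
    subtotal = request.quantity * request.unit_price

    # 3. Branching logic: an assumed rule giving 10% off subtotals of 100 or more.
    discount = 0.10 * subtotal if subtotal >= 100 else 0.0
    total = round(subtotal - discount, 2)

    # 4. Coordinate with the data layer to persist the result.
    order = {"product_id": request.product_id, "quantity": request.quantity, "total": total}
    order_id = store.save(order)
    return {"order_id": order_id, **order}
```

In a monolith, the UI handler, this function, and the store all live in the same codebase and run in the same process, so the call from one layer to the next is an ordinary function call.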

3. Data access layer

The data access layer is the gatekeeper to the application’s persistent data. It encapsulates the logic for interacting with the database or other data storage mechanisms. Responsibilities include:

- Retrieving Data: Fetching relevant information from the database as instructed by the business logic layer.

- Storing Data: Saving new information or updates to existing records within the database layer.

- Modifying Data: Executing changes to stored information as required by the application’s processes.

Much of the interaction with the data layer will include CRUD operations. This stands for Create, Read, Update, and Delete, the core operations that applications and users require when utilizing a database. Of course, in some older applications, business logic may also reside within stored procedures executed in the database. However, this is a pattern that most modern applications have moved away from.
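As a rough sketch of what CRUD looks like in a data access layer, the class below wraps a SQLite database using Python’s standard `sqlite3` module. The `ProductRepository` class, the `products` table, and its columns are assumptions made for this example; a real monolith would use whatever database and schema it already has.

```python
import sqlite3


class ProductRepository:
    """Hypothetical data access class; table and column names are assumptions."""

    def __init__(self, connection: sqlite3.Connection) -> None:
        self.conn = connection
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS products ("
            "id INTEGER PRIMARY KEY, name TEXT NOT NULL, price REAL NOT NULL)"
        )

    def create(self, name: str, price: float) -> int:
        cursor = self.conn.execute(
            "INSERT INTO products (name, price) VALUES (?, ?)", (name, price)
        )
        self.conn.commit()
        return cursor.lastrowid

    def read(self, product_id: int):
        # Returns an (id, name, price) tuple, or None when no row matches.
        return self.conn.execute(
            "SELECT id, name, price FROM products WHERE id = ?", (product_id,)
        ).fetchone()

    def update_price(self, product_id: int, price: float) -> bool:
        cursor = self.conn.execute(
            "UPDATE products SET price = ? WHERE id = ?", (price, product_id)
        )
        self.conn.commit()
        return cursor.rowcount == 1

    def delete(self, product_id: int) -> bool:
        cursor = self.conn.execute("DELETE FROM products WHERE id = ?", (product_id,))
        self.conn.commit()
        return cursor.rowcount == 1
```

The business logic layer would call methods such as `create` and `read` and never write SQL itself, which keeps the storage details in one place.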

The significance of deployment

In a monolithic architecture, the tight coupling of these layers has profound implications for deployment. Even a minor update to a single component could require rebuilding and redeploying the entire application as a single unit. This characteristic can hinder agility and increase deployment complexity, a pivotal factor to consider when evaluating monolithic designs, especially in large-scale applications. It also makes testing more involved, since a small change may call for regression testing the entire application, and it makes maintaining the application a more stressful experience.

What is a microservice architecture?

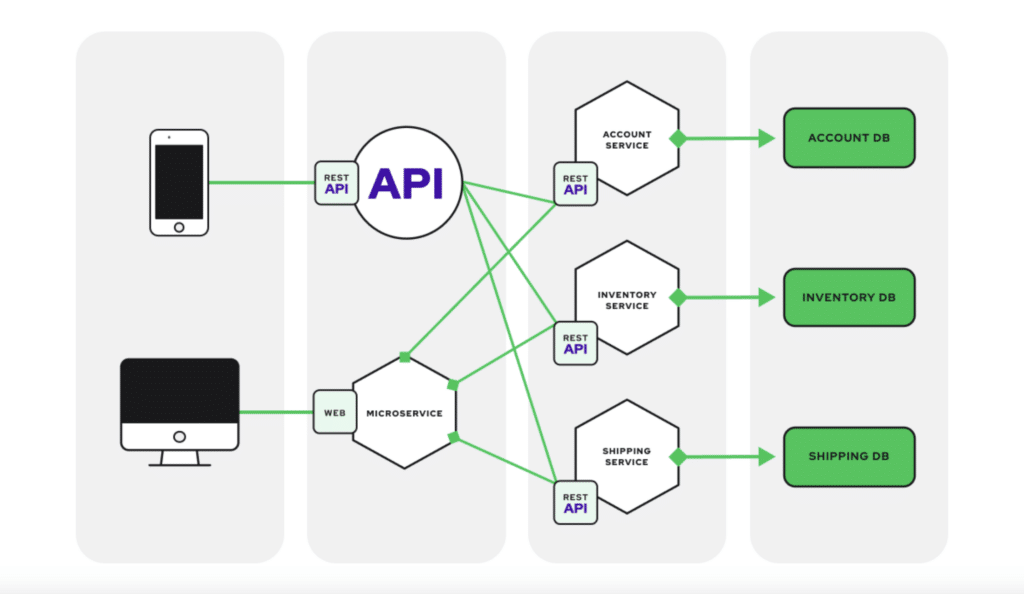

As applications have evolved and become more complex, the monolithic approach is no longer always the optimal way to build and deploy them. This is where the push for microservice architectures has come in to remedy the pain points of monoliths. The microservices architecture presents a fundamentally different way to structure software applications. Instead of building an application as a single, monolithic block, the microservices approach advocates for breaking the application down into multiple components. This results in small, independent, and highly specialized services.

Here are a few hallmarks and highlights that define a microservice:

- Focused Functionality: Each microservice is responsible for a specific, well-defined business function (like order management or inventory tracking).

- Independent Deployment: Microservices can be deployed, updated, and scaled independently.

- Loose Coupling: Microservices interact with one another through lightweight protocols and APIs, minimizing dependencies.

- Decentralized Ownership: Different teams often own and manage individual microservices, promoting autonomy and specialized expertise.

Let’s return to the e-commerce example we covered in the first section. In a microservices architecture, you would have separate services for the product catalog, shopping cart, payment processing, order management, and more. These microservices can be built and deployed separately, fostering greater agility. When a service update is ready, the code can be built, tested, and deployed much more quickly than if it were contained in a monolith.
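To make the contrast concrete, here is a minimal sketch of two of those services talking over HTTP, using only Python’s standard library. It is an illustration under stated assumptions, not a production design: the `CATALOG` data, the `/products/<sku>` route, and the function names are invented for this example, and both services run in one process purely so the sketch stays self-contained.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.request import ProxyHandler, build_opener

# Hypothetical catalog data, owned exclusively by the catalog service.
CATALOG = {"sku-1": {"name": "Keyboard", "price": 49.99}}

# Opener that ignores proxy settings so calls to 127.0.0.1 go direct.
OPENER = build_opener(ProxyHandler({}))


class CatalogHandler(BaseHTTPRequestHandler):
    """Catalog service: answers GET /products/<sku> with JSON."""

    def do_GET(self):
        sku = self.path.rsplit("/", 1)[-1]
        product = CATALOG.get(sku)
        body = json.dumps(product if product else {"error": "not found"}).encode()
        self.send_response(200 if product else 404)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the example quiet


def start_catalog_service() -> HTTPServer:
    # Port 0 asks the operating system for any free port.
    server = HTTPServer(("127.0.0.1", 0), CatalogHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def cart_total(catalog_url: str, items: dict) -> float:
    """Cart service logic: it knows the catalog only through its HTTP API."""
    total = 0.0
    for sku, quantity in items.items():
        with OPENER.open(f"{catalog_url}/products/{sku}") as response:
            product = json.load(response)
        total += product["price"] * quantity
    return round(total, 2)


if __name__ == "__main__":
    server = start_catalog_service()
    url = f"http://127.0.0.1:{server.server_port}"
    print(cart_total(url, {"sku-1": 2}))
    server.shutdown()
    server.server_close()
```

The cart logic never touches `CATALOG` directly. Because the only contract between the two is the HTTP route, the catalog service could be rebuilt, redeployed, or scaled without the cart service changing, at the cost of a network hop and an extra failure mode (an unknown SKU makes `open` raise an HTTP error here).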

Monolithic application vs. microservices

Now that we understand monolithic and microservices architectures, let’s compare them side-by-side. Understanding their differences is key for architects making strategic decisions about application design.

| Feature | Monolithic Application | Microservices Architecture |
| --- | --- | --- |
| Structure | Single, tightly coupled unit | Collection of independent, loosely coupled services |
| Scalability | Scale the entire application | Scale individual services based on demand |
| Agility | Changes to one area can affect the whole system | Smaller changes with less impact on the overall system |
| Technology | Often limited to a single technology stack | Freedom to choose the best technology for each service |
| Complexity | Less complex initially | More complex to manage with multiple services and interactions |
| Resilience | Failure in one part can bring the whole system down | Isolation of failures for greater overall resilience |
| Deployment | Entire application deployed as a unit | Independent deployment of services |

When to choose which

As with any architecture decision, specific applications lend themselves better to one approach over another. The optimal choice between monolithic and microservices depends heavily on several factors, including:

- Application Size and Complexity: Monoliths can be a suitable starting point for smaller, less complex applications. For large, complex systems, microservices may offer better scalability and manageability.

- Development Team Structure: If your organization has smaller, specialized teams, microservices can align well with team responsibilities.

- Need for Rapid Innovation: Microservices enable faster release cycles and agile iteration, which are beneficial in rapidly evolving markets.

Advantages of a monolithic architecture

While microservices have become increasingly popular, it’s crucial to recognize that monolithic architectures still hold specific advantages that make them a valid choice in particular contexts. Let’s look at a few of the main benefits below.

Development simplicity

Building a monolithic application is often faster and more straightforward, especially for smaller projects with well-defined requirements. This streamlined approach can accelerate initial development time.

Straightforward deployment

Deploying a monolithic application typically involves packaging and deploying the entire application as a single unit, making application integration easier. This process can be less complex, especially in the initial stages of a project’s life cycle.

Easy debugging and testing

With code centralized in a single codebase, tracing issues and testing functionality can be a more straightforward process compared to distributed microservices architectures. With microservices, debugging and finding the root cause of problems can be significantly more difficult than debugging a monolithic application.

Performance (in some instances)

For applications where inter-component communication needs to be extremely fast, the tightly coupled nature of a monolith can sometimes lead to slightly better performance than a microservices architecture that relies on network communication between services.

When monoliths excel

Although microservice and monolithic architectures can technically be used interchangeably, there are some scenarios where monoliths fit the bill better. In other cases, the choice between these two architectural patterns comes down to preference rather than a clear-cut advantage. Monolithic architectures are often a good fit for these scenarios:

- Smaller Projects: For applications with limited scope and complexity, the overhead of a microservices architecture might be unnecessary.

- Proofs of Concept: A monolith can offer a faster path to a working product when rapidly developing a prototype or testing core functionality.

- Teams with Limited Microservices Experience: If your team lacks in-depth experience with distributed systems, a monolithic approach can provide a gentler learning curve.

Important considerations

It’s crucial to note that as a monolithic application grows in size and complexity, the potential limitations related to scalability, agility, and technology constraints become more pronounced. Careful evaluation of your application, team, budget, and infrastructure is critical to determine if the initial benefits of a monolithic approach outweigh the challenges that might arise down the line.

Let’s now shift our focus towards the potential downsides of monolithic architecture.

Disadvantages of a monolithic architecture

While monolithic programs offer advantages in certain situations, knowing the drawbacks of using such an approach is essential. With monoliths, many disadvantages don’t pop out initially but often materialize as the application grows in scope or complexity. Let’s explore some primary disadvantages teams will encounter when adopting a monolithic pattern.

Limited scalability

The entire application must be scaled together in a monolith, even if only a specific component faces increased demand. This can lead to inefficient resource usage and potential bottlenecks. In these cases, developers and architects must either increase resources and infrastructure budget or accept performance issues in specific parts of the application.
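A quick back-of-the-envelope calculation shows where the inefficiency comes from. Every number below is hypothetical (memory per instance, capacity, and peak load are invented for illustration), and the model deliberately ignores the extra overhead that running separate services brings, so treat it as a sketch of the reasoning rather than a sizing guide.

```python
import math

# Hypothetical figures: memory per instance (GB), capacity and peak load in requests/second.
COMPONENTS = {
    "catalog": {"memory_gb": 1.0, "capacity_rps": 100, "peak_rps": 100},
    "cart": {"memory_gb": 0.5, "capacity_rps": 100, "peak_rps": 100},
    "checkout": {"memory_gb": 0.5, "capacity_rps": 100, "peak_rps": 800},
}


def instances_needed(component: dict) -> int:
    """Instances required for one component to absorb its own peak load."""
    return math.ceil(component["peak_rps"] / component["capacity_rps"])


def monolith_memory_gb(components: dict) -> float:
    # Every replica carries every component, so the busiest component sets the replica count.
    replicas = max(instances_needed(c) for c in components.values())
    return replicas * sum(c["memory_gb"] for c in components.values())


def per_service_memory_gb(components: dict) -> float:
    # Each component is replicated only as often as its own load requires.
    return sum(instances_needed(c) * c["memory_gb"] for c in components.values())
```

With these assumed figures, `monolith_memory_gb(COMPONENTS)` comes to 16.0 GB (eight full replicas of a 2 GB application), while `per_service_memory_gb(COMPONENTS)` comes to 5.5 GB, because only the checkout component is replicated eight times.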

Hindered agility

The tightly coupled components of a monolithic application make it challenging to introduce changes or implement new features. Modifications in one area can have unintended ripple effects, slowing down innovation. If a monolith is built with agility in mind, this is less of a concern. But as complexity increases, it becomes less likely that new features can be created, or older ones improved, quickly and without major refactoring and testing.
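The ripple effect is easy to reproduce in miniature. In the sketch below, all class names are hypothetical: one cart reaches into the inventory’s internal data structure, while the other uses only its public method. When the inventory later changes how it stores stock, only the tightly coupled cart breaks.

```python
class Inventory:
    def __init__(self) -> None:
        # Internal representation: sku -> units in stock.
        self._stock = {"sku-1": 5}

    def is_available(self, sku: str, quantity: int) -> bool:
        """Public method that hides how stock is stored."""
        return self._stock.get(sku, 0) >= quantity


class WarehouseInventory(Inventory):
    """A later refactoring: stock is now tracked per warehouse."""

    def __init__(self) -> None:
        self._stock = {"sku-1": {"east": 3, "west": 2}}

    def is_available(self, sku: str, quantity: int) -> bool:
        return sum(self._stock.get(sku, {}).values()) >= quantity


class TightlyCoupledCart:
    """Reads Inventory's private dictionary, so it depends on its exact shape."""

    def __init__(self, inventory: Inventory) -> None:
        self.inventory = inventory

    def can_add(self, sku: str, quantity: int) -> bool:
        return self.inventory._stock[sku] >= quantity


class DecoupledCart:
    """Depends only on Inventory's public method."""

    def __init__(self, inventory: Inventory) -> None:
        self.inventory = inventory

    def can_add(self, sku: str, quantity: int) -> bool:
        return self.inventory.is_available(sku, quantity)
```

Both carts work against `Inventory`. Against `WarehouseInventory`, `DecoupledCart` keeps working, while `TightlyCoupledCart.can_add` raises a `TypeError` because it compares a dictionary with an integer. A monolith does not force the first style, but a shared codebase makes it easy to slip into.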

Technology lock-in

Monoliths often rely on a single technology stack. Adopting new languages or frameworks can require a significant rewrite of the entire application, limiting technology choices and flexibility.

Growing complexity and technical debt

As a monolithic application expands, its codebase becomes increasingly intricate and complicated to manage. This can lead to longer development cycles and a higher risk of bugs or regressions. In the worst cases, the application begins to accrue increasing amounts of technical debt. This makes the app extraordinarily brittle and full of non-optimal fixes and feature additions.

Testing challenges

Thoroughly testing an extensive monolithic application can be a time-consuming and complex task. Changes in one area can necessitate extensive regression testing to ensure the broader system remains stable. This leads to more testing effort and extends release timelines.

Stifled teamwork

The shared codebase model can create dependencies between teams, making it harder to work in parallel and potentially hindering productivity. In the rare case where a monolithic application is owned by multiple teams, careful planning is required. Merging features takes considerable time and collaboration to ensure a successful outcome.

When monoliths become a burden

Although monoliths do make sense in quite a few scenarios, monolithic designs often run into challenges in these circumstances:

- Large-Scale Applications: As applications become increasingly complex, the lack of scalability and agility in a monolith can severely limit growth potential.

- Rapidly Changing Requirements: Markets that demand frequent updates and new features can expose the limitations of monolithic architectures in their ability to adapt quickly.

- Need for Technology Diversification: If different areas of your application would enormously benefit from various technologies, the constraints of a monolith can become a roadblock.

Transition point

It’s important to continually assess whether the initial advantages of a monolithic application still outweigh its disadvantages as a project evolves. There often comes a point where the complexity and evolving scalability requirements create a compelling case for the transition to a more modular, scalable microservices architecture. If a monolithic application would be better served with a microservices architecture, or vice versa, jumping to the most beneficial architecture early on is vital to success.

Now, let’s move on to real-world examples to give you some tangible ideas of monolithic applications.

Monolithic application examples

To understand how monolithic architectures are used, let’s examine a few application types where they are often found and the reasons behind their suitability.

Legacy applications

Many older, large-scale systems, especially those developed several decades ago, were architected as monoliths. Monolithic applications can still serve their purpose effectively in industries with long-established processes and a slower pace of technological change. These systems were frequently built primarily for stability and may have undergone less frequent updates than modern, web-based applications. The initial benefits of easier deployment and a centralized codebase likely outweighed the need for rapid scalability often demanded in today’s markets.

Content management systems (CMS)

Early versions of popular Content Management Systems (CMS) like WordPress and Drupal often embodied monolithic designs. While these platforms have evolved to offer greater modularity today, there are still instances where older implementations or smaller-scale CMS-based sites retain a monolithic structure. This might be due to more straightforward content management needs or less complex workflows, where the benefits of granular scalability and rapid feature rollout, typical of microservices, are less of a priority.

Simple e-commerce websites

Small online stores, particularly during their initial launch phase, might find a monolithic architecture sufficient. A single application can effectively manage limited product catalogs and less complicated payment processing requirements. For startups, the monolithic approach often provides a faster path to launching a functional e-commerce platform, prioritizing time-to-market over the long-term scalability needs that microservices address.

Internal business applications

Applications developed in-house for specific business functions (like project management, inventory tracking, or reporting) frequently embody monolithic designs. These tools typically serve a well-defined audience with a predictable set of features. In such cases, the overhead and complexity of a microservices architecture may be hard to justify, making a monolith a practical solution focused on core functionality.

Desktop applications

Traditional desktop applications, especially legacy software suites like older versions of Microsoft Office, were commonly built with a monolithic architecture. All components, features, and functionalities were packaged into a single installation. This approach aligned with the distribution model of desktop software, where updates were often less frequent, and user environments were more predictable compared to modern web applications.

When looking at legacy and modern applications of the monolith pattern, it’s important to remember that technology is constantly evolving. Some applications that start as monoliths may have partially transitioned into hybrid architectures. In these cases, specific components are refactored as microservices to meet changing scalability or technology needs. Context is critical: a deep assessment of the application’s size, complexity, and constraints is essential when determining whether it still aligns with monolithic principles.

How vFunction can help optimize your architecture

The choice between modernizing or optimizing legacy architectures, such as monolithic applications, presents a challenge for many organizations. As is often the case with moving monoliths into microservices, refactoring code, rethinking architecture, and migrating to new technologies can be complex and time-consuming. In other cases, keeping the existing monolithic architecture is beneficial, along with some optimizations and a more modular approach. Like many choices in software development, choosing a monolithic vs. microservice approach is not always “black and white”. This is where vFunction becomes a powerful tool, giving software developers and architects a clear picture of their existing architecture and of where possibilities exist to improve it.

Let’s break down how vFunction aids in this process:

1. Automated Analysis and Architectural Observability: vFunction begins by deeply analyzing the monolithic application’s codebase, including its structure, dependencies, and underlying business logic. This automated analysis provides essential insights and creates a comprehensive understanding of the application, which would otherwise require extensive manual effort to discover and document. Once the application’s baseline is established, vFunction kicks in with architectural observability, allowing architects to actively observe how the architecture is changing and drifting from the target state or baseline. With every new change in the code, such as the addition of a class or service, vFunction monitors and informs architects and allows them to observe the overall impacts of the changes.

2. Identifying Microservice Boundaries: One crucial step in the transition is determining how to break down the monolith into smaller, independent microservices. vFunction’s analysis aids in intelligently identifying domains, a.k.a. logical boundaries, based on functionality and dependencies within the monolith, suggesting optimal points of separation.

3. Extraction and Modularization: vFunction helps extract identified components within a monolith and package them into self-contained microservices. This process ensures that each microservice encapsulates its own data and business logic, allowing for an assisted move towards a modular architecture. Architects can use vFunction to modularize a domain and leverage the Code Copy to accelerate microservices creation by automating code extraction. The result is a more manageable application that is moving towards your target-state architecture.
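To give a feel for what identifying boundaries from dependencies can mean, here is a deliberately simple sketch. It is not vFunction’s algorithm, which this article does not describe. It is a generic technique (connected components over a dependency graph after weak links are dropped), and the class names, call counts, and threshold are all hypothetical.

```python
from collections import defaultdict

# Hypothetical call counts between classes observed in a monolith.
CALLS = {
    ("CartController", "CartService"): 120,
    ("CartService", "CartRepository"): 95,
    ("OrderController", "OrderService"): 80,
    ("OrderService", "OrderRepository"): 70,
    ("OrderService", "CartService"): 3,  # weak cross-domain link
}


def candidate_domains(calls: dict, min_calls: int) -> list:
    """Group classes connected by links of at least min_calls calls."""
    graph = defaultdict(set)
    for (caller, callee), count in calls.items():
        graph[caller]  # register every class, even if all of its links are weak
        graph[callee]
        if count >= min_calls:
            graph[caller].add(callee)
            graph[callee].add(caller)

    seen, domains = set(), []
    for start in sorted(graph):
        if start in seen:
            continue
        # Depth-first walk collecting everything reachable from start.
        stack, group = [start], set()
        while stack:
            node = stack.pop()
            if node in group:
                continue
            group.add(node)
            stack.extend(graph[node] - group)
        seen |= group
        domains.append(sorted(group))
    return domains
```

With a threshold of 10 calls, the weak link between `OrderService` and `CartService` is ignored and two candidate domains emerge, one for the cart classes and one for the order classes. With a threshold of 1, everything collapses into a single group, which is one way to see why tightly coupled monoliths are hard to split.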

Key advantages of using vFunction

- Engineering Velocity: vFunction dramatically speeds up the process of improving monolithic architectures and moving monoliths to microservices if that’s your desired goal. This increased engineering velocity translates into faster time-to-market and a modernized application.

- Increased Scalability: vFunction lets architects view their existing architecture and observe it as the application grows, which makes scalability much easier to manage. Seeing the landscape of the application and improving the modularity and efficiency of each component makes scaling more manageable.

- Improved Application Resiliency: vFunction’s comprehensive analysis and intelligent recommendations help increase the resiliency of your application and its architecture. By seeing how each component is built and how components interact with one another, teams can make informed decisions in favor of resilience and availability.

Conclusion

Throughout our journey into the realm of monolithic applications, we’ve come to understand their defining characteristics, historical context, and the scenarios where they remain a viable architectural choice. We’ve dissected their key advantages, such as simplified development and deployment in certain use cases, while also acknowledging their limitations in scalability, agility, and technology adaptability as applications grow in complexity.

Importantly, we’ve highlighted the contrasting microservices paradigm, showcasing the power of modularity and scalability it offers for complex modern applications. Understanding the interplay between monolithic and microservices architectures is crucial for software architects and engineering teams as they make strategic decisions regarding application design and modernization.

Interested in learning more? Request a demo today to see how vFunction architectural observability can quickly move your application to a cleaner, modular, streamlined architecture that supports your organization’s growth and goals.

Matt Tanner

Matt is a developer at heart with a passion for data, software architecture, and writing technical content. In the past, Matt worked at some of the largest finance and insurance companies in Canada before pivoting to working for fast-growing startups.