If you spend any time around engineering teams right now, you’ll hear a version of the same question:

“If an LLM can write code, can it refactor our legacy system into something modern?”

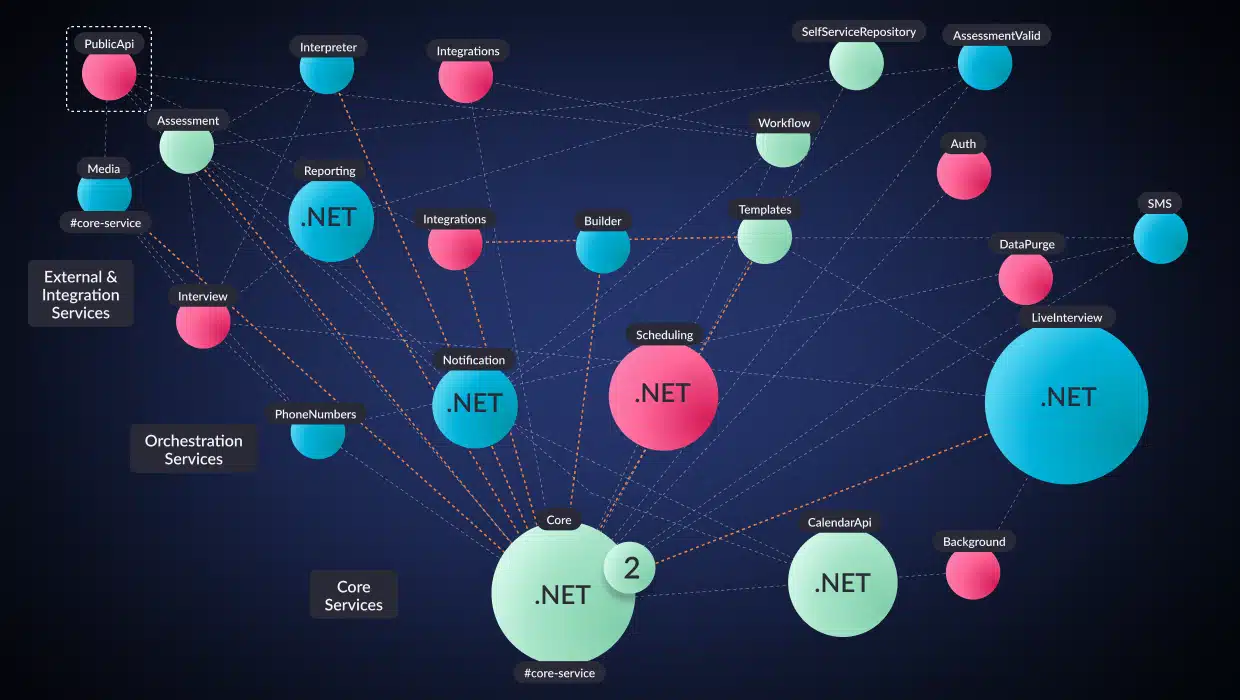

In most enterprise applications, the architecture that once defined the system has eroded over time, and complexity has increased. Service boundaries are unclear. Components are tightly coupled, making them difficult to isolate. Data models span multiple functional areas. Runtime dependencies are poorly understood. Institutional knowledge fades as teams change and systems evolve.

At the same time, these applications continue to power critical operations, leaving little tolerance for risk. Experimentation that might disrupt production is rarely acceptable.

This is where the promise of AI-driven modernization collides with brownfield reality.

Modernizing architecture is an ill-defined transformation problem that requires understanding how a system behaves, determining its logical boundaries, and defining the architecture it should evolve toward, while accounting for operational realities and incorporating human judgment where needed.

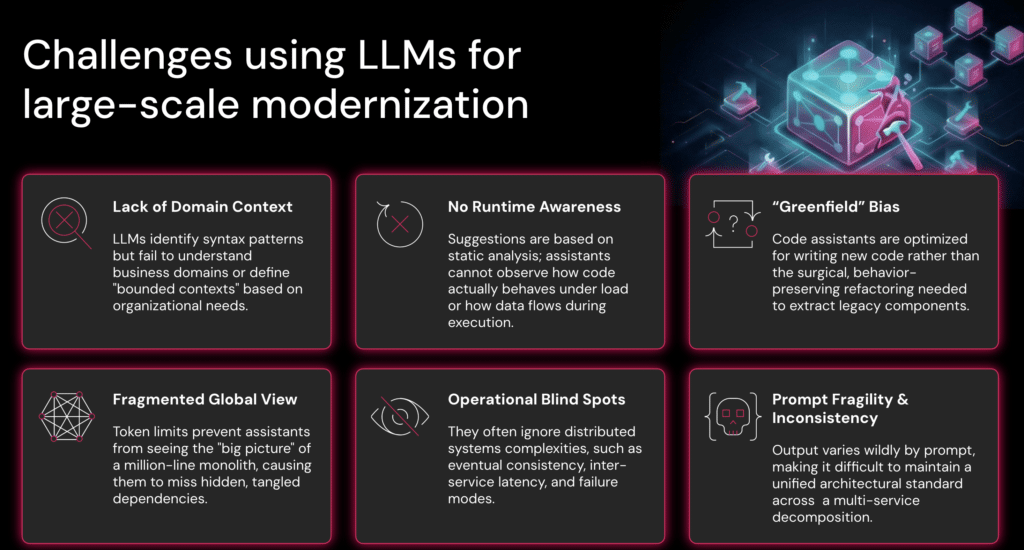

LLM failure modes in application modernization

In a series of recent analyst briefings, we summarized the practical reasons LLMs struggle with the architectural modernization of large, complex applications. They show up the moment a team tries to refactor a mission-critical system where correctness, predictability, and operational behavior matter more than clean, organized output.

Here are the core failure modes—and what they imply for modernization.

1) LLMs don’t have domain context

LLMs can identify syntax and patterns, but they don’t understand the business domains inside the application, nor can they infer “bounded contexts” the way an organization needs them defined.

That last part is the key point engineers tend to learn the hard way: modernization isn’t just code motion. It’s organizational design made executable. Two companies can take the same monolith and decompose it differently because their teams, ownership boundaries, risk tolerance, and customer requirements differ.

An LLM can suggest refactors. It cannot decide what “the right services” are for your business.

2) No runtime awareness. Static suggestions aren’t enough for modernization.

Most code assistants operate on what they can “see” in code. But modernization requires understanding how the system behaves at runtime, how real flows traverse it, and where coupling occurs in production.

Runtime analysis filters out irrelevant static dependencies and reveals which flows actually execute. Static analysis captures all possible dependencies and call paths in the codebase.

Understanding and modernizing a brownfield system requires both.
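To make the distinction concrete, here is a minimal sketch of how the two views can be combined. The component names, edge sets, and call counts are hypothetical sample data, not output from any real tool; the point is only that intersecting the static graph with observed runtime calls separates dependencies that actually execute from those that merely exist in code.

```python
# Hypothetical static dependency edges: (caller, callee) pairs found in code.
static_edges = {
    ("OrderService", "InventoryDao"),
    ("OrderService", "LegacyReportHelper"),
    ("BillingService", "InventoryDao"),
    ("BillingService", "OrderService"),
}

# Hypothetical call counts observed at runtime for the same components.
runtime_calls = {
    ("OrderService", "InventoryDao"): 12840,
    ("BillingService", "OrderService"): 311,
}


def classify_edges(static_edges, runtime_calls):
    """Split static edges into those seen at runtime and those never exercised."""
    observed = set(runtime_calls)
    return {
        # Present in code and actually executed: real coupling to plan around.
        "active": sorted(static_edges & observed),
        # Present in code but never observed: possible dead paths or rare flows.
        "static_only": sorted(static_edges - observed),
    }


report = classify_edges(static_edges, runtime_calls)
for kind, edges in report.items():
    for caller, callee in edges:
        print(f"{kind}: {caller} -> {callee}")
```

Edges in the `static_only` group are not automatically safe to drop. They may belong to rarely executed flows, which is why the observation window and human review still matter.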

3) Code assistants are biased toward greenfield work

LLMs are optimized for writing new code. Large-scale modernization is the opposite: surgical, behavior-preserving refactoring across tangled dependencies, obscure edge cases, and long-lived invariants.

In greenfield development, if the assistant gets something slightly wrong, you fix it and move on. In a brownfield system that runs a business, “slightly wrong” can mean:

- Subtle data inconsistency

- Broken reconciliation paths

- Security regressions

- Incidents that only appear at scale

Modernization needs reliability and structure, not just velocity.

4) Token limits create a fragmented “global view”

Even if an assistant is strong at local refactoring, it still can’t hold a large system in working memory. Token limits prevent it from seeing the “big picture” of a million-line monolith, let alone a megalith, so it misses hidden dependencies and coupling.

This is not a small limitation—it’s structural.

Large systems behave like ecosystems: a change in one region can alter stability elsewhere. When you can’t maintain a coherent global model, refactoring becomes a series of local optimizations that often conflict at the system level.
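A rough back-of-the-envelope calculation shows the scale of the gap. The figures below are illustrative assumptions only (about 10 tokens per line of code and a 200,000-token context window); real values depend on the language, the tokenizer, and the model.

```python
import math


def context_windows_needed(lines_of_code, tokens_per_line, context_window_tokens):
    """Estimate total tokens and how many full context windows the code would fill."""
    total_tokens = lines_of_code * tokens_per_line
    return total_tokens, math.ceil(total_tokens / context_window_tokens)


# Assumed inputs: a million-line monolith, ~10 tokens per line, 200k-token window.
total, windows = context_windows_needed(1_000_000, 10, 200_000)
print(f"{total:,} tokens, roughly {windows} separate context windows")
```

Under these assumptions the codebase is about 50 times larger than a single window, before counting prompts, instructions, or generated output. Each window sees only a fragment, and nothing in the model ties those fragments into one coherent picture.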


5) Distributed systems introduce operational blind spots

Even when modernization targets microservices, assistants frequently underweight the hard operational realities: eventual consistency, inter-service latency, failure modes, and backpressure—the need to slow upstream services when downstream systems cannot keep up.

This is where “looks correct” becomes “fails in production.”

Enterprise modernization is inseparable from operational behavior. Refactoring that ignores failure semantics is not modernization; it’s risk.
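Backpressure is the easiest of these concerns to show in a few lines. The sketch below assumes Python's `asyncio` and uses a bounded in-memory queue as a stand-in for a real broker or service boundary. The producer and consumer are hypothetical. When the queue is full, `put` suspends the upstream producer until the slower downstream consumer catches up.

```python
import asyncio


async def producer(queue, n_items, log):
    """Upstream service: emits work as fast as the queue allows."""
    for i in range(n_items):
        await queue.put(i)  # suspends while the queue is full (backpressure)
        log.append(("put", i))
    await queue.put(None)  # sentinel: no more work


async def consumer(queue, log):
    """Downstream service: slower than the producer."""
    while True:
        item = await queue.get()
        if item is None:
            break
        await asyncio.sleep(0.01)  # simulated slow processing
        log.append(("done", item))


async def main(maxsize=2, n_items=6):
    queue = asyncio.Queue(maxsize=maxsize)  # the bound is what creates backpressure
    log = []
    await asyncio.gather(producer(queue, n_items, log), consumer(queue, log))
    return log


def peak_in_flight(log):
    """Largest number of items accepted upstream but not yet finished downstream."""
    in_flight = peak = 0
    for kind, _ in log:
        in_flight += 1 if kind == "put" else -1
        peak = max(peak, in_flight)
    return peak


log = asyncio.run(main())
print("peak items in flight:", peak_in_flight(log))
```

With an unbounded queue the producer would enqueue everything immediately and the backlog would grow with the load. An assistant that extracts a service without asking where this bound lives has produced code that looks correct and behaves badly under pressure.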

6) Prompts are fragile, and inconsistency is expensive

In large programs, output variability becomes a management problem. If results vary widely by prompt, it becomes difficult to maintain a unified architectural standard across decomposition workstreams.

This stems from a deeper mismatch. Enterprise systems are expected to be deterministic: their behavior must be predictable, repeatable, and governed by clear architectural constraints. Large language models, by contrast, are probabilistic systems. They generate likely outputs based on patterns learned from data, so the same task can produce different results depending on the prompt wording, context, or model behavior.

Teams discover this quickly when they try to scale AI-assisted refactoring:

- Different developers get different answers for the same task

- Small wording changes alter code structure dramatically

- “Best practices” drift across services

- Reviewers spend more time normalizing output than modernizing

In small experiments, this variability may be tolerable. But at enterprise scale—where dozens of teams may be refactoring thousands of components—it becomes a serious problem.

Enterprise software depends on consistent architectural decisions. When AI outputs vary across workstreams, that variability compounds. And compounding inconsistency becomes architectural debt.

So what does work? Architectural context + deterministic guidance

The conclusion we’ve come to is pragmatic:

LLMs are valuable execution engines, but they need architectural context and constraints to modernize predictably.

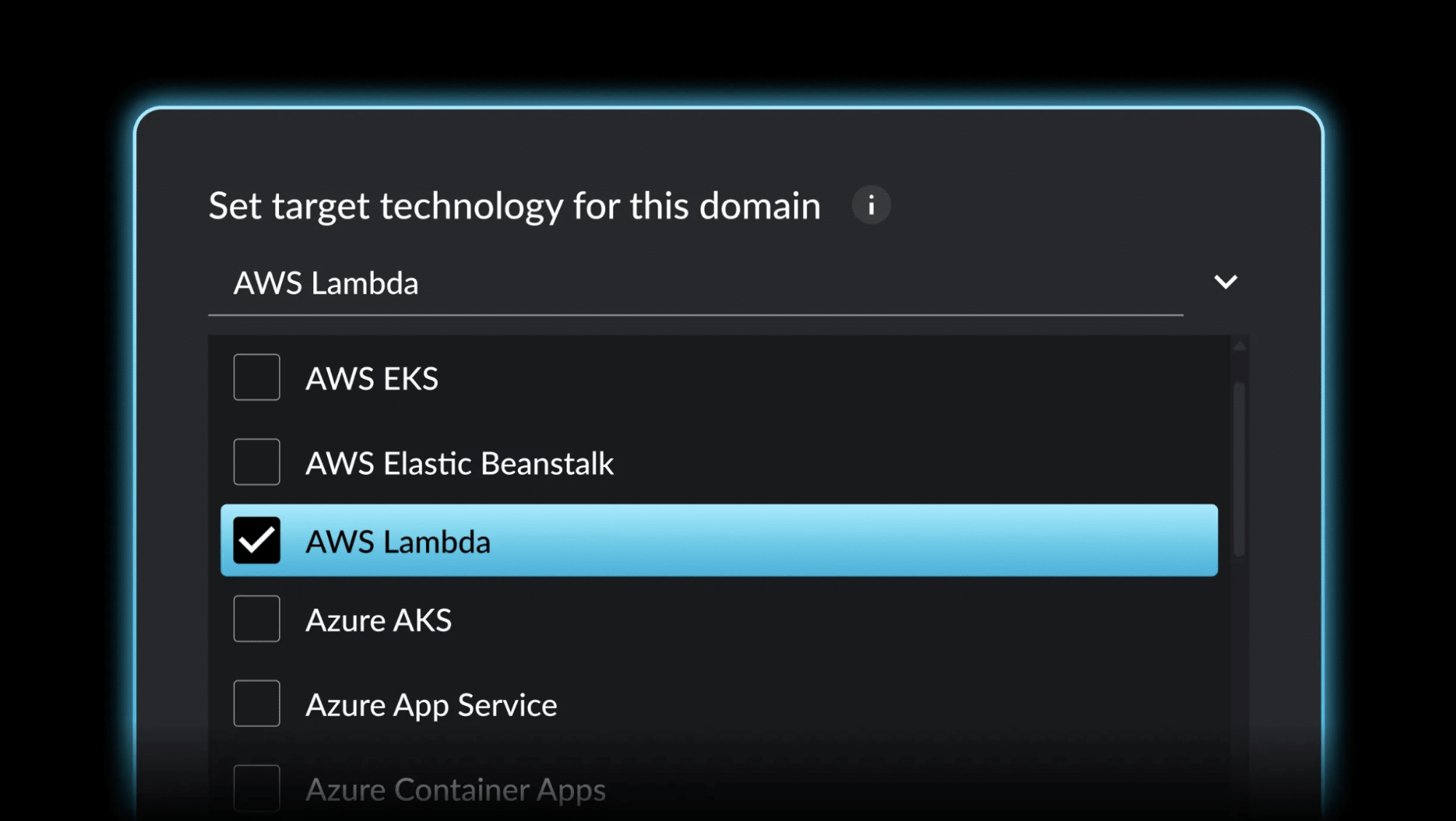

In our briefings, the model we laid out is a data-driven approach to generating that context: combining runtime data, binary/static analysis, data science/ML, interactive target definition (human-in-the-loop), and a feedback loop.

Why those ingredients?

- Runtime data + binary/static analysis provide system truth, not assumptions.

- Data science / ML helps infer logical domains and coupling patterns humans can’t manually map at scale.

- Interactive target definition ensures the end state reflects business and org reality (because no model can “guess” it correctly).

- Feedback loops verify whether changes achieved the intended outcome and keep modernization grounded in reality, not hope.
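As a deliberately simple illustration of inferring domains from coupling data, the sketch below groups classes that call each other frequently. This is not vFunction's algorithm. It is a stand-in (a call-count threshold plus connected components via union-find), and the class names, counts, and threshold are hypothetical.

```python
from collections import defaultdict


def infer_domains(call_counts, min_calls):
    """Group classes connected by edges with at least `min_calls` observed calls."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for (a, b), count in call_counts.items():
        root_a, root_b = find(a), find(b)  # registers both classes
        if count >= min_calls and root_a != root_b:
            parent[root_a] = root_b  # strong coupling: merge the two groups

    groups = defaultdict(set)
    for node in list(parent):
        groups[find(node)].add(node)
    return sorted(sorted(group) for group in groups.values())


# Hypothetical runtime call counts between classes.
call_counts = {
    ("Cart", "Pricing"): 900,
    ("Pricing", "TaxRules"): 750,
    ("Invoice", "Ledger"): 640,
    ("Cart", "Ledger"): 3,  # weak link: below the threshold, does not merge groups
}

candidate_domains = infer_domains(call_counts, min_calls=100)
print(candidate_domains)
```

The output is a proposal, not a decision. The weak `Cart` to `Ledger` link is exactly the kind of edge an architect would inspect during interactive target definition before accepting the boundary.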

This is also where “deterministic vs. probabilistic” matters in practice. An LLM is probabilistic by nature. The way to use it safely for modernization is to surround it with deterministic structure: clear tasks, explicit constraints, edge cases, guardrails, and validation.

In other words: don’t ask an LLM to modernize your architecture. Ask it to execute well-specified refactoring steps that are derived from real, pre-calculated, and defined architectural context.
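One way to picture "deterministic structure" is a task definition with explicit constraints and a validation gate that every generated change must pass. The sketch below is an assumption about what such a gate could look like. `RefactoringTask`, `validate_change`, the file paths, and the module names are all hypothetical and do not reflect any specific product's format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RefactoringTask:
    """A well-specified step: what to do, where, and what must not happen."""
    task_id: str
    instruction: str
    allowed_files: frozenset           # files the change may touch
    forbidden_dependencies: frozenset  # (from_module, to_module) pairs


def validate_change(task, touched_files, new_dependencies):
    """Return a list of violations; an empty list means the change is in bounds."""
    violations = []
    for path in sorted(set(touched_files) - task.allowed_files):
        violations.append(f"out-of-scope file: {path}")
    for src, dst in sorted(set(new_dependencies) & task.forbidden_dependencies):
        violations.append(f"forbidden dependency: {src} -> {dst}")
    return violations


task = RefactoringTask(
    task_id="T-17",
    instruction="Extract PricingService behind an interface",
    allowed_files=frozenset({"pricing/service.py", "pricing/api.py"}),
    forbidden_dependencies=frozenset({("pricing", "billing")}),
)

# Hypothetical summary of what an assistant-generated change actually did.
violations = validate_change(
    task,
    touched_files=["pricing/service.py", "billing/ledger.py"],
    new_dependencies=[("pricing", "billing"), ("pricing", "catalog")],
)
for v in violations:
    print(v)
```

The check is the same regardless of which developer wrote the prompt or how the model phrased its answer, which is what makes the overall process repeatable even though the generator is not.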

The mindset shift: from “generate code” to “orchestrate refactoring”

When teams apply LLMs to brownfield modernization successfully, developers stop being prompt authors and become orchestrators:

- selecting the right transformation step

- applying it in the right sequence

- validating behavior at each stage

- measuring progress against architectural goals

This is the difference between “AI wrote some code” and “AI helped us modernize a mission-critical system without breaking it.”
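The orchestrator role described above can be sketched as a small loop: apply one step, validate, record progress, and stop at the first failure. Everything here is hypothetical, including the step names, the `cross_domain_calls` metric, and the simulated test regression. In practice each step would be a code-assistant task and validation would run the real test suite.

```python
def orchestrate(steps, state, validate):
    """Apply steps in order; keep a step's result only if validation passes."""
    completed = []
    for name, apply_step in steps:
        candidate = apply_step(dict(state))  # work on a copy, not the live state
        if not validate(candidate):
            return state, completed, name    # stop and report the failing step
        state = candidate
        completed.append(name)
    return state, completed, None


# Hypothetical transformation steps operating on a simple metrics dictionary.
def extract_pricing_interface(state):
    state["cross_domain_calls"] -= 4
    return state


def move_ledger_dao(state):
    state["cross_domain_calls"] -= 3
    state["tests_pass"] = False  # simulated behavior regression
    return state


def split_shared_table(state):
    state["cross_domain_calls"] -= 2
    return state


steps = [
    ("extract_pricing_interface", extract_pricing_interface),
    ("move_ledger_dao", move_ledger_dao),
    ("split_shared_table", split_shared_table),
]
initial = {"cross_domain_calls": 12, "tests_pass": True}

final, completed, failed = orchestrate(steps, initial, validate=lambda s: s["tests_pass"])
print("completed:", completed)
print("failed at:", failed)
print("cross-domain calls:", initial["cross_domain_calls"], "->", final["cross_domain_calls"])
```

The failing step is rejected before it touches the accepted state, and the remaining coupling metric gives the team a measurable view of progress toward the target architecture.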

At vFunction, this orchestration starts with understanding the system’s architecture. From that analysis, vFunction generates a structured modernization plan: a prioritized set of refactoring tasks designed to modularize the application and move it toward a target architecture that supports the company’s needs today while enabling the next wave of innovation, whether that’s cloud platforms or AI in the application layer.

Those tasks can then be executed with the help of modern code assistants such as Amazon Kiro, Cursor, or GitHub Copilot. Instead of asking an LLM to refactor an entire system blindly, developers guide it with precise, architecture-aware, engineered prompts and clearly defined transformation steps.

In practice, this allows AI to operate within the boundaries of the system’s architecture, making modernization far more predictable and scalable.

The result is a workflow where architectural insight and AI-assisted development reinforce each other: the system is understood first, the refactoring path is defined, and code assistants help execute the work safely and efficiently.

Modernization is about transforming complex systems without breaking the businesses that depend on them.

And that requires more than an LLM.

It requires architectural understanding first and AI second.