Architectural observability demo

Gartner® Report: Tips for CIOs to Jump-Start Stalled Application Modernization Initiatives

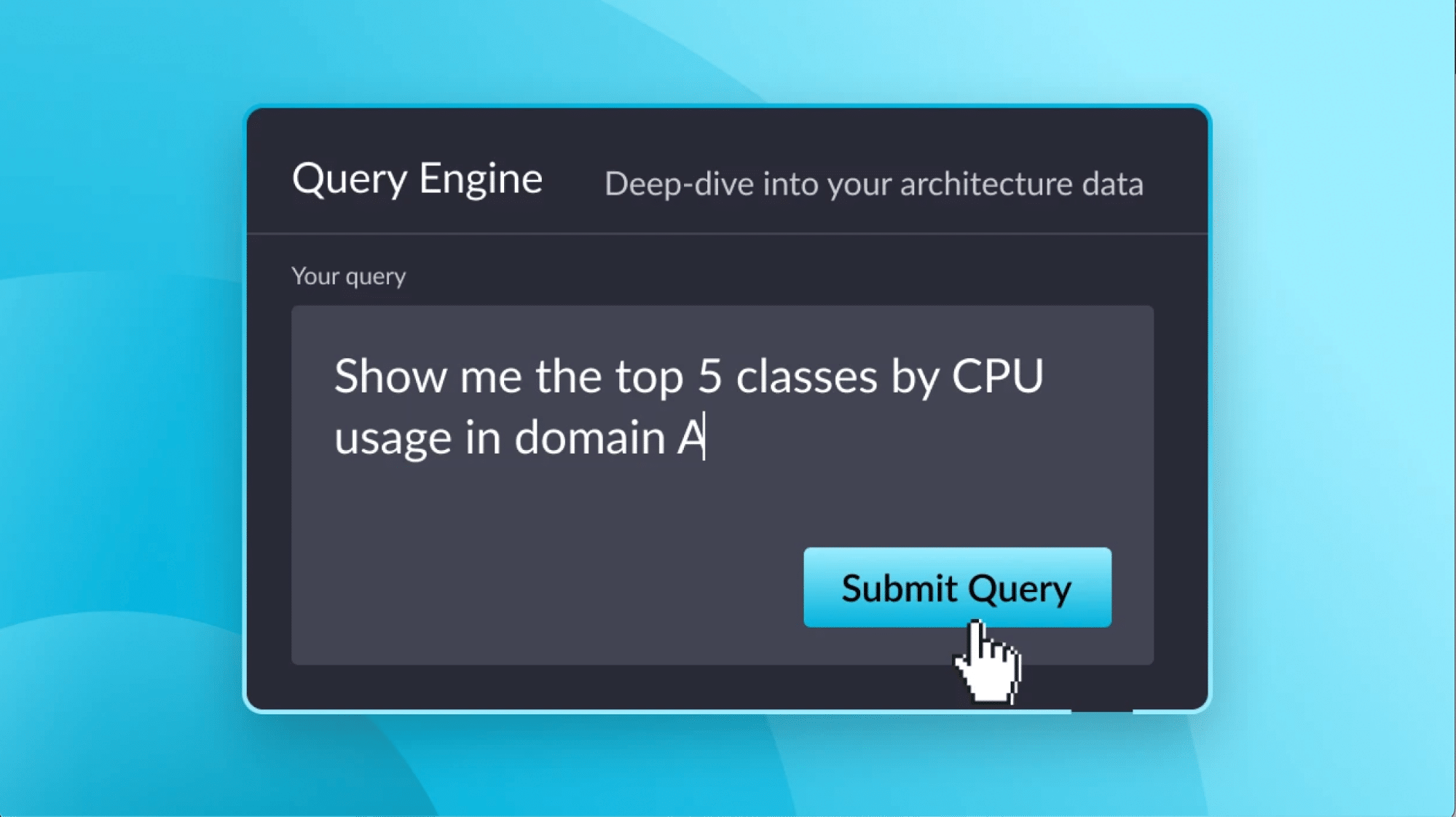

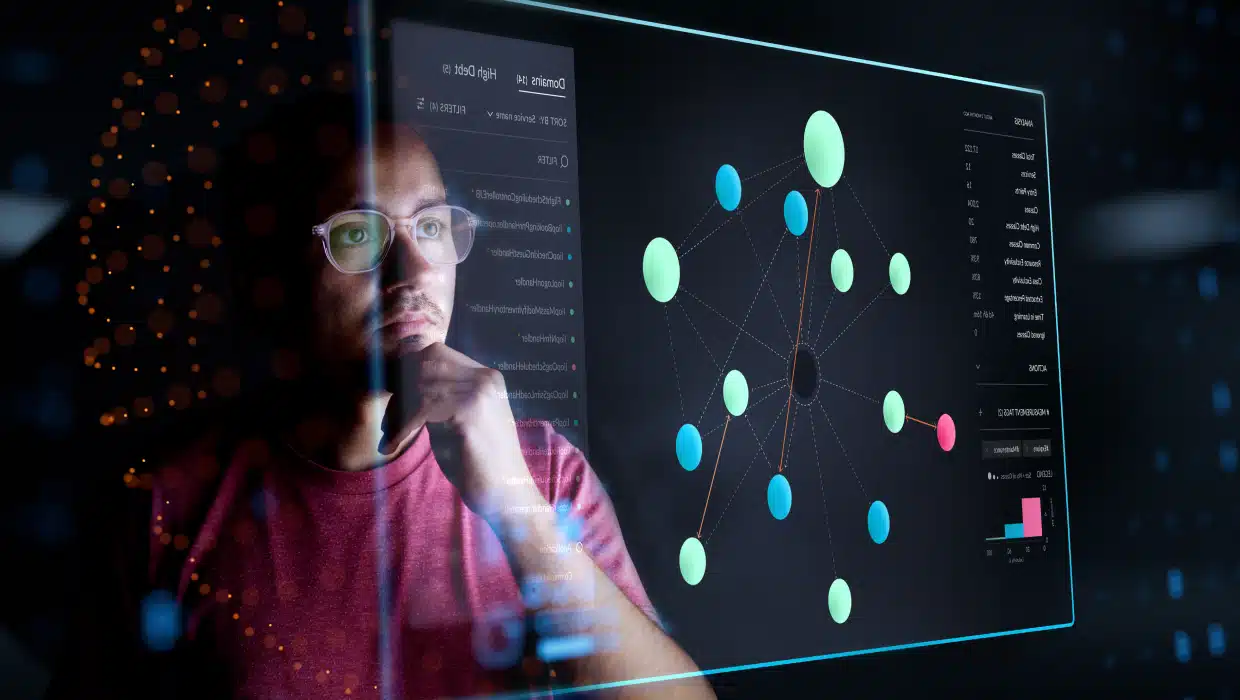

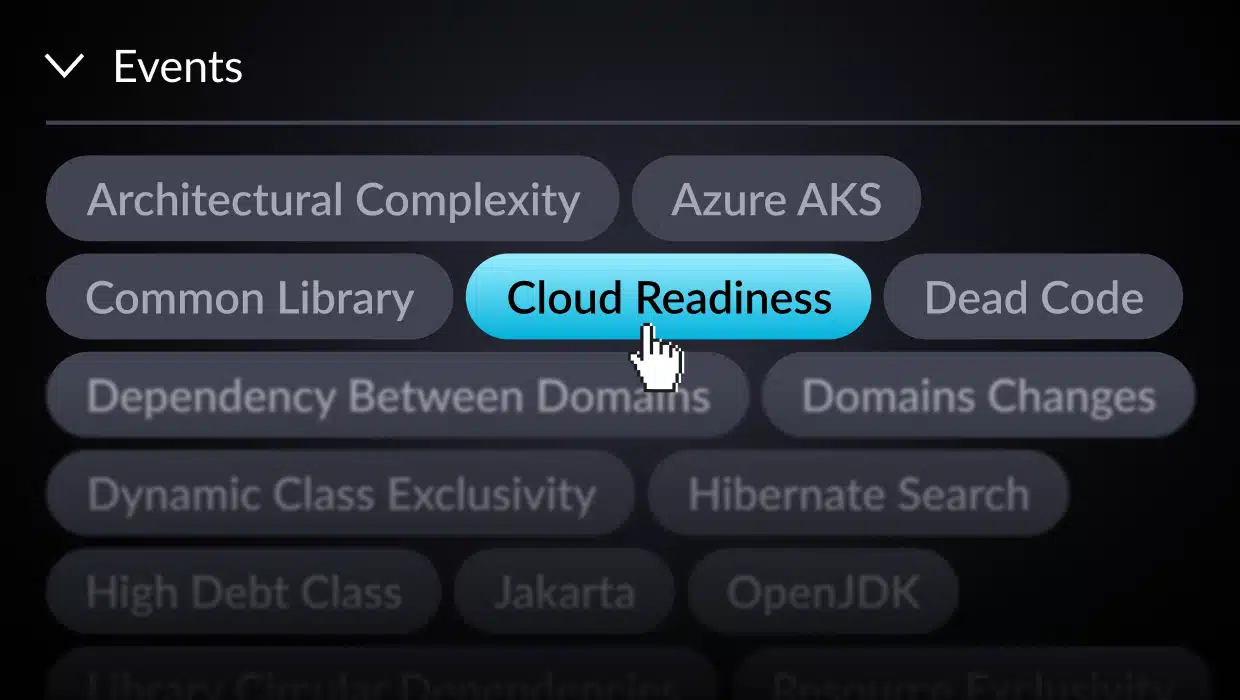

What if you could talk to your app’s architecture the way you talk to your favorite LLM? “Show me the top 5 classes by CPU usage in domain A,” “Find […]

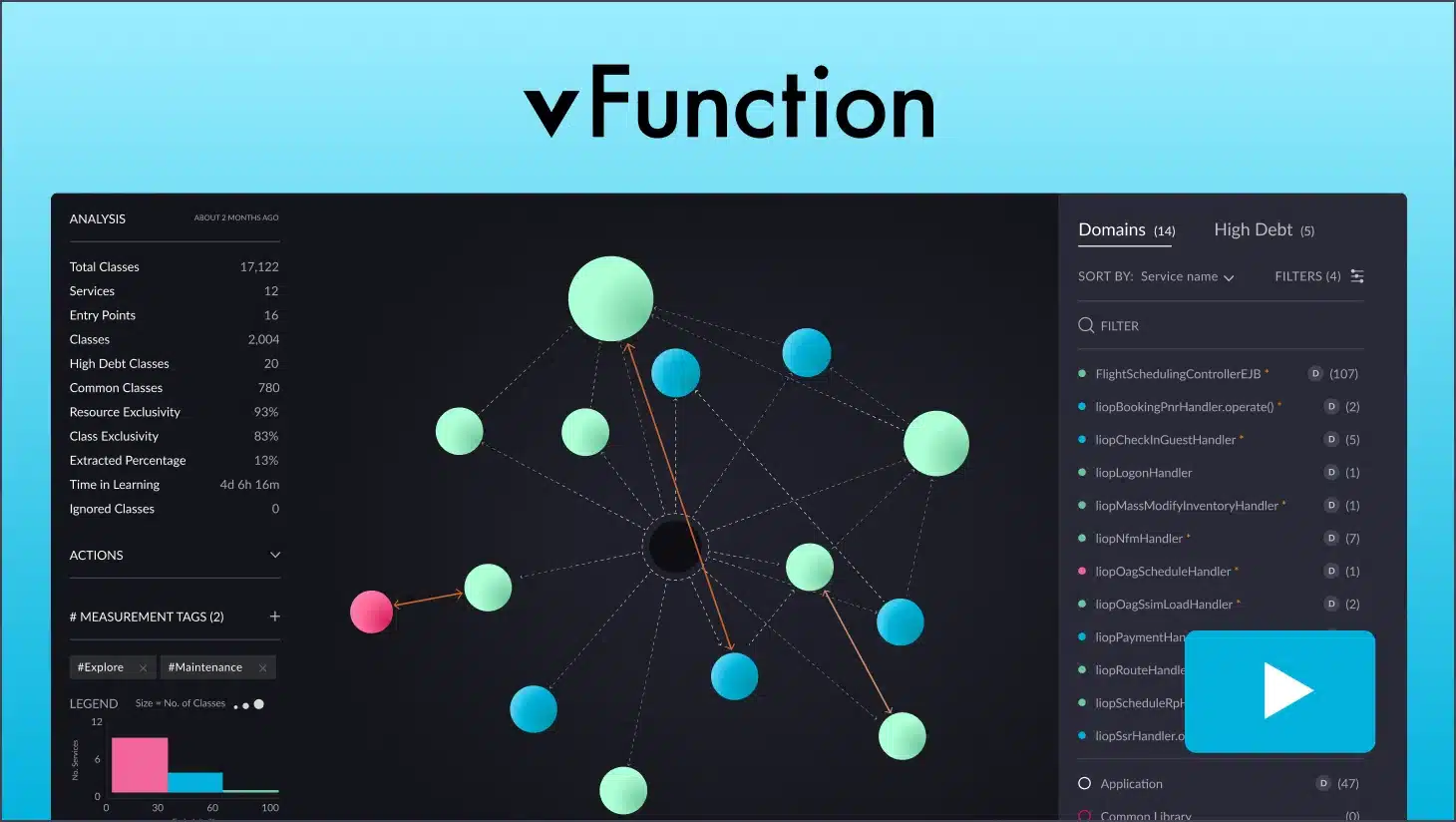

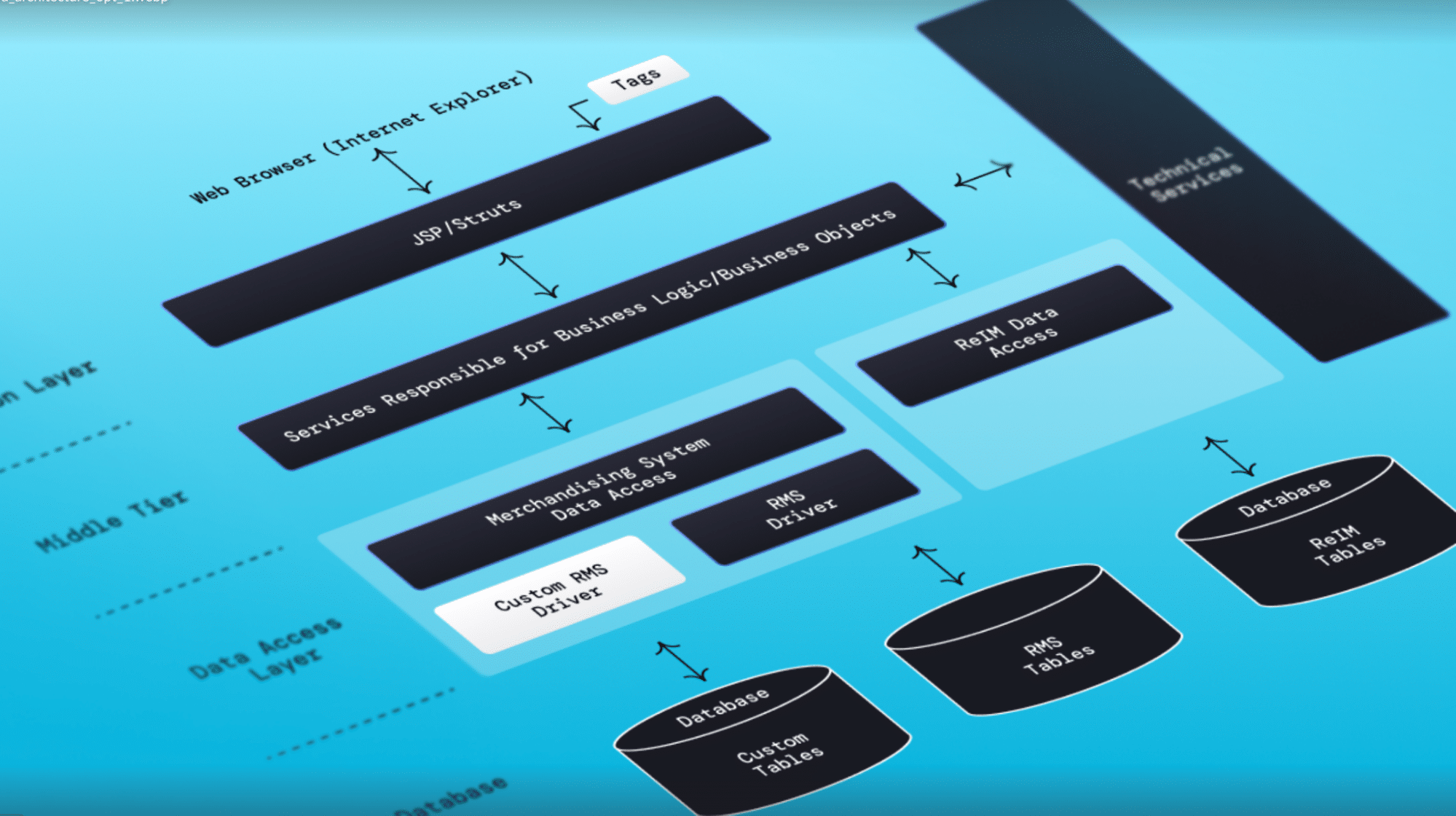

Discover how vFunction accelerates modernization and boosts application resiliency and scalability.