Remember when we would build applications and have everything working perfectly on our local machine or development server, only to have it crumble as it moved to higher environments, i.e., from dev and testing to pre-prod and production? These challenges highlighted the need for containerization software to streamline development and ensure consistency across environments.

As we pushed towards production, software development’s “good old days” were plagued with a dreaded mix of compatibility issues, missing dependencies, and unexpected hiccups. These scenarios are an architect and developer’s worst nightmare. Luckily, technology has improved significantly in the last few years, including tools that allow us to move applications from local development to production seamlessly. Part of this new age of ease and automation is thanks to containerization. This technology has helped to solve many of these headaches and streamline deployments for many modern enterprises.

Whether you’re introducing containers as part of an application modernization effort or building something net-new, this guide explains the essentials of containerization in a way that’s easy to understand. We’ll cover what it is, why it’s become so popular, and the role and advantages of containerization software. We’ll also compare containerization to the familiar concept of virtualization, address security considerations, and explain how vFunction can help you adopt containerization as part of your architecture and software development life cycle (SDLC). First, let’s dig a bit further into the fundamentals of containerization.

What is containerization?

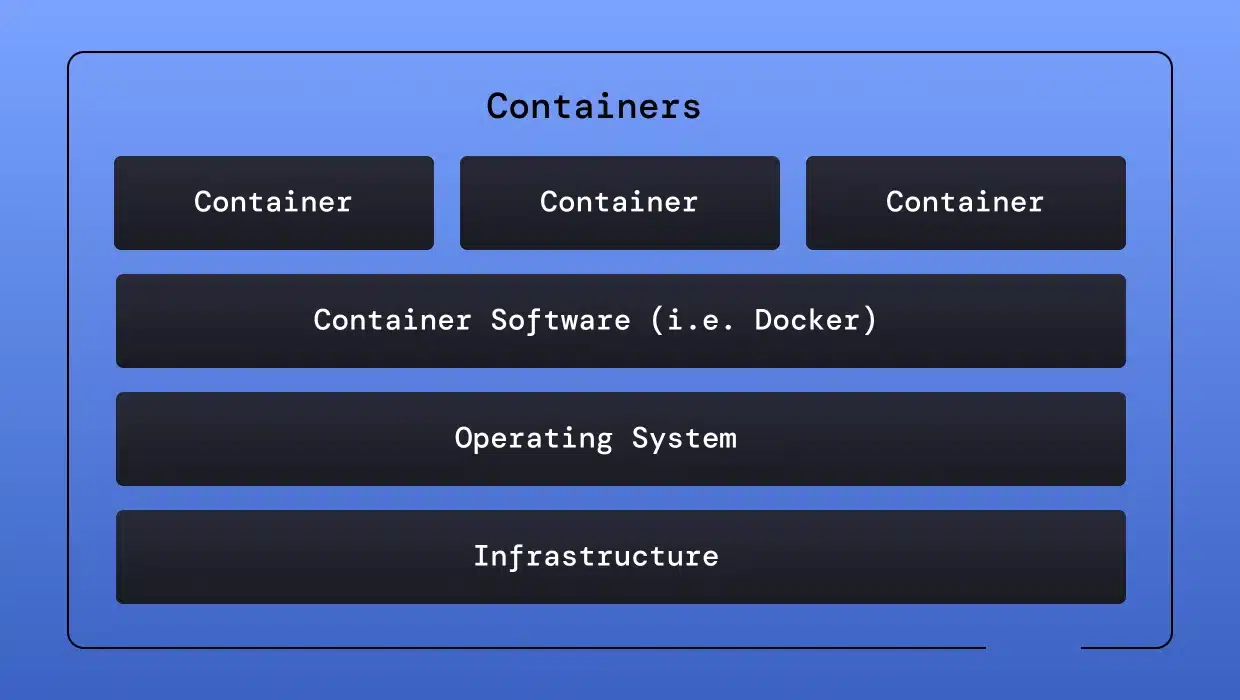

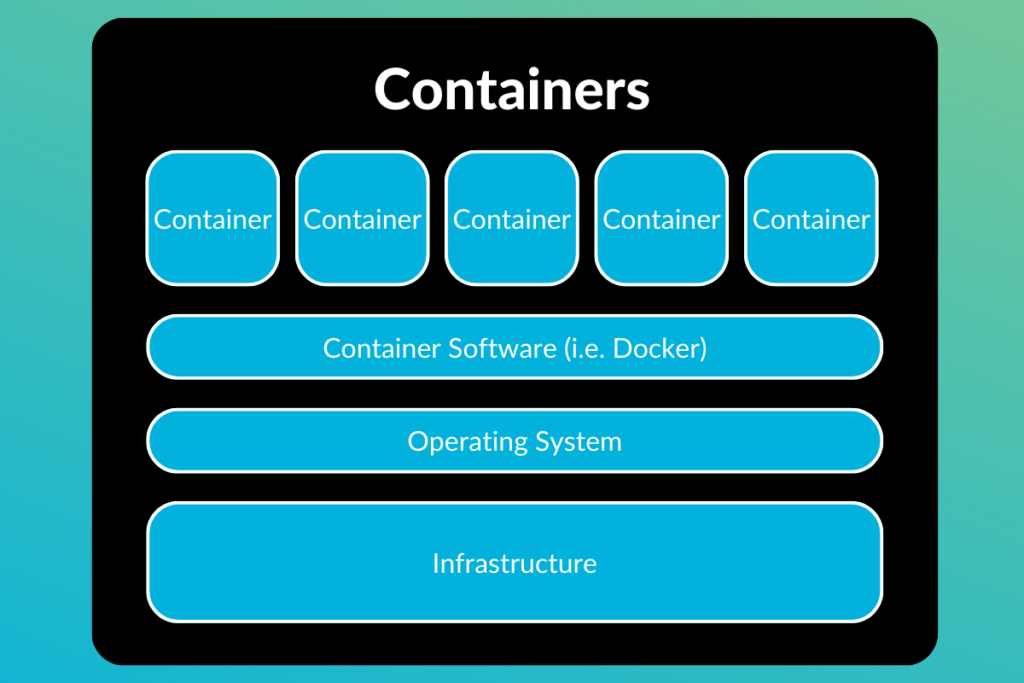

Containerization involves bundling an application and its entire runtime environment into a standalone unit called a container. But what is a software container exactly? It’s a lightweight, portable, and self-sufficient environment that includes the application’s code, libraries, configuration files, and any other dependencies it needs. Because each container is a miniature environment isolated from the host and from other containers, the application runs consistently across different computing environments.

For organizations and developers that adopt containerization, it streamlines software development and deployment, making the process faster, more reliable, and resource-efficient. Traditionally, when deploying an application, you had to spin up a server, configure it accordingly, and install the application and its dependencies in every environment you were rolling the software out to. With containerization, you package the application once and then run it wherever a compatible container runtime is available.

What is containerization software?

Containerization software provides the essential tools and platforms for building, running, and managing containers, making it an integral part of containerization development. Let’s review some of its core functions.

Container image creation: Containerization software helps you define the contents of your container image. A container image is a snapshot of your application and its dependencies packaged into a standardized format. You create these images by specifying your application’s components, the base operating system, and any necessary configurations.
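To make the idea of defining an image concrete, here is a minimal sketch that assembles the text of a Dockerfile (the build recipe format used by Docker) from Python. The helper `render_dockerfile`, the base image tag `python:3.12-slim`, and the file names `requirements.txt` and `app.py` are illustrative assumptions rather than anything a particular tool requires.

```python
import json


def render_dockerfile(base_image, workdir, dependency_file, install_cmd, start_cmd):
    """Return the text of a simple single-stage Dockerfile (hypothetical helper)."""
    lines = [
        f"FROM {base_image}",            # base operating system and runtime layer
        f"WORKDIR {workdir}",            # working directory inside the image
        f"COPY {dependency_file} ./",    # copy the dependency manifest first
        f"RUN {install_cmd}",            # install dependencies into the image
        "COPY . .",                      # add the application code
        f"CMD {json.dumps(start_cmd)}",  # default command in exec (JSON array) form
    ]
    return "\n".join(lines) + "\n"


# All values below are assumptions chosen for illustration.
dockerfile = render_dockerfile(
    base_image="python:3.12-slim",
    workdir="/app",
    dependency_file="requirements.txt",
    install_cmd="pip install --no-cache-dir -r requirements.txt",
    start_cmd=["python", "app.py"],
)
print(dockerfile)
```

Each instruction maps to one of the ingredients described above: the base operating system, the dependencies, the application’s components, and the configuration for how it starts.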

Container runtime: The container runtime engine provides the low-level machinery necessary to execute your containers. Container engines are responsible for isolating the container’s processes and resources, ensuring containers run smoothly on the host operating system.

Container orchestration: As your application grows and you use multiple containers, managing them manually becomes challenging. Container orchestration software automates complex tasks like scaling, scheduling, networking, and self-healing of your containerized applications.
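A common pattern behind this kind of automation is a reconciliation loop: compare the desired number of containers with what is actually running, then start or stop containers to close the gap. The sketch below is a toy model of that idea. The `Cluster` class and the `web-N` container names are hypothetical, and it is not how any specific orchestrator is implemented.

```python
import itertools


class Cluster:
    """Toy model of an orchestrator managing replicas of one service."""

    def __init__(self, desired_replicas):
        self.desired_replicas = desired_replicas
        self.running = set()            # names of containers currently running
        self._ids = itertools.count(1)  # source of unique container numbers

    def reconcile(self):
        """Start or stop containers until the running count matches the desired count."""
        actions = []
        while len(self.running) < self.desired_replicas:
            name = f"web-{next(self._ids)}"
            self.running.add(name)
            actions.append(f"start {name}")
        while len(self.running) > self.desired_replicas:
            name = max(self.running)    # arbitrary but deterministic choice
            self.running.remove(name)
            actions.append(f"stop {name}")
        return actions


cluster = Cluster(desired_replicas=3)
first = cluster.reconcile()        # initial rollout starts three containers

cluster.running.discard("web-2")   # simulate a crashed container
healed = cluster.reconcile()       # self-healing: one replacement is started

cluster.desired_replicas = 2       # scale down
scaled = cluster.reconcile()       # one container is stopped

print(first, healed, scaled)
```

The same loop covers the initial rollout, self-healing, and scaling, which is why declaring a desired state tends to be simpler than scripting each operation by hand.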

Container registries: Think of registries as libraries or repositories for storing and sharing your container images. They enable easy distribution of container images across different development, test, and production environments.
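Images are addressed in a registry by a reference that combines a registry host, a repository, and a tag or digest. The following sketch splits a reference into those parts using a simplified version of the conventions Docker applies (default registry `docker.io`, default namespace `library`, default tag `latest`). The function name and the host `registry.example.com` are assumptions for illustration, and real tools validate references more strictly.

```python
def parse_image_reference(ref):
    """Split an image reference into registry, repository, tag, and digest (simplified)."""
    name, _, digest = ref.partition("@")
    first, slash, rest = name.partition("/")
    # The first component is treated as a registry host only if it looks like one.
    if slash and ("." in first or ":" in first or first == "localhost"):
        registry, path = first, rest
    else:
        registry, path = "docker.io", name
    # A tag, if present, follows the last ':' in the final path segment.
    head, sep, last = path.rpartition("/")
    last_name, colon, tag = last.partition(":")
    repository = f"{head}/{last_name}" if sep else last_name
    if registry == "docker.io" and "/" not in repository:
        repository = f"library/{repository}"  # official images live under library/
    if not colon:
        tag = None if digest else "latest"
    return {
        "registry": registry,
        "repository": repository,
        "tag": tag,
        "digest": digest or None,
    }


short = parse_image_reference("nginx")
private = parse_image_reference("registry.example.com:5000/team/api:1.4.2")
print(short)
print(private)
```

Pinning an explicit tag or digest, rather than relying on the `latest` default, makes it easier to guarantee that dev, test, and production pull exactly the same image.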

The overview above should give you a high-level grasp of the components within a containerized ecosystem. With some of the terminology used, it may also be hard to discern the difference between containerization and virtualization. In the next section, let’s explore the difference between virtualization and containerization and why this distinction matters.

Virtualization vs. containerization

While virtualization and containerization both aim to improve efficiency and flexibility in managing IT resources, they function at different levels (hardware vs. operating system) and serve different purposes. Understanding the distinction is crucial in choosing the right solution for your needs. The two are often used together to build scalable systems that are easier to deploy and manage.

Virtualization operates at the hardware level. A hypervisor, also called a virtual machine monitor or virtualizer, creates virtual machines (VMs) on a physical server. Each VM encapsulates a complete operating system (OS), its applications and libraries, and a virtualized hardware stack, making VMs excellent for running multiple, diverse operating systems on a single physical machine.

Containerization, on the other hand, operates at the operating system level. Containers share the host machine’s OS kernel and only package the application, its dependencies, and a thin layer of user space. This makes them significantly more lightweight and faster to spin up than VMs. In many cases, VMs will have containerization software deployed on them, and a single virtual machine will host multiple containers. In simple terms, you can picture small, isolated environments nested inside a VM, although containers, unlike VMs, do not run their own kernel.

Key differences

The best way to see the differences is to break things down into a simple chart. Below, we will look at some of the critical features of both approaches and the differences between virtualization and containerization.

| Feature | Virtualization | Containerization |
| --- | --- | --- |
| Scope | Emulates full hardware stack | Shares host OS |
| Isolation | Strong isolation – separate operating systems | Process-level isolation within the shared operating system |
| Resource Overhead | Higher due to multiple guest OS | Lower, minimal overhead |
| Startup Speed | Slower | Near-instant |
| Use Cases | Running diverse workloads, legacy applications | Microservices, cloud-native applications, rapid scaling across multiple environments |

When to choose which

Which approach should you choose for your specific use case? There are a few factors to consider, and both can often be used. However, certain advantages come with using one over the other.

Virtualization is best when strong isolation is a priority, applications must run across multiple operating systems, or you are replatforming legacy systems. Many large enterprises still rely heavily on virtualization, which is why virtualization software from Microsoft, VMware, and IBM still attracts heavy investment.

Containerization is ideal for microservices architectures, applications built for the cloud, and scenarios where speed, efficiency, and scalability are paramount. If teams are deploying applications across multiple servers and environments, it may be easier and more reliable to go with containers, likely running inside a virtualized environment.

Overall, most organizations will use a mix of both technologies. You may run a database on virtual machines and run the corresponding APIs that interact with it across a cluster of containers. The variations are almost endless, leaving the decision of what to virtualize and what to containerize up to the best judgment of developers and architects.

Types of containerization

The world of containerization extends beyond specific brands or technologies, such as Docker containers and Kubernetes. Depending on the use case and architectures within a solution, a variety of containerization types may be an optimal choice. Let’s look at two of the main types of containerization commonly used.

OS-level containerization

At the heart of OS-level containerization software lies the concept of sharing the host operating system’s kernel. Containers isolate user space, bundling the application with its libraries, binaries, and related configuration files, enabling it to run independently without requiring full-fledged virtual machines. Linux Containers (LXC), Docker containers, and tools built on the Open Container Initiative (OCI) specifications typify this approach. Use cases for OS-level containerization include:

- Microservices architecture: Breaking down complex applications into smaller, interconnected services running in their own containers, promoting scalability and maintainability.

- Cloud-native development: Building and deploying applications designed to run within cloud environments, leveraging portability and efficient resource utilization.

- DevOps and CI/CD: Integrating containers into development workflows and pipelines to accelerate development and deployment cycles.

Application containerization

Application containerization encapsulates applications and their dependencies at the application level rather than the entire operating system. This type of containerization offers portability and compatibility within specific platforms or application ecosystems. Consider these examples:

- Windows Containers: Enable packaging and deployment of Windows-based applications within containerized environments, maintaining consistency across Windows operating systems.

- Language-Specific Containers: Technologies exist to containerize applications written in specific languages like Java (e.g., Jib) or Python, streamlining packaging and deployment within their respective runtime environments.

Choosing the correct type of containerization for your use case depends heavily on your application architecture, operating system requirements, and your organization’s security needs. Next, let’s dig deeper into how containerization software operates behind the scenes.

How does containerization software work?

Under the hood, containerization software is a delicate balance of isolation and resource management. These two pieces are crucial in making the magic of containers happen. Let’s break down the key concepts that make containerization software tick.

Container images: The foundation of containerization rests on the container image. It’s a read-only template that defines a container’s blueprint. It is a recipe containing instructions to create an environment, specify dependencies, and include the application’s code.

Namespaces: Linux namespaces are at the heart of container isolation. They divide the operating system’s resources (like the filesystem, network, and processes) and present each container with its own virtual view, creating the illusion of an independent environment for the application within the container.
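On Linux, you can see which namespaces a process belongs to by reading the symbolic links under `/proc/<pid>/ns`. The sketch below lists them for the current process. The helper name `current_namespaces` is an assumption, and on systems without `/proc` (for example macOS or Windows) it simply returns an empty dictionary.

```python
import os


def current_namespaces(pid="self"):
    """Return {namespace type: identifier} for a process, or {} if unavailable."""
    ns_dir = f"/proc/{pid}/ns"
    result = {}
    try:
        entries = sorted(os.listdir(ns_dir))
    except OSError:
        return result  # not Linux, or /proc is not mounted
    for entry in entries:
        try:
            # Each link target looks like "pid:[4026531836]".
            result[entry] = os.readlink(os.path.join(ns_dir, entry))
        except OSError:
            continue  # skip entries we are not permitted to read
    return result


namespaces = current_namespaces()
for kind, ident in namespaces.items():
    print(f"{kind:20} {ident}")
```

Two processes that show the same identifier for a given type share that namespace. Running this on the host and again inside a container would typically show different identifiers for types such as `pid`, `net`, and `mnt`.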

Control groups (cgroups): Cgroups limit and allocate resources for containers and are core to container management. They ensure that a single container doesn’t consume all available CPU, memory, or network bandwidth, preventing noisy neighbor problems and maintaining fair resource distribution.
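With cgroup v2, these limits are exposed as small text files. For example, `cpu.max` holds a quota and a period in microseconds, and `memory.max` holds a byte count, with the literal `max` meaning "no limit". The sketch below parses those two formats; the function names are assumptions, and it works on strings so it can be tried without a cgroup-enabled host.

```python
def parse_cpu_max(text):
    """Convert cgroup v2 cpu.max content into a CPU count, or None if unlimited."""
    quota, period = text.split()
    if quota == "max":
        return None
    # quota/period is the fraction of CPU time allowed per scheduling period
    return int(quota) / int(period)


def parse_memory_max(text):
    """Convert cgroup v2 memory.max content into bytes, or None if unlimited."""
    value = text.strip()
    return None if value == "max" else int(value)


half_cpu = parse_cpu_max("50000 100000")      # 50 ms of CPU time per 100 ms period
unlimited_cpu = parse_cpu_max("max 100000")   # no CPU limit configured
memory_limit = parse_memory_max("268435456\n")  # 256 MiB expressed in bytes

print(half_cpu, unlimited_cpu, memory_limit)
```

Inside a container, these files are commonly found under `/sys/fs/cgroup/`, although the exact location depends on how the host and runtime are configured.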

Container runtime: The container runtime engine, the core of containerization software, handles the low-level execution of containers. It works with the operating system to create namespaces, apply cgroups, and manage the container’s lifecycle from creation to termination.

Layered filesystem: Container images employ a layered filesystem, optimizing storage and improving efficiency. Sharing base images containing common components and storing only the differences from the base layer in each container accelerates image distribution and container startup.
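The layering idea can be modeled in a few lines with `collections.ChainMap`, which looks keys up from the topmost mapping down and sends all writes to the first mapping only. This is a simplified model in the spirit of union filesystems such as OverlayFS, not an implementation of one, and the file paths and contents are made up for illustration.

```python
from collections import ChainMap

# Read-only image layers, each storing only what it adds on top of the layer below.
base_layer = {"/bin/sh": "shell binary", "/etc/os-release": "distro metadata"}
deps_layer = {"/usr/lib/libexample.so": "shared library"}
app_layer = {"/app/main.py": "application code"}
image = (app_layer, deps_layer, base_layer)  # topmost layer first

# Each container gets its own empty writable layer on top of the shared image.
container_a = ChainMap({}, *image)
container_b = ChainMap({}, *image)

# A write lands in container A's writable layer and shadows the base file.
container_a["/etc/os-release"] = "patched in container A"

print(container_a["/etc/os-release"])  # container A sees its own copy
print(container_b["/etc/os-release"])  # container B still sees the base layer
print(container_a.maps[0])             # only the difference is stored per container
```

Because both containers reference the same three image layers, the shared content exists once, and each container stores only what it changed.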

When it all comes together, containerization software combines a clever arrangement of operating system features with a container image format and a runtime engine. It creates portable, isolated, and resource-efficient environments for applications to run within, making developers’ and DevOps’ lives easier.

Benefits of containerization

Compared to traditional methods of deploying and running software, containers offer many unique advantages. Let’s take a look at the overarching benefits of containerization.

Portability: Containers package everything an application needs for execution, enabling seamless movement between environments. This portability is one of the key advantages of containerized software, allowing applications to be transferred from development to production without compatibility issues. Write code once and deploy it across your laptop, on-premises servers, or cloud platforms with minimal or no modifications.

Consistency: Containers eliminate many of the frustrating inconsistencies that arise when you deploy an application across different environments. Because the image carries the same code and dependencies everywhere, your containerized application behaves the same way wherever a compatible kernel and runtime are available, fostering reliability and predictability.

Efficiency: Unlike virtual machines, which each run a full guest operating system, containers share the host OS kernel, significantly reducing overhead. They are lightweight, start up in seconds, and consume minimal resources.

Scalability: You can easily scale containerized applications up or down based on demand, providing flexibility to meet fluctuating workloads without complex infrastructure management.
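As one concrete example of demand-based scaling, the Kubernetes Horizontal Pod Autoscaler documents a proportional rule: desired replicas = ceil(current replicas × current metric ÷ target metric), bounded by a configured minimum and maximum. The sketch below applies that rule; the function and parameter names, and the sample numbers, are assumptions for illustration.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Proportional scaling rule, clamped to the allowed replica range."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# 4 replicas averaging 90% CPU against a 60% target: scale up.
scale_up = desired_replicas(4, current_metric=90, target_metric=60)

# 4 replicas averaging 30% CPU against a 60% target: scale down.
scale_down = desired_replicas(4, current_metric=30, target_metric=60)

# The floor prevents scaling below the configured minimum.
floored = desired_replicas(4, current_metric=30, target_metric=60, min_replicas=3)

print(scale_up, scale_down, floored)
```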

Microservices architecture: Containers are an excellent fit for building and deploying microservices-based applications in which different application components run as separate, interconnected containers, facilitating the transition from monolith to microservices.

Containerization offers benefits across the software development lifecycle, promoting faster development cycles, enhanced operational efficiency, and the flexibility to support modern, cloud-native architectures. However, one area that sometimes comes under scrutiny is handling security within containerized environments. Next, let’s look at some of the concerns and remedies for common containerization security issues.

Containerization security

As we have seen, containerization offers numerous advantages. But it would be unfair not to mention some potential security implications of adopting containers into your architecture. Let’s look at a few areas to be mindful of when adopting containerization.

Image vulnerabilities

Just like any other software, container images can harbor vulnerabilities within their software components. These vulnerabilities can stem from outdated libraries, unpatched dependencies, or even programming errors within your application code. A complete security strategy should include a process for regularly scanning container images for known vulnerabilities using vulnerability scanners explicitly designed for container environments. These scanners compare the image’s components against vulnerability databases and alert you to potential risks. Once identified, promptly applying any necessary patches or updates to the image is critical to mitigating potential vulnerabilities.
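At its core, the comparison a scanner performs can be sketched as matching installed package versions against a list of advisories. Everything in the example below is made up for illustration: the package names, the advisory IDs, and the `fixed_in` versions are hypothetical, and real scanners handle far richer version schemes and data sources.

```python
def parse_version(version):
    """Turn a dotted numeric version such as '2.4.1' into a comparable tuple."""
    return tuple(int(part) for part in version.split("."))


# Hypothetical advisory data; not taken from any real vulnerability database.
ADVISORIES = [
    {"id": "EXAMPLE-0001", "package": "examplelib", "fixed_in": "2.4.1"},
    {"id": "EXAMPLE-0002", "package": "demo-parser", "fixed_in": "1.0.9"},
]


def scan(installed, advisories):
    """Return advisories that affect the installed package versions."""
    findings = []
    for advisory in advisories:
        version = installed.get(advisory["package"])
        if version is None:
            continue  # package is not present in the image
        if parse_version(version) < parse_version(advisory["fixed_in"]):
            findings.append({
                "id": advisory["id"],
                "package": advisory["package"],
                "installed": version,
                "upgrade_to": advisory["fixed_in"],
            })
    return findings


# Hypothetical contents of an image.
installed_packages = {"examplelib": "2.3.0", "demo-parser": "1.1.0", "other": "0.1.0"}
findings = scan(installed_packages, ADVISORIES)
print(findings)
```

Here only `examplelib` is flagged, because its installed version is older than the version in which the hypothetical issue was fixed.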

Container isolation

While containers provide a degree of isolation from each other through namespaces and control groups, they all share the underlying operating system kernel. This means that a vulnerability in the kernel or a successful container breakout could have far-reaching consequences for the host system and other containers running on it. A container breakout occurs when an attacker exploits a vulnerability in the container runtime or the host system to escape the confines of the container, leading to unauthorized access to the host machine’s resources or other containers. Keeping the host operating system and container runtime up to date with the latest security patches is crucial to minimizing the risk from kernel vulnerabilities. Additionally, security features like SELinux or AppArmor can provide extra isolation layers to harden your container environment further.

Expanded attack surface

Containerized applications, particularly those built using a microservices architecture, often involve complex interactions and network communication patterns. Each microservice may communicate with several other services, and these communication channels can introduce new attack vectors. For instance, an attacker might exploit a vulnerability in one microservice to gain a foothold in the system and then pivot to other services to escalate privileges or steal sensitive data. It’s essential to carefully map out the communication channels between your microservices and implement security measures like access controls and network segmentation to limit the impact of a potential attack.
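One way to reason about this is to write the permitted service-to-service calls down as an allow-list and then ask how far an attacker could move from any one compromised service. The sketch below does that with a breadth-first search; the service names and the allow-list are hypothetical.

```python
from collections import deque

# Hypothetical allow-list: each service maps to the services it may call.
ALLOWED_CALLS = {
    "frontend": {"orders", "catalog"},
    "orders": {"payments", "inventory"},
    "catalog": set(),
    "payments": set(),
    "inventory": set(),
}


def is_allowed(source, target, allowed_calls=ALLOWED_CALLS):
    """Return True if the allow-list permits source to call target directly."""
    return target in allowed_calls.get(source, set())


def reachable_from(start, allowed_calls=ALLOWED_CALLS):
    """Return every service reachable from start by following allowed calls."""
    seen = set()
    queue = deque([start])
    while queue:
        service = queue.popleft()
        for target in allowed_calls.get(service, ()):
            if target not in seen and target != start:
                seen.add(target)
                queue.append(target)
    return seen


print(is_allowed("frontend", "payments"))  # direct call is not permitted
print(sorted(reachable_from("frontend")))  # but payments is reachable via orders
print(sorted(reachable_from("catalog")))   # a compromised catalog can reach nothing
```

The gap between what a service may call directly and what it can reach transitively is a rough measure of how far a single compromise could spread.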

Runtime security

The security of the container runtime itself is paramount. Misconfigurations or vulnerabilities within the container engine could give attackers a foothold to gain unauthorized access to containers or the host system. Regular security audits and updates of the container runtime are essential. Additionally, following recommended security practices for configuring the container runtime and container engine can help mitigate risks.

Security best practices

Applying the lessons above alongside general application security practice produces a long list. Here are a few of the best practices that developers should aim to apply when using containerization for their applications:

- Minimize image size: Smaller container images have a reduced attack surface. Include only the essential libraries and dependencies required by your application.

- Vulnerability scanning: Implement regular scanning of container images at build time and within container registries to detect and address known vulnerabilities.

- Least privilege: Following the Principle of Least Privilege (PoLP), run containers with the minimum necessary privileges to reduce the impact of a potential compromise.

- Security monitoring: Monitor containerized software for unusual behavior and potential security incidents. Use additional software to implement intrusion detection and response mechanisms.

- Container orchestration security: Pay close attention to security configurations within your container orchestration tools. Stick with the defaults unless you know exactly what consequences a non-default configuration may have, and review those defaults against your platform’s security guidance.
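As a small illustration of the least-privilege item, the sketch below assembles a `docker run` command line that uses several of Docker’s hardening flags. It only builds and prints the command and does not execute anything. The helper name, the image reference, and the default values for user, memory, and CPU are assumptions for illustration.

```python
import shlex


def hardened_run_args(image, user="1000:1000", memory="256m", cpus="0.5"):
    """Build a docker run command that applies common least-privilege flags."""
    return [
        "docker", "run", "--rm",
        "--read-only",                           # mount the root filesystem read-only
        "--cap-drop", "ALL",                     # drop all Linux capabilities
        "--security-opt", "no-new-privileges",   # block privilege escalation via setuid
        "--user", user,                          # run as a non-root UID:GID
        "--memory", memory,                      # cap memory usage
        "--cpus", cpus,                          # cap CPU usage
        image,
    ]


# Hypothetical image reference.
args = hardened_run_args("registry.example.com/team/api:1.4.2")
print(shlex.join(args))
```

An application may legitimately need some of these restrictions relaxed, for example a writable temporary directory or a single capability, so treat this as a starting point to tighten or loosen deliberately.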

Containerization security is a shared responsibility that should be considered by developers, DevOps, architects, and everyone else involved within the SDLC. It requires proactive measures, ongoing vigilance, and specialized security tools designed for containerized environments. Early attention to container security, well before apps have the chance to make it to production environments, is also critical.

How vFunction can help with containerization

It’s easy to see why containerization is such a powerful driver for application modernization. Successful adoption of containerization hinges on understanding your existing application landscape and intelligently mapping out a strategic path toward a container-based architecture.

This is where vFunction and architectural decisions around containerization go hand-in-hand. Here are a few ways that vFunction can help:

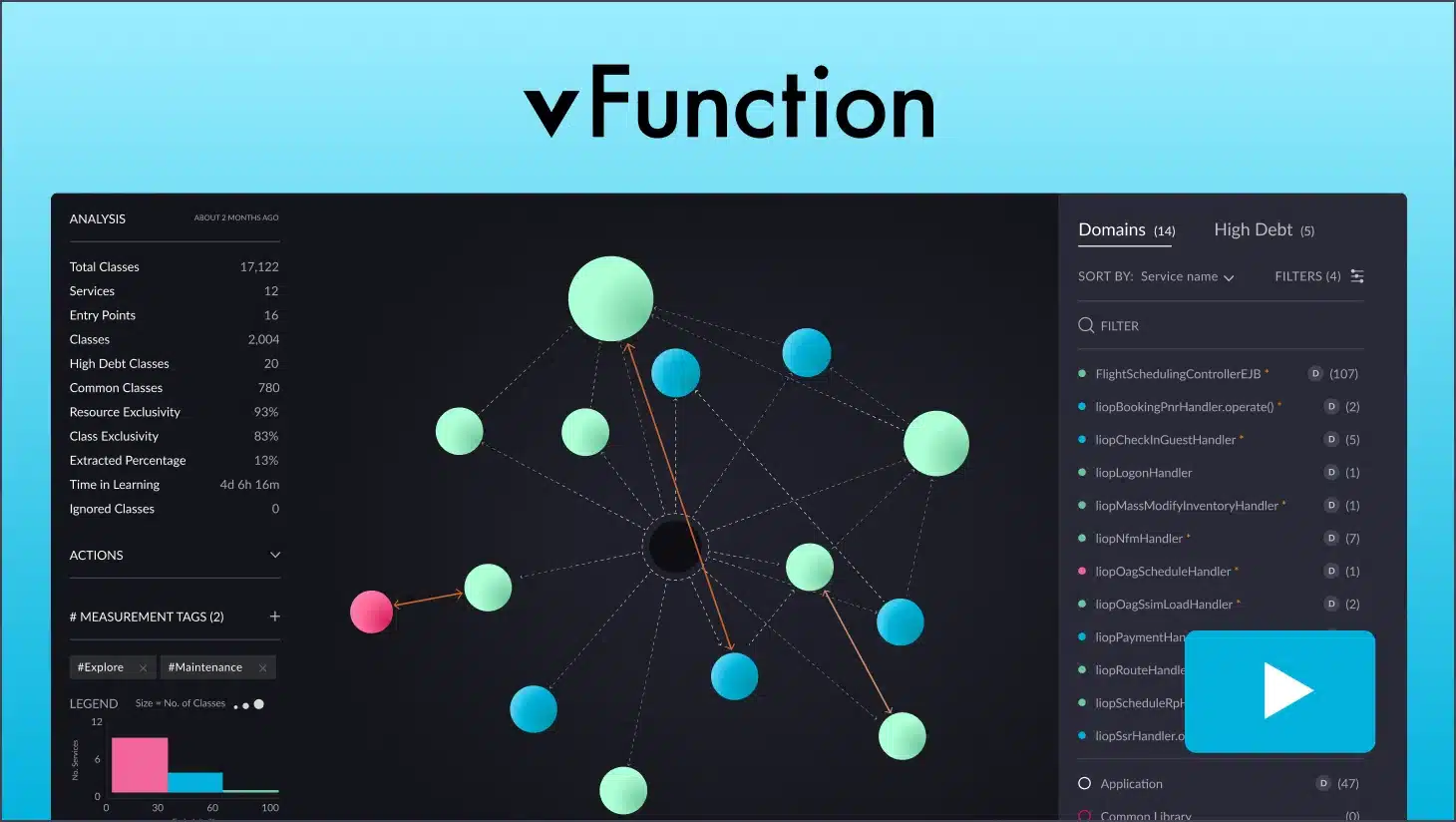

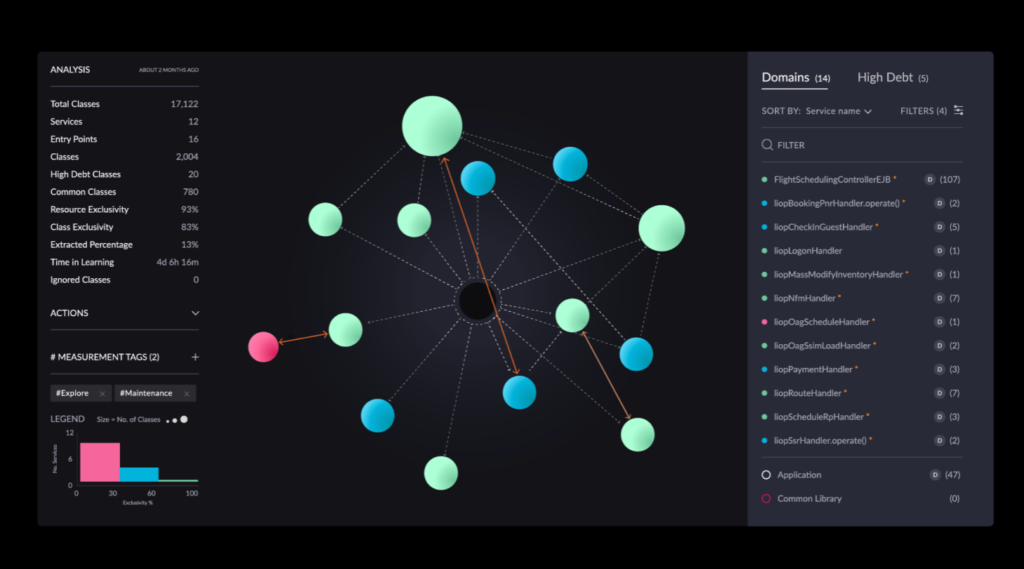

Architectural clarity for containerization: vFunction’s automated analysis of your application codebase offers a blueprint of its structure, dependencies, and internal logic, providing insights into technical debt management. This deep architectural understanding informs the best approach to containerization. Which components of your application are ideal candidates for becoming standalone containers within a microservices architecture? vFunction’s analysis gives architects the information they need to make this decision.

Mapping microservice boundaries: If your modernization strategy involves breaking down a monolithic application into microservices, vFunction assists by identifying logical domains within your code based on business functionality and interdependencies. It reveals natural points where the application can be strategically divided, setting the stage for containerizing these components as independent services.

Optimizing the path to containers: vFunction can help you extract individual components or domains from your application and modularize them. When combined with vFunction’s architectural observability insights, it helps you manage ‘architectural drift’ as you iteratively build out your containerized architecture. It also ensures that any subsequent code changes align optimally with your desired target state.

By seamlessly integrating architectural insights and automation, vFunction becomes a valuable tool in deciding and implementing a containerization strategy, helping you realize up to 5X faster modernization and ensuring your modernization efforts hit the target efficiently and precisely.

Conclusion

Containerization has undeniably revolutionized how we build, deploy, and manage applications. Its ability to deliver portability, efficiency, and scalability makes it an indispensable tool for many modern enterprises. Organizations can embrace this transformation by understanding the core principles of containerization, available technologies, and the benefits of moving to container-based deployments. Containerization should be a key consideration for any new implementations and modernization projects being kicked off.

Ready to start your application modernization journey? vFunction is here to guide you every step of the way. Our platform, expertise, and commitment to results will help you transition into a modern, agile technology landscape. Contact us today to schedule a consultation and discover how we can help you achieve successful application modernization with architectural observability.