Legacy system architectures are quite the challenge when held up to today’s capabilities, and working with them can be frustrating. Software architects head the list of those frustrated because of the numerous struggles they experience with applications designed a decade ago or more.

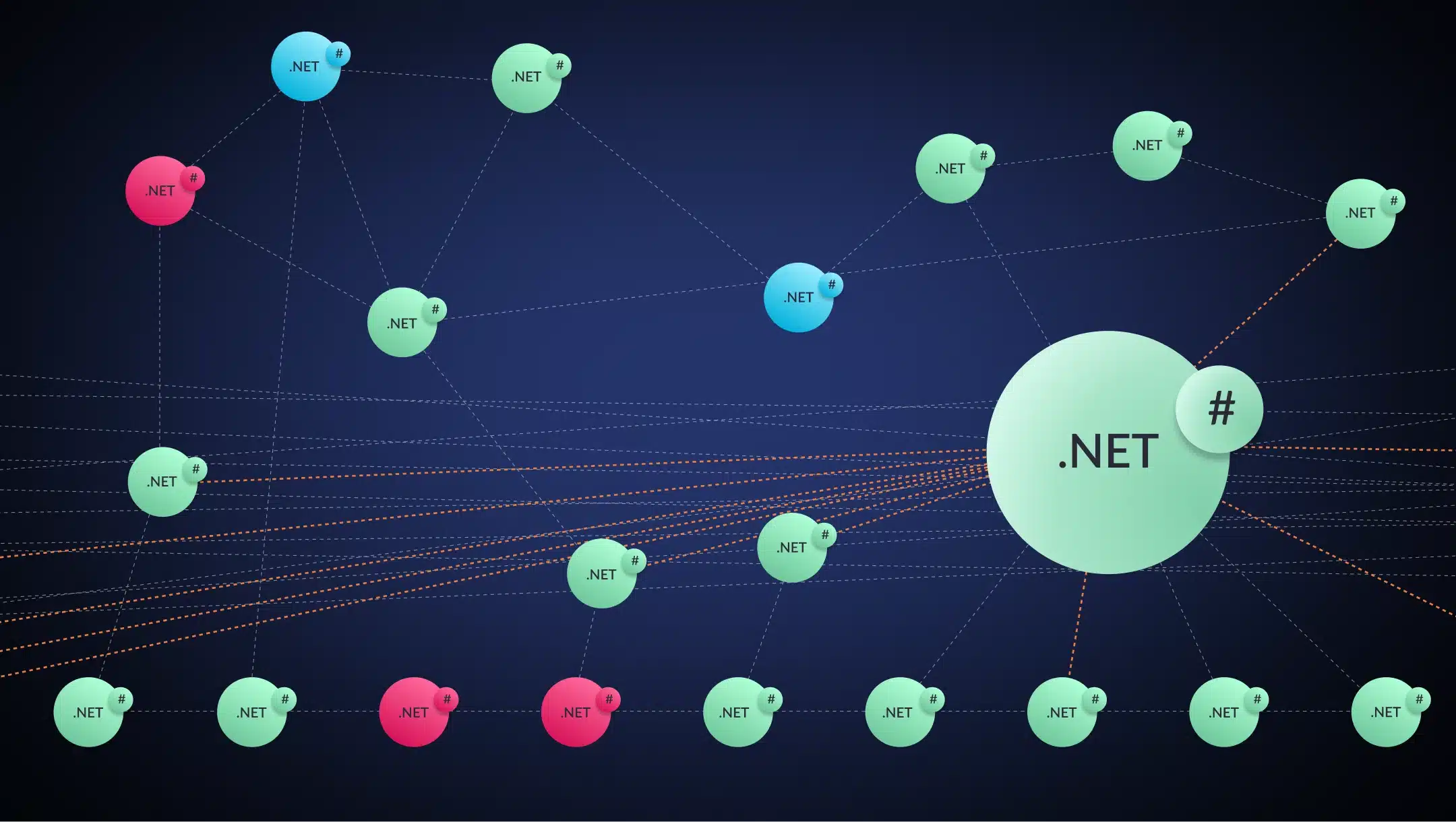

Many of these problems stem from their monolithic architecture. In contrast to today’s architecture, legacy applications contain tight coupling between classes and complex interdependencies, leading to lengthy test and release cycles.

A lack of agility and slow engineering velocity make it onerous to meet customer requirements. Performance limitations imposed by the architecture result in a poor customer experience. Operational expenses are high.

All this leads to a competitive disadvantage for the company and its clients.

Overall, legacy systems are hard to maintain and extend. Their design stifles digital modernization initiatives and hinders the adoption of new technologies like AI, ML, and IoT. Security is another crucial concern. There are no straightforward solutions to these problems. These are just a few reasons architects feel pain with legacy systems.

Let’s examine some issues that legacy systems have and see how modern applications fare in those same areas.

Scalability

The architecture of non-cloud-native applications makes them difficult and expensive to scale. A mature application is still deployed as a monolith, even if parts of it have been “lifted and shifted” to the cloud.

If some parts of the application experience load or performance issues, you cannot scale only those parts. You must scale up the entire application. This would require starting an additional large (or extra-large) compute instance, which can become expensive.

The situation is even more challenging for monolithic applications hosted on-premises or in data centers. It can take weeks to procure new hardware to scale up. There is no elasticity. Once the hardware is provisioned, you are stuck with it, even in times of low usage.

Organizations generate data at ever-increasing rates. They must store the data safely and securely on their own servers. The cost of acquiring more storage can be prohibitive.

Contrast this with a modern application built with microservices. If overall system performance needs a boost, it’s possible to scale only the microservices that are under load. Because the microservices are decoupled from one another rather than bundled into a single monolith, it’s possible to use compute instances more efficiently and keep costs from spiraling out of control.
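To make the cost argument concrete, here is a rough back-of-the-envelope sketch in Python. The hourly prices, the instance sizes, and the "checkout" service name are all hypothetical values chosen for illustration; they are not real cloud pricing.

```python
from typing import Mapping

# Hypothetical hourly prices (illustrative only, not real cloud pricing).
LARGE_INSTANCE_PER_HOUR = 0.40  # instance big enough to host the whole monolith
SMALL_INSTANCE_PER_HOUR = 0.05  # instance big enough for a single microservice


def monolith_scaling_cost(extra_copies: int, hours: float) -> float:
    """Cost of absorbing extra load by running more copies of the entire monolith."""
    return extra_copies * LARGE_INSTANCE_PER_HOUR * hours


def microservice_scaling_cost(extra_replicas: Mapping[str, int], hours: float) -> float:
    """Cost of adding replicas only for the services that are under load."""
    return sum(extra_replicas.values()) * SMALL_INSTANCE_PER_HOUR * hours


# Scenario: only the (hypothetical) "checkout" service is overloaded for 10 hours.
monolith = monolith_scaling_cost(extra_copies=1, hours=10)
micro = microservice_scaling_cost({"checkout": 2}, hours=10)
print(f"monolith: ${monolith:.2f}, microservices: ${micro:.2f}")
```

With these assumed numbers, adding two small replicas of one service costs a fraction of running a second copy of the whole application. The real ratio depends entirely on your own workload and pricing.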

Modern applications hosted on the cloud can add capacity to handle spikes in demand within seconds or minutes. Cloud computing offers elasticity. You can automatically free up excess capacity in times of low usage. So, you trade fixed costs (on-premises data centers and servers) for variable expenses based on your consumption. The latter expenditure tends to be lower because cloud operators enjoy economies of scale.
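Elasticity can be pictured as a simple sizing rule that is re-evaluated as load changes. The sketch below is a simplified illustration, not any provider's autoscaling algorithm; the function name, the per-instance capacity, and the bounds are assumptions.

```python
import math


def desired_instances(requests_per_second: float,
                      capacity_per_instance: float,
                      min_instances: int = 1,
                      max_instances: int = 20) -> int:
    """Return how many instances the current load needs, clamped to the allowed range."""
    needed = math.ceil(requests_per_second / capacity_per_instance)
    return max(min_instances, min(max_instances, needed))


# Simulated load samples over a day (hypothetical requests per second).
for load in [50, 400, 1200, 90]:
    print(load, "->", desired_instances(load, capacity_per_instance=100))
```

The instance count rises with the spike and falls back afterwards, which is what turns a fixed hardware cost into a variable one.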

Long Release Cycles

Software development on monolithic applications typically involves long release cycles. Teams tend to follow traditional development processes like Waterfall. The product team first documents all change requirements.

All concerned must review and sign off on the changes. The architecture is tightly coupled, so many groups are involved. Because of the lack of modularity, they need to exercise due diligence to avoid undesired side effects. Subsequent changes in requirements result in redoing the entire process.

After the developers have made the changes, the QA team tests extensively to ensure that there are no breakages. The release process itself is lengthy. You must deploy the entire application, even if you have changed only a minor part.

If you find any issues post-deployment, you must roll back the release, fix the problem, and repeat the release process. Technical debt makes integrating CI/CD into the legacy application workflow difficult. All this contributes to long release cycles.

Modern application developers, however, follow agile processes. Every team works only on one microservice, which they understand well. Microservices are autonomous and decoupled, enabling teams to work independently. Each microservice can be changed and deployed alone. A failure in one microservice does not impact the whole application.
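One way to picture the failure isolation described above is a caller that degrades gracefully when a dependency is down. This is a minimal sketch; the `with_fallback` helper, the recommendations service, and the page structure are hypothetical names invented for the example.

```python
from typing import Callable, List


def with_fallback(call: Callable[[], List[str]], fallback: List[str]) -> List[str]:
    """Invoke a service call and return `fallback` if the call raises."""
    try:
        return call()
    except Exception:
        # A real system would log the error and typically add timeouts or a circuit breaker.
        return fallback


def broken_recommendations() -> List[str]:
    """Stand-in for a (hypothetical) recommendations service that is currently down."""
    raise ConnectionError("recommendations service unavailable")


def render_product_page() -> dict:
    """The page still renders; only the recommendations section degrades."""
    return {
        "product": "Widget",
        "recommendations": with_fallback(broken_recommendations, fallback=[]),
    }


print(render_product_page())
```

The outage costs the page one section rather than the whole response, which is much harder to arrange when the same logic runs inside a single tightly coupled process.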

Microservices can run in containers, making them easier to deploy, test, and port to different platforms. Many teams use DevOps techniques like Continuous Integration and Continuous Delivery (CI/CD). Consequently, developers make releases quickly, sometimes several times a day.

Most importantly, teams get out of the traditional mindset of long release cycles and into a mode where IT is aligned closely with business priorities.

Accelerating the development process has many advantages. You can go to market faster, gaining a competitive advantage. Customers benefit because they get new features at a rapid clip. The workforce finds more satisfaction in their work.

Long Test Cycles

Legacy applications require a lot of testing effort. There are many reasons for this.

Mature monolithic applications are often poorly documented and understood by only a few employees. As the application ages, the team periodically adds new functionality, but this often happens in a silo, and neither the documentation nor the rest of the organization is reliably updated. Hence, the domain knowledge (what functionality the application contains) is not shared by all the testers on the team.

This, together with tight coupling and dependencies between different classes in the application, means that testers cannot focus only on testing the changes. They must also verify the functionality far afield because unexpected side-effects could have occurred.

Software crews usually develop legacy applications without writing automated unit test cases, so they don’t have a safety net to rely on when making changes. They must proceed cautiously.

In general, there is little or no test automation for monolithic applications. Any automated tests that do exist were typically written long after the code they cover, and they exercise only a small part of the functionality. Creating automated tests for a legacy application is a long-drawn-out process with an unclear ROI, so it’s rarely taken up. Therefore, testers must manually test everything. For large applications, this could take days or even weeks.

Another common issue with legacy applications is the existence of dead code. Dead code refers to code that is not used anymore but is still lurking in the system. Dead code is problematic on many levels.

It makes it difficult for newcomers to understand the application flow. The inactive code could inadvertently become live with catastrophic results. Dead code is also an indicator of poor development culture.
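To illustrate what hunting for dead code can look like, here is a deliberately naive sketch that flags functions that are defined but never referenced by name in a source file. It uses Python's standard `ast` module for brevity. Real dead-code analysis relies on call graphs and runtime data, and this heuristic misses dynamic calls. The function name and the sample code are assumptions made up for the example.

```python
import ast
from typing import Set


def unreferenced_functions(source: str) -> Set[str]:
    """Return names of functions defined in `source` that are never referenced by name."""
    tree = ast.parse(source)
    defined = {
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }
    # Any bare name (foo) or attribute access (obj.foo) counts as a reference.
    referenced = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name):
            referenced.add(node.id)
        elif isinstance(node, ast.Attribute):
            referenced.add(node.attr)
    return defined - referenced


# Hypothetical sample module: legacy_discount is never called.
SAMPLE = '''
def active_discount(price):
    return price * 0.9

def legacy_discount(price):
    return price - 5

print(active_discount(100))
'''

print(unreferenced_functions(SAMPLE))
```

A report like this is only a starting point for review. In a large monolith, a function that looks unused may still be reached through reflection, configuration, or an external caller.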

Hence, testing legacy applications is more of an art, and a risky one.

Testing microservices is a lot easier. Developers write unit tests alongside creating new features. The tests find any breakages that result from code changes. They are repurposed and added to the CI/CD pipelines.

Hence, the process automatically tests all new builds. It is easy to plug gaps in testing, as they are few. The testing time is compressed into the build and deployment cycle and is usually short. Manual testers can focus on exploratory testing. Overall, the product quality is a lot better.
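As an illustration of a unit test written alongside a feature, the sketch below uses Python's standard `unittest` module. The `apply_discount` function and its rules are hypothetical, and `run_tests` is an assumed helper showing how a pipeline step might run the suite and check the outcome.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical feature code: reduce `price` by `percent` percent."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_regular_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 150)


def run_tests() -> bool:
    """Run the suite programmatically; a pipeline step could fail the build on False."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()


print("all tests passed:", run_tests())
```

Because the tests live next to the code and run on every build, a change that breaks `apply_discount` is caught within minutes instead of during a manual test cycle.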

Security and Compliance

Teams working with legacy applications may be unable to implement security best practices and consequently face more vulnerabilities and cybersecurity risks. The application design may make it incapable of supporting features like multi-factor authentication, audit trails, or encryption.

Legacy applications can also pose a security threat because they rely on outdated technology versions that their manufacturers or vendors no longer support, or that vendors recommend upgrading away from. Security updates are vital to keeping systems secure. Interdependencies may make it impossible to upgrade from unsupported older operating systems and other software. Therefore, the IT team may not be able to address even known security issues.

There is extensive documentation on known security flaws in legacy applications and platforms. Because these systems are no longer supported, they don’t receive patches and updates, which leaves them particularly exposed. Attackers are well aware of their vulnerabilities and can exploit them.

If a security breach happens, damage to a company’s reputation takes ages to repair. The public perception that your brand is unsafe may never go away completely.

Many jurisdictions have introduced privacy regulations like the EU’s GDPR to protect personal data. Non-compliance with these regulations can result in hefty penalties and fines. Hence, organizations must modernize their legacy systems to adhere to these requirements. They must change how they acquire, store, transmit, and use personal data. It is a hard task.

Modern applications live on the cloud. Cloud providers make substantial investments to offer state-of-the-art security. The major providers maintain compliance with widely used security and audit standards, such as PCI DSS, SOC 1, and SOC 2. They also promptly apply the latest security patches to the infrastructure they manage.

Inability to Meet Business Requirements

We have seen that legacy applications cannot meet customer demands on time because of long and unpredictable testing and release cycles. Additionally, the monolithic architecture does not scale efficiently when demand surges. Maintaining legacy applications is costly and laborious, and it can begin to take a toll on team morale. It is not easy to find people who are interested or skilled in working with these aging technologies. You must also build yourself any new functionality you want to offer, such as analytics or IoT.

All this results in customer dissatisfaction. Clients look for more nimble and agile alternatives. Lack of performance, reliability, and agility, plus high costs, cause a loss of competitive advantage. They impact productivity and profitability both in your organization and for the products and services you deliver to your customer. You cannot meet your business goals.

The ability to respond swiftly to changing conditions is a key differentiator. IT leaders would like to increase agility and provide better quality service while reducing cost and risk.

Companies with cloud-native applications have a significant advantage because their deployment processes are automated end-to-end. They can release code into production hundreds or even thousands of times every day. So, they can rapidly offer their customers new features.

Cloud providers offer several built-in services that you can leverage. AWS, for instance, provides over 200 services like computing, storage, networking, security, and databases. You can start using them in minutes for a pay-as-you-use fee and quickly scale up your app’s functionality.

Poor Customer Experience

Today’s consumers expect a high-quality user experience. Exceptional customer experience allows a product to stand out in a crowded marketplace. It leads to higher brand awareness and increases customer retention, Average Order Value (AOV), and Customer Lifetime Value (CLTV).

Consumers presume immediacy and convenience in their interactions with customer service. Whether they are looking for information, wanting to report an issue, or seeking an update on an earlier request, they want fast and accurate answers. A long wait time has a negative impact.

Slow performance and high latency plague legacy applications even during simple transactions. To this, add buggy app experiences and inadequately addressed business requirements. This leads to a poor customer experience.

Legacy systems suffer from compatibility issues. They work with data formats that may be out of fashion or obsolete. For example, they may generate reports as text files instead of PDFs, and may not integrate with monitoring, observability, and tracing technologies.

Customers are now used to accessing services online using a device of their choice at a time that suits them. But most legacy systems don’t support mobile applications. Modern applications can provide 24/7 customer service using AI-powered chatbots.

Modern applications can also use RPA (Robotic Process Automation) to automate the mechanical parts of a call center employee’s job. Businesses with legacy applications must invest in call centers staffed by expensive personnel or provide support only during business hours.

Legacy applications might serve their customers from on-premises servers located far away. Cloud-based applications can be deployed to servers close to customers anywhere, reducing latency.

Mitigating Architects’ Pains with Legacy Systems

We have seen the pains architects have with legacy systems and how modern applications alleviate these problems. But migrating from a monolith to microservices is an arduous undertaking.

Every organization’s needs are different, and a one-size-fits-all approach won’t work. Architects must start with an assessment of their existing systems. It may be possible to rehost some parts of the application, whereas others will first need refactoring. They must also implement process- and tool-related modernization changes, such as containers and CI/CD pipelines.

So, modernizing is a complicated process, especially when done manually and from the ground up.

Instead, it is much more predictable and less risky when performed with an automated modernization platform. A platform-centric approach provides a framework with proven best practices that have worked with multiple organizations. vFunction is an application modernization platform built with intuitive algorithms and an AI engine. It is the first and only platform for developers and architects that automatically decomposes legacy monolithic Java applications into microservices. The platform helps in overcoming all the issues inherent in monolithic applications.

Engineering velocity increases, customer experience improves, and the business enjoys all the benefits of being cloud-native. vFunction enables the reuse of the resulting microservices across many projects. The platform approach leads to a scalable and repeatable factory model. To see how we can help with your modernization needs, request a demo today.