Kubernetes is an open-source platform for automating the deployment, scaling, and management of containerized applications. It is often described as the operating system of the cloud. A Kubernetes cluster comprises a control plane (the brain) and worker nodes that run your workloads. Here we’ll discuss whether it is worth starting the modernization journey with Kubernetes for legacy Java applications.

Start Your App Modernization Journey with Kubernetes for Legacy Java Applications

We can look at how organizations have traditionally deployed applications to understand how Kubernetes is useful.

In earlier days, organizations ran their applications directly on physical servers. This resulted in resource contention and conflicts between applications. One application could monopolize a system resource (CPU, memory, or network bandwidth), starving other applications. So, the performance of those applications would suffer.

One solution to this problem was to run each application on a separate server. But this approach had two disadvantages – underutilization of compute resources and escalating server costs.

Another solution was virtualization. Virtualization involves creating Virtual Machines (VMs). A VM is a virtual computer that runs its own operating system and is allocated a share of the physical resources on the host system.

In this model, each VM typically runs one application, and several VMs can run on a single server. The VMs isolate applications from each other. Virtualization offers scalability, as one can add or remove VMs when needed. It also makes better use of server resources. Hence, it is an excellent solution and is still popular.

Next came containers, popularized by Docker. Containers are like VMs, but they share the host operating system’s kernel with other containers instead of each running a full operating system. Hence, they are comparatively lightweight. A container packages an application together with its dependencies, so it is largely independent of the underlying infrastructure (server) and portable across different clouds and operating system distributions. So, containers are a convenient way to package and deploy applications.

In a production environment, engineers must manage the containers running their apps. They must add containers to scale up and replace or restart a container that has gone down. They must regularly upgrade the application. If all this could be done automatically, life would be easier, especially when dealing with thousands or millions of containers.

This is where Kubernetes comes in. It provides a framework that can run containers reliably and resiliently. It automates the deployment, scaling, upgrading, and general management of containers. Kubernetes was originally developed by Google, which drew on years of experience running containers at massive scale on its internal systems, and it is now maintained by the Cloud Native Computing Foundation. In the past few years, Kubernetes has become the leading container orchestration platform.
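To make the scaling part concrete: the Kubernetes Horizontal Pod Autoscaler documents its core rule as desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric). The sketch below applies that rule in Python. The function name and the sample numbers are illustrative, and the sketch ignores the tolerance band and the minimum/maximum replica bounds that a real autoscaler also applies.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float) -> int:
    """Replica count suggested by the basic Horizontal Pod Autoscaler rule."""
    if current_replicas < 1 or target_metric <= 0:
        raise ValueError("need at least one replica and a positive target")
    # Multiply first, then divide, to keep the arithmetic exact for round numbers.
    return math.ceil(current_replicas * current_metric / target_metric)


# 4 pods averaging 90% CPU against a 60% target: scale up.
print(desired_replicas(4, 90, 60))  # 6
# 6 pods averaging 20% CPU against a 60% target: scale down.
print(desired_replicas(6, 20, 60))  # 2
```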

What is a Kubernetes Operator?

Managing stateful applications running on Kubernetes is difficult. The Kubernetes Operator helps handle such apps. A Kubernetes Operator is a method of packaging, deploying, and managing a Kubernetes application, typically a stateful one. The Operator uses Kubernetes APIs to manage the lifecycle of the software it controls.

An Operator can manage a cluster of servers. It knows the configuration details of the applications running on these servers. So, it can create the cluster and deploy the applications. It can monitor and manage the applications, update them with newer versions, and automatically restart them if they fail. The Operator can take regular backups of the application data.

In short, the Kubernetes Operator replaces a human operator who would otherwise have performed these tasks.
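The core of an Operator is a reconciliation loop: compare the desired state with the actual state and act on the difference. The following is a minimal in-memory sketch of that idea, not a real Operator. The class and function names are hypothetical, and a real Operator would talk to the Kubernetes API instead of mutating a Python list.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DesiredState:
    replicas: int
    version: str


@dataclass
class Instance:
    name: str
    version: str
    healthy: bool = True


@dataclass
class Cluster:
    instances: List[Instance] = field(default_factory=list)
    started: int = 0

    def start(self, version: str) -> Instance:
        self.started += 1
        instance = Instance(f"db-{self.started}", version)
        self.instances.append(instance)
        return instance


def reconcile(desired: DesiredState, cluster: Cluster) -> List[str]:
    """Move the cluster toward the desired state and report the actions taken."""
    actions = []
    # Remove failed instances; the scaling step below replaces them.
    for inst in [i for i in cluster.instances if not i.healthy]:
        cluster.instances.remove(inst)
        actions.append(f"removed failed instance {inst.name}")
    # Upgrade instances that run an outdated version.
    for inst in cluster.instances:
        if inst.version != desired.version:
            inst.version = desired.version
            actions.append(f"upgraded {inst.name} to {desired.version}")
    # Scale up or down to the requested replica count.
    while len(cluster.instances) < desired.replicas:
        actions.append(f"started {cluster.start(desired.version).name}")
    while len(cluster.instances) > desired.replicas:
        actions.append(f"stopped {cluster.instances.pop().name}")
    return actions


cluster = Cluster()
desired = DesiredState(replicas=3, version="8.0")
print(reconcile(desired, cluster))    # starts three instances
cluster.instances[0].healthy = False  # simulate a crash
print(reconcile(desired, cluster))    # removes the failed one, starts a replacement
```

Running `reconcile` again when nothing has changed returns an empty list, which is the property that lets an Operator run the same loop continuously.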

How Should You Run Kubernetes?

There are many options for running Kubernetes. Keep in mind that you will not just need to set up the Kubernetes clusters one time, but you’ll also need to make frequent changes and upgrades.

- Host and Manage Your Clusters: You do everything yourself. This will require a significant investment of time and effort.

- Start with Managed Services: The provider installs and runs Kubernetes on their servers and takes care of most of the administrative overhead. You get a fully working, production-grade cluster in minutes. Pricing varies by provider and cluster size, so check current rates before committing. There are many good options: Google Kubernetes Engine (GKE), Amazon Elastic Kubernetes Service (EKS), Azure Kubernetes Service (AKS), and IBM Cloud Kubernetes Service.

- Kubernetes Installers: This is a suitable option if you want to self-host Kubernetes. Installer tools and services help you set up and manage your Kubernetes clusters. Popular tools include kOps, kubeadm, Kubespray, Rancher Kubernetes Engine (RKE), and Puppet Kubernetes Module.

- Multi-Cloud Kubernetes Clusters: There are tools available to manage workloads for organizations that deploy apps across multiple clouds. Popular tools are VMware Tanzu Mission Control, Red Hat OpenShift, and Google Anthos.

Adopting Kubernetes for Legacy Java Technologies

Let’s look at how using Kubernetes (or Kubernetes Operators) alone, instead of completely modernizing your applications, makes it easier to work with many traditional Java frameworks, application servers, and databases.

Kubernetes for Java Frameworks

Spring Boot, Quarkus, and Micronaut are popular frameworks for building Java applications in a modern way.

Using Spring Boot with Kubernetes

Spring Framework is a popular, open-source, enterprise-grade framework for building applications that run on the Java Virtual Machine (JVM). Spring Boot builds on Spring to let you create standalone, production-ready web applications and microservices quickly, with minimal configuration.

Deploying a Spring Boot application to Kubernetes involves a few simple steps:

1. Create a Kubernetes cluster, either locally or on a cloud provider.

2. If you have an existing Spring Boot application, clone its repository. Otherwise, create a new application. Make sure that the application has some HTTP endpoints.

3. Build the application. A successful build results in a JAR file.

4. Build a container image for the application using Maven, Gradle, or your favorite tool.

5. You need a YAML specification file to run the containerized app in Kubernetes. Write the YAML manually or generate it with the kubectl command.

6. Now deploy the app on Kubernetes, again using kubectl.

7. You can check whether the app is running by invoking the HTTP endpoints using curl.

Check out the Spring documentation for a complete set of the commands needed.
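As a rough illustration of the specification in step 5, the sketch below builds a Deployment and a Service as Python dictionaries and prints them as JSON, which kubectl accepts as well as YAML. The application name and image are hypothetical placeholders. Port 8080 is Spring Boot's default HTTP port, but your application may use another.

```python
import json


def deployment(name: str, image: str, replicas: int = 2, port: int = 8080) -> dict:
    """Deployment that runs `replicas` copies of the container image."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {"name": name, "image": image, "ports": [{"containerPort": port}]}
                    ]
                },
            },
        },
    }


def service(name: str, port: int = 8080) -> dict:
    """Service that routes traffic to the pods labeled app=<name>."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {"selector": {"app": name}, "ports": [{"port": port, "targetPort": port}]},
    }


# Hypothetical application name and image reference.
manifest = {
    "apiVersion": "v1",
    "kind": "List",
    "items": [
        deployment("demo", "registry.example.com/demo/spring-app:0.0.1"),
        service("demo"),
    ],
}
print(json.dumps(manifest, indent=2))
```

Saving the output to a file such as `demo.json` and running `kubectl apply -f demo.json` would cover step 6.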

Using Quarkus with Kubernetes

Quarkus aims to combine the benefits of the feature-rich, mature Java ecosystem with the operational advantages of Kubernetes. Quarkus auto-generates Kubernetes resources based on defaults and user-supplied configuration for Kubernetes, OpenShift, and Knative. It creates the resource files using Dekorate (a tool that generates Kubernetes manifests).

Quarkus can then deploy the application to a target Kubernetes cluster by applying the generated manifests to the cluster’s API server. It can also build a container image and push it to a registry before deploying the application to the target platform.

The following steps describe how to deploy a Quarkus application to a Kubernetes cluster on Azure. The steps for deploying it on other cloud platforms are similar.

1. Create a Kubernetes cluster on Azure.

2. Install the Kubernetes CLI on your local computer.

3. From the CLI, connect to the cluster using kubectl.

4. Azure expects web applications to run on port 80. Update the Dockerfile.native file to reflect this.

5. Rebuild the Docker image.

6. Install the Azure Command Line Interface.

7. Deploy the container image, either to the Kubernetes cluster or to Azure App Service on Linux Containers (the latter option provides scalability, load-balancing, monitoring, logging, and other services).

Quarkus also includes a Kubernetes Client extension that lets an application interact with the Kubernetes API, which is the foundation for building Kubernetes Operators in Java.

Using Kubernetes with Micronaut

Micronaut is a modern, full-stack Java framework that supports the Java, Kotlin, and Groovy languages. It tries to improve over other popular frameworks, like Spring and Spring Boot, with a fast startup time, reduced memory footprint, and easy creation of unit tests.

The Micronaut Kubernetes project simplifies the integration between the two by offering the following facilities:

- It contains a Service Discovery module that allows Micronaut clients to discover Kubernetes services.

- The Configuration module can read Kubernetes’ ConfigMaps and Secrets instances and make them available as PropertySources in the Micronaut application. Then any bean can read the configuration values using @Value (or any other method). The Configuration module will monitor changes in the ConfigMaps, propagate them to the Environment, and refresh it. So, these changes will be available immediately in the application without a restart.

- The Configuration module also includes a KubernetesHealthIndicator that reports information about the pod in which the application is running.

- Overall, the library makes it easy to deploy and manage Micronaut applications on a Kubernetes cluster.

Kubernetes for Legacy Java Application Servers

Java Application Servers are web servers that host Java EE applications. They provide Java EE specified services like security, transaction support, load balancing, and managing distributed systems.

Popular Java application servers include Apache Tomcat (strictly a servlet container rather than a full Java EE server), Red Hat JBoss EAP and WildFly, Oracle WebLogic, and IBM WebSphere. Businesses have been using them for years to host their legacy Java applications. Let’s see how you can use them with Kubernetes.

Using Apache Tomcat with Kubernetes

Here are the steps to deploy Tomcat-based Java applications on Kubernetes using the Tomcat Operator:

1. Build the Tomcat Operator from the source code on GitHub.

2. Push the Operator image to a container registry such as Docker Hub.

3. Deploy the Operator image to a Red Hat OpenShift cluster.

4. Now deploy your application using the Operator’s custom resources.

You can also deploy an existing WAR file to the Kubernetes cluster.

Using Red Hat OpenShift / JBoss EAP / WildFly with Kubernetes

Red Hat OpenShift is a Kubernetes platform that offers automated operations and streamlined lifecycle management. It helps operations teams provision, manage, and scale Kubernetes platforms. The platform can bundle all required components like libraries and runtimes and ship them as one package.

To deploy an application in Kubernetes, you must first create an image with all the required components and containerize it. The JBoss EAP Source-to-Image (S2I) builder tool creates these images from JBoss EAP applications. Then use the JBoss EAP Operator to deploy the container to OpenShift.

The JBoss EAP Operator simplifies operations while deploying applications. You only need to specify the image and the number of instances to deploy. It supports critical enterprise functionality like transaction recovery and EJB (Enterprise JavaBeans) remote calls.

Some benefits of migrating JBoss EAP apps to OpenShift include reduced operational costs, improved resource utilization, and a better developer experience. In addition, you get the Kubernetes advantages in maintaining, running, and scaling application workloads.

Thus, using OpenShift simplifies legacy application development and deployment.

Using Oracle WebLogic with Kubernetes

Oracle’s WebLogic server runs some of the most mission-critical Java EE applications worldwide.

You can deploy the WebLogic server in self-hosted Kubernetes clusters or on Oracle Cloud. This combination offers the advantages of automation and portability. You can also easily customize multiple domains. The Oracle WebLogic Server Kubernetes Operator simplifies creating and managing WebLogic Servers in Kubernetes clusters.

The operator enables you to package your WebLogic Server installation and application into portable images. This, along with the resource description files, allows you to deploy them to any Kubernetes cluster where you have the operator installed.

The operator supports CI/CD processes. It facilitates the integration of changes when deploying to different environments, like test and production.

The operator uses Kubernetes APIs to perform provisioning, application versioning, lifecycle management, security, patching, and scaling.

Using IBM WebSphere with Kubernetes

IBM WebSphere Application Server is a flexible and secure Java server for enterprise applications. It provides integrated management and administrative tools, centralized logging, monitoring, and many other features.

IBM Cloud Pak for Applications is a containerized software solution for modernizing legacy applications. The Pak comes bundled with WebSphere and Red Hat OpenShift. It enables you to run your legacy applications in containers and deploy and manage them with Kubernetes.

Kubernetes with Legacy Java Databases

For orchestrators like Kubernetes, managing stateless applications is a breeze. However, they find it challenging to create and manage stateful applications with databases. Here is where Operators come in.

In most organizations, Database Administrators create database clusters in the cloud and secure and scale them. They watch out for patches and upgrades and apply them manually. They are also responsible for taking backups, handling failures, and monitoring load and efficiency.

All this is tedious and expensive. But Kubernetes Operators can perform these tasks automatically without human involvement.

Let us look at how they help with two popular database platforms, MySQL and MongoDB.

Using MySQL with Kubernetes

Oracle has released the open-source MySQL Operator for Kubernetes. It is a Kubernetes controller that you install inside a Kubernetes cluster. The MySQL Operator uses Custom Resource Definitions to extend the Kubernetes API. It watches the API server for custom resources relating to MySQL and acts on them. The operator makes running MySQL inside Kubernetes easy by abstracting complexity and reducing operational overhead. It manages the complete lifecycle with automated setup, maintenance, upgrades, and backup.

Here are some tasks that the operator can automate:

- Create and scale a self-healing MySQL InnoDB cluster from a YAML file

- Back up a database and archive it in object storage

- List backups and fetch a particular backup

- Back up databases according to a defined schedule

If you are planning to deploy MySQL inside Kubernetes, the MySQL Operator can do the heavy lifting for you.
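As a sketch of what the cluster definition in the first item of the list above might look like, the code below builds a custom resource as a Python dictionary and prints it as JSON, which kubectl accepts in place of YAML. The `apiVersion`, `kind`, and field names are assumptions modeled on the operator's sample manifests and should be verified against the operator's documentation for your version. The cluster and secret names are hypothetical.

```python
import copy
import json

# Assumed shape of an InnoDBCluster custom resource; verify the field names
# against the MySQL Operator documentation before using it.
innodb_cluster = {
    "apiVersion": "mysql.oracle.com/v2",
    "kind": "InnoDBCluster",
    "metadata": {"name": "mycluster"},       # hypothetical cluster name
    "spec": {
        "secretName": "mypwds",              # hypothetical Secret holding root credentials
        "tlsUseSelfSigned": True,
        "instances": 3,                      # number of MySQL server instances
        "router": {"instances": 1},          # number of MySQL Router instances
    },
}


def scaled(resource: dict, instances: int) -> dict:
    """Return a copy of the resource with a new instance count."""
    updated = copy.deepcopy(resource)
    updated["spec"]["instances"] = instances
    return updated


print(json.dumps(innodb_cluster, indent=2))
# Scaling is declarative: change the desired count and apply the resource again.
print(json.dumps(scaled(innodb_cluster, 5)["spec"], indent=2))
```

The point of the example is the workflow rather than the exact fields: you declare the desired cluster, and the operator does the work of reaching that state.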

Using MongoDB with Kubernetes Operator

MongoDB is an open-source, general-purpose, NoSQL (non-relational) database manager. Its data model allows users to store unstructured data. The database comes bundled with a rich set of APIs.

MongoDB is very popular with developers. However, manually managing MongoDB databases is time-consuming and difficult.

MongoDB Enterprise Kubernetes Operator: MongoDB has released the MongoDB Enterprise Operator. The operator enables users to deploy and manage database clusters from within Kubernetes. You can specify the actions to be taken in a declarative configuration file. Here are some things you can do with MongoDB using the Enterprise Operator:

- Deploy and scale MongoDB clusters of any size

- Specify cluster configurations like security settings, resilience, and resource limits

- Enable centralized logging

Note that the Enterprise Operator performs its activities through Ops Manager, the MongoDB management platform.

Running MongoDB using Kubernetes is much easier than doing it manually.

MongoDB comes in many flavors. The MongoDB Enterprise Operator supports the MongoDB Enterprise version, the Community Operator supports the Community version, and the Atlas Operator supports the cloud-based database-as-a-service Atlas version.

How do Kubernetes (and Docker) make a difference with legacy apps?

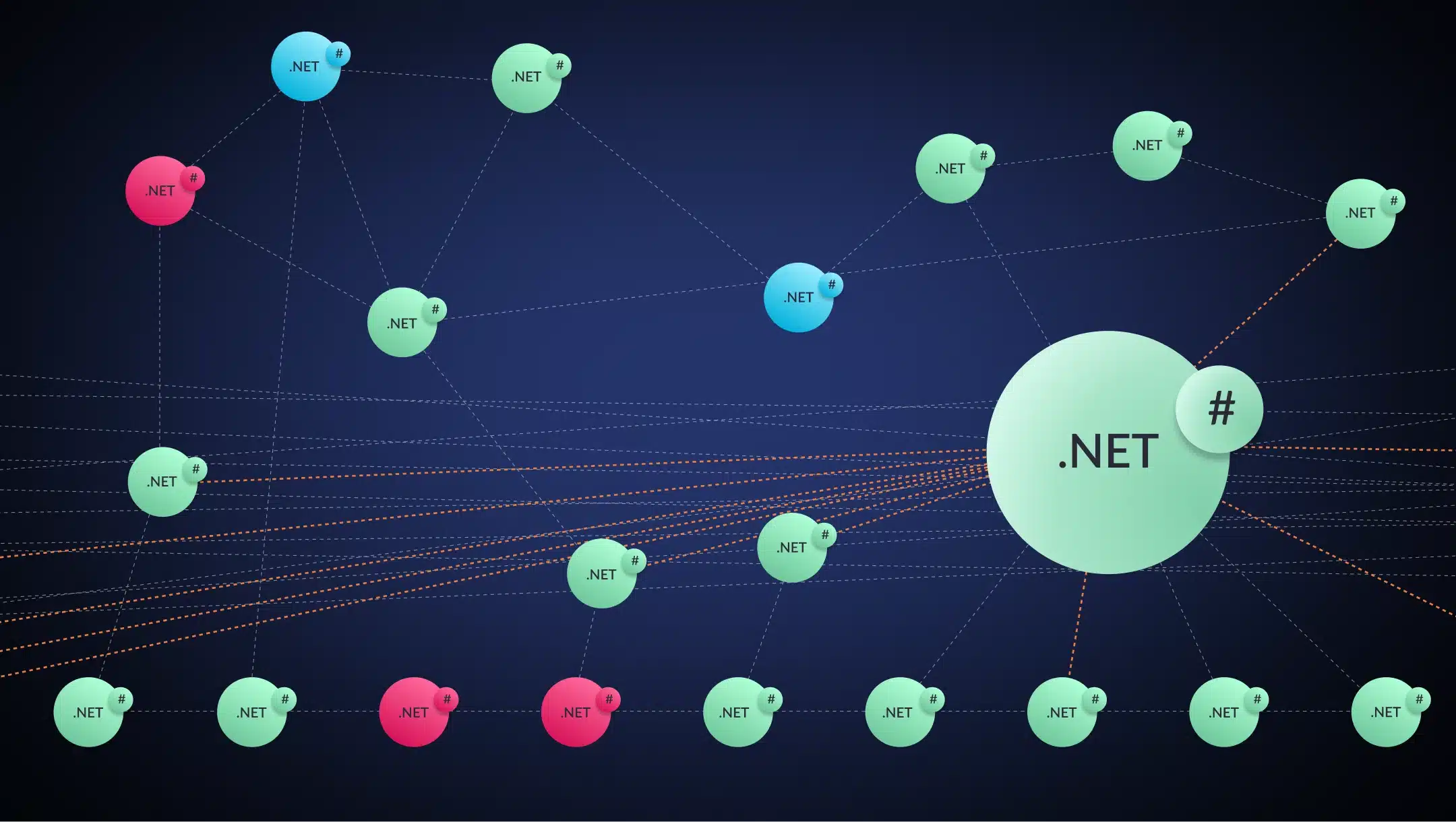

Using Kubernetes provides tactical modernization benefits but not strategic gains (re-hosting compared to refactoring).

When legacy Java applications use Kubernetes (or Kubernetes Operators) with Docker, they immediately get some benefits. To recap, they are:

Improved security: Container platforms have security capabilities and processes baked in. One example is the concept of least privilege. It is easy to add additional security tools. Containers provide data protection facilities, like encrypted communication between containers, that apps can use right away.

Simplified DevOps: Deploying legacy apps to production is error-prone because the team must individually deploy executables, libraries, configuration files, and other dependencies. Failing to deploy even one of these dependencies or deploying an incorrect version can lead to problems.

But when using containers, developers build an image that includes the code and all other required components. Then they deploy these images in containers. So, nothing is ever left out. With Kubernetes, container deployment and management are automated, simplifying the DevOps process.

This approach has some drawbacks. There is no code-level modernization and no architectural change: the original monolith stays intact. Technical debt is not reduced, because the code remains untouched. Scalability is limited, because the entire application (now inside a container) has to be scaled as a unit.

With modern applications, we can scale individual microservices. A monolith created long ago with older technology versions still suffers from unexpected linkages and poorly understood code. Hence, there is no increase in development velocity.
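A rough, purely illustrative calculation shows why scaling a containerized monolith as a unit is costly. The module names and memory figures below are hypothetical, and the sketch ignores the per-service overhead that a real microservices split would add.

```python
# Hypothetical memory footprint (GiB) of each functional area inside the monolith.
modules = {"catalog": 2, "orders": 2, "checkout": 1, "reports": 3}


def monolith_memory(instances: int) -> int:
    """Every instance of the monolith carries all modules."""
    return instances * sum(modules.values())


def microservices_memory(replicas: dict) -> int:
    """Each service scales on its own; unlisted services run one replica."""
    return sum(gib * replicas.get(name, 1) for name, gib in modules.items())


# Suppose only checkout needs three times its usual capacity.
print(monolith_memory(3))                     # 24
print(microservices_memory({"checkout": 3}))  # 10
```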

Using Kubernetes is a Start, but What If You Want to Go Further?

Enterprises use containers to build applications faster, deploy to hybrid cloud environments, and scale automatically and efficiently. Container platforms like Docker provide a host of benefits. These include increased ease and reliability of deployment and enhanced security. Using Kubernetes (directly or with Operators) with containers makes the process even better.

We have seen that using Kubernetes with unchanged legacy applications has many advantages. But these advantages are tactical.

Consider a more transformational form of application modernization to get the significant strategic advantages that will keep your business competitive. This would include breaking up legacy Java applications into microservices, creating CI/CD pipelines for deployment, and moving to the cloud. In short, it involves making your applications cloud-native.

Carrying out such a full-scale modernization can be risky. There are many options to consider and many choices to make. It involves a lot of work. You’ll want some help with that.

vFunction has created a repeatable platform that can transform legacy applications to cloud-native, quickly, safely, and reliably. Request a demo to see how we can make your transformation happen.