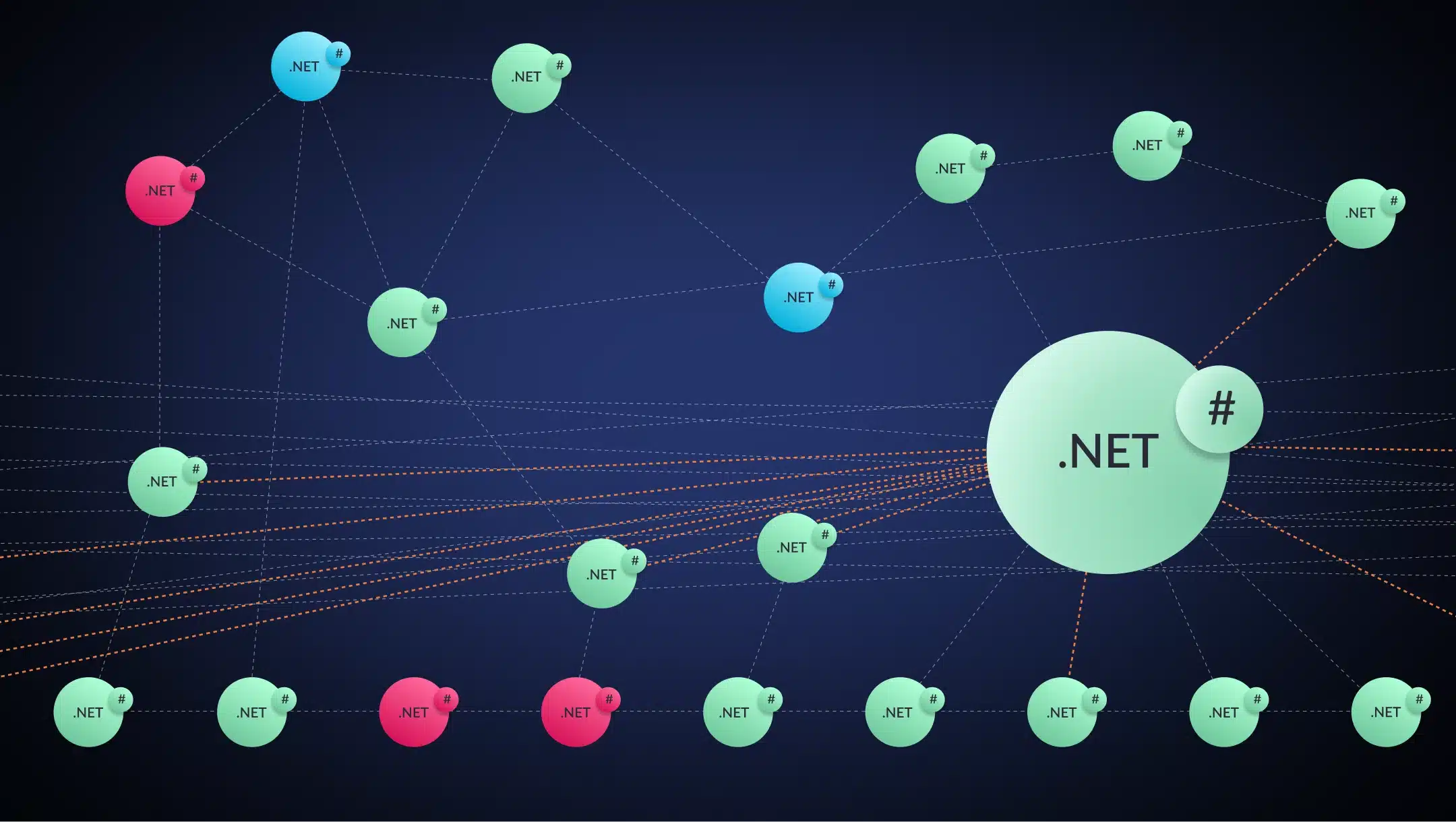

I'm very happy to introduce the two people who will be walking us through the subject today. We have Michael Chiaramonte coming to us from Long Island, New York. Michael is our principal architect here at vFunction. He works very closely with engineering teams to modularize their monolithic applications and turn them into cloud-ready services. We also have Sam Cole from vFunction's UK office, who works with partners and customers around the world on modernizing complex applications and getting them ready for the cloud. We've summarized here, on everybody's behalf, what the challenges are in the LLM space when it comes to large-scale modernization. I think it generalizes what we've seen across the board, what we've read, and what we've intuited from our customers and the market in general. Yeah, and so we're going to cover these just to give you a summary right now. So, lack of domain context, which is really about understanding software architecture. Runtime awareness: how do applications really run in real life, and is that the same as what it looks like in the source code? Fragmented global view, which we just covered, actually. We've just looked at that complex application. How big does an application need to be for the LLM to start to struggle and not be able to see the full picture? Operational blind spots, so not understanding real-life examples. And then the first one we're going to look at is prompt fragility and inconsistency. How do we actually go about asking our code assistants to do this on our behalf? So let's start here, in a simplistic way. I'm going to give you a prompt, Michael, as my code assistant: "Modernize my application." I hope it responds with something like, "Okay, good luck." Because, I mean, that prompt is obviously too vague and too short.
And I think that's kind of the problem with the narrative that we're seeing out in the market from the AI companies themselves, in some ways, and from the proponents of AI. I mean, there was that white paper put out recently about Anthropic saying that they used Claude to refactor all that COBOL code in a sample application that AWS has as part of their mainframe modernization platform. And from an outsider's perspective, it looks really great, because it's like, oh, COBOL, that thing that nobody uses anymore, and now we're going to Java, which is still widely used. It looks really enticing, but the thing that we're missing is a lot of context around why this is a problem. Context is going to become a recurring theme here, right? Because for many years, even without AI, translation of source code from one language to another has been solved in some ways. We've got tools that have parsed languages and turned them into other languages. And while it's cool that the AI was able to do that and replicate the behavior, taking the same input and generating the same output, so much so that it caused IBM stock to drop quite significantly, unfortunately it's completely missing the larger context of, like, does mainframe still matter today? Right? It's that larger architectural view of the way a mainframe processes transactions, how the system is built, and how you would have to turn those COBOL applications into something completely different from what they are today. Next to that, the translation of COBOL to Java is kind of trivial, right? Like, that's not the real problem. It's a problem, and it's certainly one that needs to be solved if you don't have COBOL programmers, but it doesn't fully solve the bigger issue. And again, lack of context really is hurting that situation. I thought it was going to be easy to use a code assistant: just give it a simple prompt and let it go ahead on my behalf. But yeah, I need to give it quite a bit more information.
So that leads us nicely on to domain context. So what is software architecture all about? This is an image from vFunction, for anybody who's not seen it, and it represents the software architecture for an order management application. Yeah, we're using this just because it's kind of easy to visualize. If you go back to maybe your CS101 days, or maybe later on, because systems architecture is a little bit more complicated than just writing code: when you're building a larger system, you're thinking about it in terms of the capabilities that it has, right? In this case, the order management system, it's going to do shipping and order handling and processing credit transactions and things like that, right? And so when you're building an application, you have this mental model of where the code all fits together. And maybe initially the code that you've built actually represents that, right? But as time goes on, the application becomes more and more complicated as people use it and ask for new features, and those boundaries break down. What's nice about this architecture that we're looking at is that there's very little cross-communication. When we had that static view, it was a big mess of lines, or as our CTO likes to say, a big mesh, right? You know, it's this whole thing that is all interconnected. These are domain boundaries. These are functional areas of the code that are intercommunicating, but it's not too complicated, right? There are only a few things connecting orders, fulfillment, and payments. So it's a very straightforward, well-contained application. This one is more complicated, right? It's got about 4,300 classes. Again, not the largest application we've looked at by any means. But you can see there's that hub-and-spoke design on the right-hand side where you have, oh, we're in Zoom, so I can draw.
So we've got this nice hub-and-spoke design here. You've got the central hub and the lines radiating out, which are really great, because that means that those components are kind of on their own, doing their own thing. And then you have a little bit more complexity here in this section, where you have functional domains that are well defined, but they're communicating with one another, doing things that involve the other domains. But again, it's not as crazy as that other diagram we looked at, where it was just everything everywhere all at once. So let's maybe look at some bad architecture in the source code, if you could show that, Michael. So, I mean, that's the thing, right? Everybody wants it to be something that can be looked at in the source code. And the problem with that is that the source code doesn't really tell the architectural picture. There are a lot of factors there. I mean, even with the prompt that you had before, "modernize my application," right? What does "modernize" mean to you? Are you looking for framework upgrades? Are you looking for technology upgrades, language upgrades? Does the whole thing need to be re-architected because it's a giant monolith and you want to turn it into microservices? So there are a lot of questions left unanswered by a vague prompt like that. And similarly with source code, the best you can do is interpret the intent of the developers. But like I said, over time that intent becomes less and less clear as developers try to get new functionality in as quickly as possible. And this isn't a statement of blame or anything. That's the reality of building large, complex systems: you need to add new features that didn't necessarily fit into your worldview when you built the system.
And that lack of context and understanding in the code makes it very difficult, if not almost impossible, to understand the true architectural intent of the application by just looking at the code. Sure, you can look at good coding practices: are you doing something that's inherently insecure, like using HTTP instead of HTTPS, or doing something completely bonkers, like including API keys in your source code that shouldn't be there, right? Those things coding assistants actually do really well, but the other, more difficult architectural stuff is really hard for them. Yeah, so they can't see the structure. That's obviously a bit of a limitation when you're trying to modernize something, if you can't see how it works. But you made another good point there, Michael. I've got a nice section on runtime awareness. Applications in a running state are obviously a little bit different from applications in the source code. So I have a screen here, again from vFunction. I'll just play this as a demo. vFunction does some dynamic analysis of an application to see and understand it in its running state. The key point here is just showing that we're dealing with real runtime systems, right? There are circular dependencies, and there's this concept we have of exclusive and non-exclusive classes. All it means is that you've built a business domain that has functionality that's shared by other business domains. So there are cross-cutting capabilities that shouldn't be shared. And then we also track things like data access, right? Because ultimately certain tables should be owned by certain capabilities within the application. We see a lot of times that that's not the case. And that's like a second layer of refactoring that needs to be done.
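To make the exclusive versus non-exclusive idea a bit more concrete, here is a minimal Python sketch. It is not how vFunction implements its analysis; the observation format, the domain and class names, and the `classify_classes` function are all hypothetical, and it assumes you already have a list of which classes were seen at runtime while serving each business domain.

```python
from collections import defaultdict

# Hypothetical runtime observations: (business domain, class seen while serving it).
# In practice this data would come from profiling, not from a hand-written list.
observed_calls = [
    ("orders", "OrderService"),
    ("orders", "PriceCalculator"),
    ("payments", "PaymentGateway"),
    ("payments", "PriceCalculator"),
    ("fulfillment", "ShippingPlanner"),
]


def classify_classes(calls):
    """Split classes into exclusive (used by one domain) and non-exclusive (shared)."""
    domains_by_class = defaultdict(set)
    for domain, cls in calls:
        domains_by_class[cls].add(domain)

    exclusive = {}
    non_exclusive = {}
    for cls, domains in domains_by_class.items():
        if len(domains) == 1:
            exclusive[cls] = next(iter(domains))
        else:
            non_exclusive[cls] = sorted(domains)
    return exclusive, non_exclusive


exclusive, non_exclusive = classify_classes(observed_calls)
print("exclusive:", exclusive)
print("non-exclusive:", non_exclusive)
```

In this toy data, `PriceCalculator` shows up under both orders and payments, so it is the shared, cross-cutting class. The same grouping would work for table ownership if the second element of each pair were a table name instead of a class name.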
And sometimes that stuff is really difficult even for developers to see when looking at the code, because of multiple layers of abstraction through things like class inheritance or dependency injection, right? All these systems are meant to simplify development, because the coder can focus on what they're trying to implement feature-wise, but they hide a lot of the details under the covers through abstraction layers and libraries and things like that. We can actually see those details at runtime, because they get exposed through profiling the call stack, right? It becomes a deeper view into what's actually happening in the application, which is extremely important to understand. So how would people go about modernizing this application, then? Now that we can see that logical construct, and we can see and understand the runtime complications, how do we modernize this one? Right, so, I mean, for this, it's really down to the team understanding what their goal for modernization is, right? And we have this ability to generate these to-dos, right? With any AI assistant, no matter what kind of refactoring you're doing, you need to work with it just like you would with a human, right? You're building out sets of tasks and documentation. And the reason you're doing that is that you're building up the context for these AI agents to understand what it is you're trying to do. So Sam, what you're showing here is one of the to-dos that we have as a task for modernizing this. The modernization plan, really. Yeah, yeah. We can generate prompts and help you with those agents, right? But more so, this is really just meant to help you see: this is a six-page prompt that we've generated based on the context. And this seems like a lot, but if you think about how much information you have to give on a large application for a coding assistant to know what you want, it's going to be six pages.
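As a rough illustration of why that prompt gets long, here is a small Python sketch that turns one structured to-do into prompt text. Everything in it is an assumption made for illustration: the `TodoContext` fields, the `build_prompt` function, and the sample values are hypothetical and are not vFunction's actual prompt format.

```python
from dataclasses import dataclass, field


@dataclass
class TodoContext:
    """Hypothetical container for the context attached to one modernization to-do."""
    title: str
    goal: str
    domains: list
    shared_classes: list = field(default_factory=list)
    constraints: list = field(default_factory=list)


def build_prompt(todo):
    """Render the to-do and its architectural context as plain prompt text."""
    lines = [
        f"Task: {todo.title}",
        f"Goal: {todo.goal}",
        "Domains involved: " + ", ".join(todo.domains),
    ]
    if todo.shared_classes:
        lines.append("Classes shared across domains:")
        lines.extend(f"- {name}" for name in todo.shared_classes)
    if todo.constraints:
        lines.append("Constraints:")
        lines.extend(f"- {rule}" for rule in todo.constraints)
    return "\n".join(lines)


todo = TodoContext(
    title="Separate pricing logic from payments",
    goal="Improve modularity without changing behavior",
    domains=["orders", "payments"],
    shared_classes=["PriceCalculator"],
    constraints=["Do not change public method signatures"],
)
print(build_prompt(todo))
```

Even this toy to-do produces seven lines of prompt. Multiply that by every domain, shared class, and table in a large application and six pages stops looking surprising.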
Or if it's not going to be six pages, it's going to be ten, twenty, thirty, forty prompts back and forth. You know, Claude and Codex have that planning mode for their agents now, where you can put together a modernization plan. It's the same thing, except now we're helping you reduce the number of tokens by building up that context automatically through the analysis of the application. It would take me all day to write six pages. Yeah. So let's put this all together and see what it looks like. We're going to do a bit of a demo using Kiro, which is AWS's IDE with a built-in code assistant, and show you how it takes the context from vFunction: the software architecture, the runtime analysis, and the prompt generation that vFunction produces, and how we put that into practice in a real-life example. So here we are in Kiro. It's a developer environment, obviously with the coding assistant built in. It's got the source code, so it has an understanding of the application, but it's also fully integrated with vFunction via powers, or superpowers. As you can see here, I've just asked it to get the to-dos from vFunction, that modernization plan we were looking at and Michael was talking us through. These are all the tasks that we need to solve to modernize this application, everything from modularity to compatibility to technical debt. Obviously we want to get that prompt, and we want the code assistant to interpret it for us. So what is vFunction's solution to one of those issues here? It's going to get that remediation prompt from vFunction by activating the power there, and interpret what that means in the source code, which it's doing right now. It's giving me a perspective on what the changes would look like. It seems to like the solution. So it's going to do logical boundaries for us. I'll just go ahead and get Kiro to fix it. I want Kiro to go and make those changes, which we've supervised, nice and safely.
And this is like a simplified version of what that power-user customer of ours is doing, where they've actually used our tool plus coding assistants in Kiro, like agents in Kiro, to generate tons of documentation about the application, the business logic, and the domains that they're looking for. And then through that, they set up a bunch of agents to allow them to safely modernize. And of course, by providing all of that additional context through the documentation and the information that we're providing, they're able to move forward in a much more efficient and directed way, because they've got all this information for the agent to consume to understand the intent, rather than starting from scratch and doing what Sam said before, just "modernize my application," which doesn't work, especially at the size of application they have. They're at something like thirteen thousand Java classes, in an application that's been under development for, I think, almost twenty years. So it's a big, meaty, difficult application to analyze and get a sense of what's going on in it. That was quite straightforward once you have the right prompts, the right context, the right understanding, and the code assistant has done its job. Let's just say we repackaged this and redeployed. The key thing now is we need to check. We need to see that it has made improvements, that we haven't caused any more challenges to the software architecture, and that we have this feedback loop. So in vFunction, if you look at the first application, the order management system, this is after we've done the modernization process. We've tackled some of the to-dos, and the visualization has gone green. It shows me I've got some good modularity. We've removed some dependencies, we've corrected the technical debt, we've upgraded frameworks, and we've done all of the tasks we needed to do. But the feedback loop is important. We need to make sure that it was successful.
It's kind of the eyes from above, as it were, putting that validation in place. vFunction only marks a to-do as resolved, or done, green in this case, when the problem no longer exists in the software architecture. And that's important, really, because otherwise we're potentially doing more harm than good if we can't logically see what's happening: adding dependencies left, right, and center because it seemed quite simple to do, when maybe it's not the right thing. Yeah. And that feedback loop, that concept of iteration, Sam, is another theme that we see with these AI assistants, right? So it's context and a feedback loop, where we're providing enough information to make sure that the intent is clearly understood, and then having tools to oversee what's going on and continuously reanalyze and reevaluate to make sure that what was intended was actually carried forward as expected. And it's really no different from working with your colleagues. If somebody is developing a new feature, you want to check that the feature is actually what was requested, because obviously people will not be happy if you say you completed a feature and it's not done in the way that was expected. So, you know, I think the challenge is that people think AI is magic, and it's not. If you read any of the papers about how AI actually works under the covers, there's this larger concept that the more you walk the AI through the process of understanding the problem, just like with a person, the more likely you are to get a result that's valid and what you're looking for, because it's a probabilistic engine and you want to raise your probability of success as high as you can. Yeah, I mean, it allows us to do incremental modernization as well, which is important.
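Here is a minimal Python sketch of that feedback-loop idea: a to-do only counts as resolved if re-analysis no longer finds the problem. It assumes the problem is a circular dependency between domains and that the architecture can be represented as a simple dependency graph. The graph data and the `has_cycle` and `todo_status` functions are hypothetical, not vFunction's implementation.

```python
def has_cycle(graph):
    """Depth-first search for a cycle in a directed domain-dependency graph."""
    WHITE, GREY, BLACK = 0, 1, 2  # unvisited, on the current path, fully explored
    state = {}

    def visit(node):
        state[node] = GREY
        for neighbour in graph.get(node, ()):
            colour = state.get(neighbour, WHITE)
            if colour == GREY:  # back edge: we returned to a node on the current path
                return True
            if colour == WHITE and visit(neighbour):
                return True
        state[node] = BLACK
        return False

    return any(state.get(node, WHITE) == WHITE and visit(node) for node in list(graph))


def todo_status(graph_after_change):
    """Mark the to-do resolved only if re-analysis finds no remaining cycle."""
    return "open" if has_cycle(graph_after_change) else "resolved"


# Hypothetical domain graphs: before, orders and payments depend on each other.
before = {"orders": ["payments"], "payments": ["orders"], "fulfillment": ["orders"]}
after = {"orders": ["payments"], "payments": [], "fulfillment": ["orders"]}

print("before:", todo_status(before))
print("after:", todo_status(after))
```

The point of the design is that the status comes from re-analyzing the application after the change, not from the assistant reporting that it finished the task.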
You can tackle a few tasks alongside active development and business requirements, and validate that you're not causing any damage. So, putting this all together, this is the approach that vFunction actually takes. The vFunction software is providing that architectural context to the code assistant, but we're relying on the code assistant to do the heavy lifting for us, which is the most efficient thing to do here. In vFunction, for some of the visualizations you've seen today, we're collecting runtime data, so that context of an application in its running state, as well as the existing structure of the application, and putting that together using machine learning and data science to logically separate the business logic into those domains for us and see how entangled and complex it is. That gives us the architecture view, and it allows an architect to define a baseline, a target architecture if you like, that we're going to adhere to as part of that modernization journey. It's going to produce all of the prompts to get us to that target architecture in an iterative way, and it also provides that feedback loop to validate that those things are happening. So that's how the vFunction process works. Putting it all together as a transformation journey, first we need to do the analysis, that learning we talked about: understanding the application in the first instance and suggesting what the software architecture should be. Just like we saw at the very beginning, what are the domains and the dependencies? It's going to suggest that for us and allow us to align it with business goals to create our target architecture, and then come up with that modernization plan. In stage three here, it's going to come up with a plan of the to-dos, the prompts to modularize and logically separate things out, and make sure that the database is all still working and we're not breaking anything there.
It will correct any technical debt, such as old libraries and things that need upgrading, and dead code; make sure the application is compatible with the cloud services that we want to deploy it into; and correct any business logic that won't do. We send those prompts to our code assistant. In my demo that was Kiro, so we have Kiro do all that work for us. Then there's the feedback loop: repeat one, two, and three until we've modularized the business logic. And then we can actually use vFunction technology to extract a set of business logic, a domain, into a separate running service. We expose a tool back to the code assistant to do that, called code copy. That's going to pull out from the existing codebase what we need, repackage it as new software projects, REST API-enable it for us, or even create OpenAPI or MCP interfaces for us if we want to expose that business logic to other agentic AI technologies. And then once we have smaller components, rather than that big beast we saw at the very beginning, we can really easily use transformation tools like AWS Transform to upgrade the versions, taking a .NET Framework application to .NET Core, for example, so it could be open source. It should be a seamless lift once we have a small, defined set of business logic there. Yeah, Sam, I'm going to stop you for a second, because we've got some questions coming in. Rahul, you asked how this compares to Claude Code doing a one-time access of the source. I know you said you joined late, but to recap what we said, the problem is that when you're just looking at the source code, there's a lot of context missing about the way the code interacts, which is important for understanding the architectural intent of the application. Because a lot of times with larger applications, the architecture is no longer clear in the codebase.
And so what you need to do, with vFunction or with any other modernization work you're going to be doing, is very clearly provide context about the architectural intent of the application and how you want the code to be divided into specific business services within the monolith, in order to help these agents understand how you're looking to refactor. You know, you can do that through many, many, many prompts and discussions and gathering information manually. But what we developed with vFunction is that, by doing a dynamic analysis of the application at runtime, the boundaries of these capabilities and their interdependencies become much clearer. And through that, we can provide better context, or at least more complete context, to these AI assistants, so that they can more quickly and more efficiently fix the architectural issues and get you closer to the target architecture you're looking to accomplish, whether that's just improving modularity in the application codebase or migrating to a series of containers and serverless deployments. So hopefully that answers your question. Shatanik, thanks for fielding that in the background chat as well. And then Nilesh, you asked what our focus is. You asked if it's migration or modernization or re-architecting, and it's actually all of those things. And I'm not saying that to be like, oh yes, we do it all. The reality is that these are different facets of the same challenge that people are facing, right? If you're looking to migrate, nobody really wants to do a lift and shift, right? The reality is that that's what happens a lot of times. But Sam, we were talking about this, right? Containerization, Lambdas, serverless. And AWS provides a really nice service for GraphQL migration and hooking things up through GraphQL that I can't think of the name of right now.
Yeah, as Michael said, really vFunction is providing context around software application architecture to a code assistant, so it can do whatever we need it to do. That might be understanding whether an application is too complex or what its dependencies are for migration. It might be going a step further into modernization: is the application suitable and appropriate now, well structured, so that it can just be containerized? Do we need to do some refactoring and re-architecting to get the application into separate runnable components that are more modular, can run independently, and are more scalable? Exactly. All the way up the stack there. Or do I just want to make changes to my software on a day-to-day basis? As part of incremental modernization alongside active development, we're just improving things. We don't want to deteriorate the software architecture, which is what happens without that context, as we've demonstrated. And really, you can't use a code assistant without providing a lot of context, and that's not how developers are used to doing things. Yeah, yep. So, real quick, I was thinking of AWS AppSync. But the other thing that you were talking about, Sam, is that if you think about the process of doing a migration or a re-architecting of your application, there is going to need to be modernization, right? And as part of that modernization, you might decide, oh, we need to re-architect, right? So that's why I say we do it all, because we're focused on the tasks that are needed to do those things. Where you want to take it is up to you and what your team is doing. But they're all part of the same generalized goal of bringing an application closer to what you want it to be from an architectural perspective. So I want to leave everybody with a thought, and hopefully it's a good lesson learned from today's session. AI needs architecture to reason about applications. That's hopefully the narrative that we've explained today, along with why it can't see that by itself.
Once you give it that context, the whole world changes, really. The modernization workflow changes, and so does the ability to do things rapidly, at scale, and with accuracy using code assistants. So that's what I want to leave everybody with, something to think about. Are we providing the right context today to our code assistants and AI? And what do we need to do to correct that, to make them accurate, efficient modernization tools?

I'm very happy to introduce the two people that will be walking us through the subject today. We have Michael Chiaramonte coming to us from Long Island, New York. Michael is our principal architect here at vFunction. He works very closely with engineering teams to modularize their monolithic applications and turn them into cloud-ready services. We also have Sam Cole from vFunctions UK office who works with partners and customers around the world on modernizing complex applications and getting them ready for the cloud. We've kind of summarized on everybody's behalf here what the challenges LLM space when it comes to large scale modernization. And I think it's kind of generalized what we've seen across the board and what we've read and kind of intuited from our customers and the market as a general. Yeah, and so we're gonna cover these just to give you a summary right now. So lack of domain context. So understanding software architecture really, the runtime awareness. So we have applications really run-in real life. Is that the same as what it looks like in the source code? Fragmented global view, which we just covered actually. So we've just looked at that complex application. How big an application does it need to be for the LLM to start to struggle and not be able to see the full picture? Operational blind spots, obviously not understanding kind of real life examples. And then the first one we're gonna look at is prompt fragility and inconsistency. So how do we actually go about asking our code assistants to do this on our behalf? So let's start here. So prompt fragility and inconsistency, and I'm gonna start as a simplistic way. I'm gonna give you a prompt, Michael, modernize my application as my co assistant. I hope it responds with something like, okay, good luck. Because no, I mean, that prompt is obviously too vague and too short. 
And I think that's kind of the problem with the narrative that we're seeing out in the market by the AI companies themselves in some ways and by the proponents of AI. I mean, was that white paper that was put out recently about Anthropics saying that they use Claude to refactor all that COBOL code in a sample application that AWS has as part of their mainframe modernization platform. And while, you know, from a outsider's perspective, it looks really great because it's like, oh, COBOL, that thing that nobody uses anymore. And now we're going to Java, which is still widely used. It looks really enticing, but the thing that we're missing is a lot of context around why this is a problem. Context is gonna become like a reoccurring theme here, right? Because for many years, even without AI translation of source code from one language to another has been solved in some ways. We've got tools that have parsed languages and turned them into other languages. And while it's cool that the AI was able to do that and replicate the inputs, taking the same input and generating the same output, so much so that it caused IBM stock to drop quite significantly, unfortunately, it's completely missing the larger context of like, does mainframe still matter today? Right? And it's that larger architectural view of the way mainframe processes transactions and how the system is built and how you would have to turn those COBOL applications into something completely different than what they are today. That kind of makes the translation of COBOL to Java trivial, right? Like that's not the real problem. It's a problem and it's certainly one that needs to be solved if you don't have COBOL programmers, but it doesn't really fully solve the bigger issue. And again, lack of context, it really is hurting that situation. I thought it was gonna be easy to use a code assistant, just give it simple prompt and just go ahead on my behalf, but yeah, I need to have some even bit more information. 
So that leads us nicely on to domain context. So what's all software architecture all about? So this is an image from V function for anybody who's not seen it, but this is representing the software architecture for an order management application. Yeah, we're using this just because it's kind of easy to visualize, but if you go back to maybe your CS101 days or maybe later on, because systems architecture is a little bit more complicated than just writing code. When you're building a larger system, you're thinking about it in terms of the capabilities that it has, right? You're thinking about like, in this case, the order management system, it's gonna do shipping and order handling and processing credit transactions and things like that, right? And so when you're building an application, you have this mental model of where the code all fits together. And it may be initially the code that you've built kind of actually represents that, right? But as time goes on, the application becomes more and more complicated as people are using it and asking for new features and those boundaries break down. And so what's nice about this architecture that we're looking at that there's very little sort of cross communication. Like we have that static view, it was a big mess of lines, or as our CTO likes to say a big mesh, right? You know, it's like this whole thing that is all interconnected. These are domain boundaries. These are functional areas of the code that are intercommunicating, but it's not too complicated, right? There's only a few things that are connecting between orders and fulfillment and payments. So it's a very straightforward, well contained application. This is more complicated, right? This is a more complicated application. It's got about four thousand three hundred classes. Again, not the largest application we've looked at by any means. But you can see there's like that hub and spoke design on the right hand side where you have, oh, we're in Zoom so I can draw. 
So we've got this kind of nice hub and spoke design here. You've got the central hub and the lines radiating out, which are really great because that means that those components are kind of on their own, doing their own thing. And then you have a little bit more complexity here in this section where you have the functional domains that are well defined, but they're communicating with one another, doing things that involve the other domains. But again, it's not as crazy as that other diagram that we looked at where it was just everything everywhere all at once. So what would, let's maybe look at some bad architecture in the source code, if you could show that Michael. So, I mean, that's the thing, right? Like everybody wants it to be something that can be looked at in the source code. And the problem with that is that the source code doesn't really tell the architectural picture. There's a lot of factors there. I mean, and even like the prompt that you had before, modernize my application, right? Like what does modernize mean to you? Are you looking for framework upgrades? Are you looking for technology upgrades, language upgrades? The whole thing needs to be re architected because it's a giant monolith and you wanna turn it into microservices, right? So there's a lot of questions that are unanswered by just a big prompt like that. And similarly with source code, the best you can do is kind of interpret the intent of the developers. But like I said, as you go through over time, that intent becomes less and less clear as developers try to get new functionality in as quickly as possible. And this isn't something that is like a statement of blame or anything. That's the reality of building large complex systems is that you have the need to add new features that didn't necessarily fit into your worldview when you built the system before. 
And that lack of context and understanding in the code leads to it being very difficult, if not almost impossible, to understand the true architectural intent of the application by just looking at the code. Sure, you can look at good coding practices and are you doing something that's inherently insecure, like using HTTP instead of HTTPS or doing something completely bonkers, like including API keys in your source code that shouldn't be there, right? Those things, coding assistants do actually really well, but the other more difficult architectural stuff is really difficult for them. Yeah, so they can't see the structure. So that's obviously a bit of a limitation when you're trying to modernize something if you can't see how it works. But you made another good point there, Michael. So I've got a nice section around runtime awareness. So applications in a running state are obviously kind of a little bit different to applications at the source code. So I've got a screen here, again from vFunction. I'll just play this as a demo. vFunction does some dynamic analysis of an application to see and understand it in its running state. Key point here is just showing that we're dealing with real runtime systems here, right? There are circular dependencies, and there's this concept we have of exclusive and non-exclusive classes. All it means is that you've built a business domain that has functionality that's shared by other business domains. So there's cross-cutting capabilities that shouldn't be shared. And then we also track things like data access, right? Because ultimately certain tables should be owned by certain capabilities within the application. We see that a lot of times that that's not the case. And that's like a second layer of refactoring that needs to be done.
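The exclusive versus non-exclusive distinction can be sketched in a few lines. This is a minimal illustration and not vFunction's implementation: the `observed_calls` list, the domain and class names, and the `classify_classes` helper are all hypothetical, standing in for whatever a runtime analysis records about which domain's flows touched which class. The same grouping would apply to database tables in place of classes.

```python
from collections import defaultdict

# Hypothetical runtime observations: (domain whose flow ran, class it used).
observed_calls = [
    ("orders", "OrderService"),
    ("orders", "PriceCalculator"),
    ("payments", "PaymentGateway"),
    ("payments", "PriceCalculator"),
    ("fulfillment", "ShipmentPlanner"),
]


def classify_classes(calls):
    """Split classes into exclusive (one domain) and non-exclusive (several)."""
    domains_by_class = defaultdict(set)
    for domain, cls in calls:
        domains_by_class[cls].add(domain)

    # A class used by exactly one domain is exclusive to that domain.
    exclusive = {
        cls: next(iter(domains))
        for cls, domains in domains_by_class.items()
        if len(domains) == 1
    }
    # A class used by several domains is a candidate for refactoring.
    shared = {
        cls: sorted(domains)
        for cls, domains in domains_by_class.items()
        if len(domains) > 1
    }
    return exclusive, shared


exclusive, shared = classify_classes(observed_calls)
print("exclusive:", exclusive)
print("non-exclusive:", shared)
```

In this toy data only `PriceCalculator` is reached from two domains, so it is the one class flagged as non-exclusive.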
And sometimes that stuff is really difficult for even developers to see when looking at the code because of multiple layers of abstraction through things like class inheritance or dependency injection, right? All these systems that are meant to simplify development because the coder can focus on what it is they're trying to implement feature-wise, but kind of hide a lot of the details under the covers through abstraction layers and libraries and things like that, that we actually can see at runtime because they get exposed through profiling the call stack, right? It becomes a more deep dive view into what's actually happening in the application, which is extremely important to understand. So how would people go about modernizing this application then? So now that we can see that logical construct, we can see and understand runtime complications, how do we modernize this one? Right, so, I mean, for this, it's really down to the team understanding what their goal for modernization is, right? And we have this ability to generate these to-dos, right? With any AI assistant, no matter what kind of refactoring you're doing, you need to work with it just like you would with a human, right? And you're building out sets of tasks and documentation, right? And the reason that you're doing that is because you're building up the context for these AI agents to understand what it is you're trying to do. So Sam, what you're showing here is that like in the to-do that we have as a task for modernizing this... Modernization plan, really. Yeah, yeah. We can generate prompts, help you understand, help you with those agents, right? But more so this is really just meant to help you see, this is a six-page prompt that we've generated based on the context. And this seems like a lot, but if you think about how much information you have to give on a large application for a coding assistant to know what you want, like it's gonna be six pages.
Or if it's not gonna be six pages, it's gonna be ten, twenty, thirty, forty prompts back and forth, kind of doing that, you know, like Claude and Codex have that planning mode for their agents now where you can put together a modernization plan. The same thing, except now we're helping you reduce the number of tokens by building up that context automatically through the analysis of the application. It's gonna take me all day to write six pages. Yeah. So let's kind of put this all together and see what this looks like. So we're gonna do a bit of a demo using Kiro, which is AWS's IDE and built-in code assistant, and showing you how that takes the context from vFunction. So the software architecture, the runtime analysis, the prompt generation that vFunction produces and how we put that into practice in a real life example. So here we are in Kiro. So it's a developer environment, obviously with the coding assistant built in, it's got source code, I mean, it's got understanding of the application, but it's fully integrated with vFunction via powers or superpowers. So as you can see here, I've just asked it to get the to-dos from vFunction, that modernization plan we were looking at and Michael's talking us through. So these are all the tasks that we need to solve to modernize this application, everything from modularity to compatibility, to technical debt there. Obviously we want to get that prompt. We want to get the code assistant to interpret that for us. So what is vFunction's solution to one of those issues here? It's going to get that prompt from vFunction, that remediation prompt, by activating the power there, interpret what that means at the source code, which it's doing right now. What are the changes? It's giving me a perspective of what this is like. It seems to like the solution. So it's gonna do logical boundaries for us. So I just go ahead and get Kiro to fix it. So I want Kiro to go and do that, make those changes that we've supervised there nice and safely.
And this is like a simplified version of what that power user customer we have is doing where they've actually used our tool plus coding assistants in Kiro, like agents in Kiro, to generate tons of documentation about the application and the business logic and the domains that they're looking for. And then through that, set up a bunch of agents to allow them to then safely modernize. And of course, with providing all of that additional context through the documentation and the information that we're providing, they're able to move forward in a much more efficient and directed way because they've got all this information for the agent to consume to understand the intent rather than starting from a greenfield and doing what Sam said before, just modernize my application, which doesn't work, especially in the size of application they're in. They're at like thirteen thousand Java classes and an application that's been under development for about, I think almost twenty years. So it's a big meaty, difficult application to analyze and get a sense of what's going on in it. That was quite straightforward once you have the right prompts, the right context, the right understanding, and the code assistant has done its job. Let's just say we repackaged this up, redeployed. The key thing now is we need to check. We need to see that that has made improvements. We haven't caused any more challenges to the software architecture and we have this feedback loop. So in vFunction, if you look at the first application, the order management system, this is after we've done the modernization process, we've tackled some of the to-dos, the visualization has gone green. It shows me I've got some good modularity. We've removed dependencies, we've corrected the technical debt, we've upgraded frameworks and we've done all of the tasks we needed to do, but the feedback loop is important. We need to make sure that it was successful.
It's kind of the eyes above, as it were, just to put that validation in place. So vFunction only marks a to-do as resolved or done, green in this case, when the problem no longer exists in the software architecture. And that's important really, because otherwise potentially we're doing more damage than good if we can't logically see what's happening, adding dependencies left, right and center, because it seemed quite simple to do, maybe not the right thing there. Yeah. And that feedback loop, that concept of iteration, Sam, is another theme that we see with these AI assistants, right? So context and a feedback loop where you're providing enough information to make sure that the intent is clearly understood, and then having tools to overlook and see what's going on and continuously reanalyze and reevaluate to make sure that what was intended was actually carried forward as expected. And it's really no different than when you think about again, like when you're working with your colleagues, if somebody is developing a new feature, you wanna check to make sure that that feature is actually what was requested, because obviously people will not be happy if you say you completed a feature and it's not done in the way that was expected. So, you know, I think the challenge is people think that AI is magic and it's not. It's really, even if you think about how AI works, you read any of the papers about how AI actually works under the covers, there's this larger concept that reinforces that the more you walk AI through the process of understanding the problem, just like with a person, the more likely you are to actually get a result that's valid and what you're looking for, because it's a probabilistic engine and you wanna increase your probabilities to the highest point of certainty and success. Yeah, I mean, it allows us to do incremental modernization as well, which is important.
Tackle a few tasks alongside active development and business requirements and validate that we're not causing any damage. So putting this all together, this is the approach actually that vFunction takes. So the vFunction software is providing that architectural context to the code assistant, but we're relying on the code assistant to do the heavy lifting for us, which is the most efficient thing to do here. So actually in vFunction, as some of the visualizations you've seen today show, we're collecting runtime data. So that context of an application in its running state, as well as the existing structure of the application, and putting that together using machine learning and data science to logically separate out pretty much the business logic into those domains for us and see how entangled and complex it is. That's giving us that architecture view, but allowing an architect to define a baseline, a target architecture, if you like, that we're gonna adhere to as part of that modernization journey. It's going to produce all of the prompts to get us to that target architecture in an iterative way, but also that feedback loop, and validate that those things are happening. So that's how the vFunction process works, but kind of altogether in a transformation journey, first we need to do the analysis, that learning we talked about, understanding the application in its first instance, suggesting what the software architecture should be. So just like we saw at the very beginning, what are the domains, the dependencies, it's going to suggest that for us, allow us to align that with business goals to create our target architecture, come up with that modernization plan. Stage three here, it's going to come up with a plan of the to-dos, the prompts to modularize, to logically separate out things, make sure that the database is all still working and we're not breaking anything there.
Correct any technical debt, old libraries and things that need upgrading, dead code, make sure it's compatible with the cloud services that we want to deploy it into and correct any business logic that won't do. Send those prompts to our code assistant. In my demo that was Kiro. So have Kiro do all that work for us. Feedback loop to repeat one, two, and three until we've modularized the business logic. And then we can actually use vFunction technology to extract a set of business logic, a domain, into a separate running service. So we expose a tool back to the code assistant to do that called code copy. That's gonna pull out from the existing code base what we need, repackage that up as new software projects, REST API-enable it for us, or even create OpenAPI or MCP interfaces for us, if we want to expose that business logic to other agentic AI technologies. And then once we have smaller components rather than that big beast we saw at the very beginning, we can really easily use transformation tools like AWS Transform to upgrade the versions, say a .NET Framework application to .NET Core, for example, so it could be open source. It should be a seamless lift once we have a small defined set of business logic there. Yeah, Sam, I'm gonna stop you for a second because we've got some questions coming in. Rahul, you asked about how it compares to Claude Code doing a one-time access of the source. So I know you said you joined late, but to kind of recap what we said, the problem is that when you're just looking at the source code, there's a lot of context that's missing about the way that the code interacts, which is important to understand the architectural intent of the application. Because a lot of times with larger applications, the architecture is not clear in the code base any longer.
And so what you need to do with vFunction or any other modernization that you're gonna be doing in your work, is you have to be able to very clearly provide context about the architectural intent of the application, how you want the code to be divided up into specific business services within the monolith in order to help these agents understand then how you're looking to refactor. You know, you can do that through many, many, many prompts and discussions and gathering information manually. But what we developed with vFunction is that by doing a dynamic analysis of the runtime of the application, the boundaries of these capabilities and their interdependencies become much clearer. And through that, then we can provide better context, or at least more complete context to these AI assistants, so that they can more quickly and more efficiently fix the architectural issues to get you closer to the target architecture that you're looking to accomplish. Whether that's just to improve modularity in the application code base, or to migrate to a series of containers and serverless deployment, you know, applications. So hopefully that answers your question. Shatanik, thanks for fielding that in the background chat as well. And then Nilesh, you asked what is our focus? So yeah, you asked if it's migration or modernization, re-architecting, it's actually all of those things. And I'm not saying that to be like, oh yes, we do it all. Because the reality is that they are kind of different facets of the same challenge that people are facing, right? If you're looking to migrate, nobody really wants to do a lift and shift, right? The reality is that that's what happens a lot of times, but Sam, we were talking, right? Containerization, Lambdas, like serverless. Remember, AWS provides a really nice service for GraphQL migration and hooking up things through GraphQL that I can't think of the name of right now.
Yeah, as Michael said, really vFunction is providing context around software application architecture for a code assistant to do whatever we need to do, whether that's to understand whether an application is too complex or what its dependencies are for migration, or whether that's to go a step further into modernization. Is the application suitable and appropriate now, well structured, so that it can just be containerized? Do we need to do some refactoring, re-architecting to get the application into separate runnable components, more modular, that can run independently, more scalable? Exactly. All the way up the stack there, or do I just want to make changes to my software on a day-to-day basis? So as part of incremental modernization alongside active development, we're just improving things. We don't want to deteriorate the software architecture by working without that context, as we've demonstrated. And really, you can't use a code assistant without providing a lot of context and that's not how developers are used to doing things. Yeah, yep. So real quick, I was thinking of AWS AppSync, but the other thing that you were talking about, Sam, is really if you think about the process of trying to do a migration or re-architecting of your application, there is going to need to be modernization, right? And as part of that modernization, you might decide, oh, we need to re-architect, right? So it's like, that's why I say we do it all because we're focused on the tasks that are needed to do those things. Where you wanna take it is up to you and what your team is doing. But they're all part of the same sort of generalized goal of bringing an application closer to what you want it to be from an architectural perspective. So I want to just leave everybody with a thought and hopefully it's a good lesson learned from today's session. AI needs architecture to reason about applications. And that's hopefully the narrative that we've explained today and why it can't see that by itself.
Once you give it that context, the whole world changes really. The modernization workflow changes, the ability to do things rapidly at scale with accuracy using code assistants changes. So that's what I want to leave everybody with, something to think about. Are we providing the right context today to our code assistants and AI? And what do we need to do to correct that to make them obviously accurate, efficient modernization tools?
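The feedback loop described in the session, where a to-do is marked resolved only when re-analysis no longer finds the problem, can be sketched as follows. This is a rough illustration under stated assumptions and not vFunction's or Kiro's actual API: `Todo`, `analyze`, `remediate`, and `modernize` are hypothetical names, and the architecture is reduced to a plain set of issue labels.

```python
from dataclasses import dataclass


@dataclass
class Todo:
    issue: str              # e.g. a cross-domain dependency to remove
    resolved: bool = False


def analyze(architecture):
    """Hypothetical stand-in for re-analysis: return issues still present."""
    return set(architecture["issues"])


def remediate(architecture, todo):
    """Hypothetical stand-in for a code assistant applying one fix."""
    architecture["issues"].discard(todo.issue)


def modernize(architecture, apply_fix, max_rounds=3):
    """Apply fixes, then re-analyze; only the analysis can mark a to-do done."""
    todos = [Todo(issue) for issue in sorted(analyze(architecture))]
    for _ in range(max_rounds):
        open_todos = [t for t in todos if not t.resolved]
        if not open_todos:
            break
        for todo in open_todos:
            apply_fix(architecture, todo)
        # Validation step: a to-do is resolved only if the issue is gone.
        remaining = analyze(architecture)
        for todo in open_todos:
            todo.resolved = todo.issue not in remaining
    return todos


app = {"issues": {"orders-payments cycle", "shared PriceCalculator"}}
for todo in modernize(app, remediate):
    print(todo.issue, "->", "resolved" if todo.resolved else "open")
```

The design point is that the fixer never reports its own success: if `apply_fix` changes nothing, the to-do stays open after every round.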

In this webinar, vFunction shows how architectural context turns coding assistants into true refactoring partners.

The session explores why AI tools struggle with large brownfield applications where domain boundaries are unclear, dependencies are unknown, and architectural drift has accumulated over time.

We then demonstrate how architectural context—derived from runtime and static analysis, combined with data science—reveals the system’s true structure. With visibility into domains, dependencies, and runtime call flows, vFunction provides AI assistants with structured guidance to safely identify structural issues, reduce architectural technical debt, and support incremental modernization.

In this session, you’ll learn:

- Why AI coding assistants struggle with large, complex enterprise systems

- How runtime call flows and dependency analysis reveal true domain boundaries

- How architectural context helps AI assistants safely refactor monoliths and guide service extraction

Instead of risky rewrites, architectural context allows code assistants to act as true refactoring partners, helping teams safely modularize and modernize complex systems.

👉 Ideal for software architects, engineering leaders, and developers modernizing large enterprise applications.

Watch time: 26 minutes