Every software development project has three constraints—time, resources, and quality. Knowing how to balance them is at the core of delivering consistent success. A well-balanced project resembles an equilateral triangle where the same stability is available no matter which side forms the base.

Over time, even the most balanced software loses stability. New features are added, and old functionality is disabled. Developers come and go, reducing team continuity. Eventually, the equilateral triangle looks more like an isosceles triangle, with a significant amount of technical debt to manage. That’s when refactoring projects often enter the development process.

What is a Refactoring Project?

Refactoring enables software teams to re-architect applications and restructure code without altering its external behavior. It may involve replacing old components with newer solutions or using new tools or languages that improve performance. These projects make code easier to maintain by eliminating dead or duplicate code and complex dependencies.

Incorporating refactoring into the development process can also extend the life of an application, allowing it to live in different environments, such as the cloud. However, refactoring doesn’t always reshape code to a well-balanced equilateral triangle. Plenty of pitfalls exist that can derail a project, despite refactoring best practices. Let’s look at seven mistakes that can impact the outcome of an application refactoring project.

Mistake #1: Starting with the Database or User Interface

When modernizing a monolith, there are three tiers you can focus on: the user interface, the business logic, or the data layer. It is tempting to go for the easy win and start with the user interface. In the end, though, you may have a shinier interface while still facing the same issues that triggered the modernization initiative in the first place: exploding technical debt, decreasing engineering velocity, rising infrastructure and licensing costs, and unmet business expectations.

On the other hand, the database layer is also a tempting first target to modernize or replace because of escalating licensing and maintenance costs. It would feel great to decompose a monolithic database into smaller, cloud-native data stores using faster, cheaper open source-based alternatives or cloud-based data layer services. Unfortunately, that’s putting the cart before the horse. To break down a database effectively, you first need to decompose the business logic that uses the data services.

By decomposing and refactoring the business logic, you can create microservices that eliminate cross-table database dependencies and pair new independent data stores with their relevant microservices. Likewise, it’s easier to build new micro-frontends for these independent microservices once they have been decomposed with crisp boundaries that minimize or eliminate interdependencies.

The final consideration is managing risk. Your data is gold, and any change to the data layer carries very high risk. You should change the database only once, and only after you have decomposed the monolithic business logic into microservices with one data store per microservice.

Focusing on the business logic first optimizes microservice deployment to reduce dependencies and duplication. It ensures that the data layer is divided to deliver a reliable, flexible, and scalable design.

Mistake #2: Boiling the Ocean

Boiling the ocean means complicating a task to the point that it is impossible to achieve. Whether by focusing on minutiae or by allowing scope creep, a refactoring project can quickly turn into mission impossible. Simplifying the steps makes the project easier to control.

One common mistake in refactoring is trying to re-architect an entire application all at once. While a perfect, fully cloud-native architecture could be the long-term goal, a modernization best practice is to select one or a small number of domains or functional areas in the monolith to refactor and move into microservices. These new services might be prioritized by their high business value, high costs, or shared platform value. Many very successful modernization projects extract only a key handful of services and leave the remaining monolith as is.

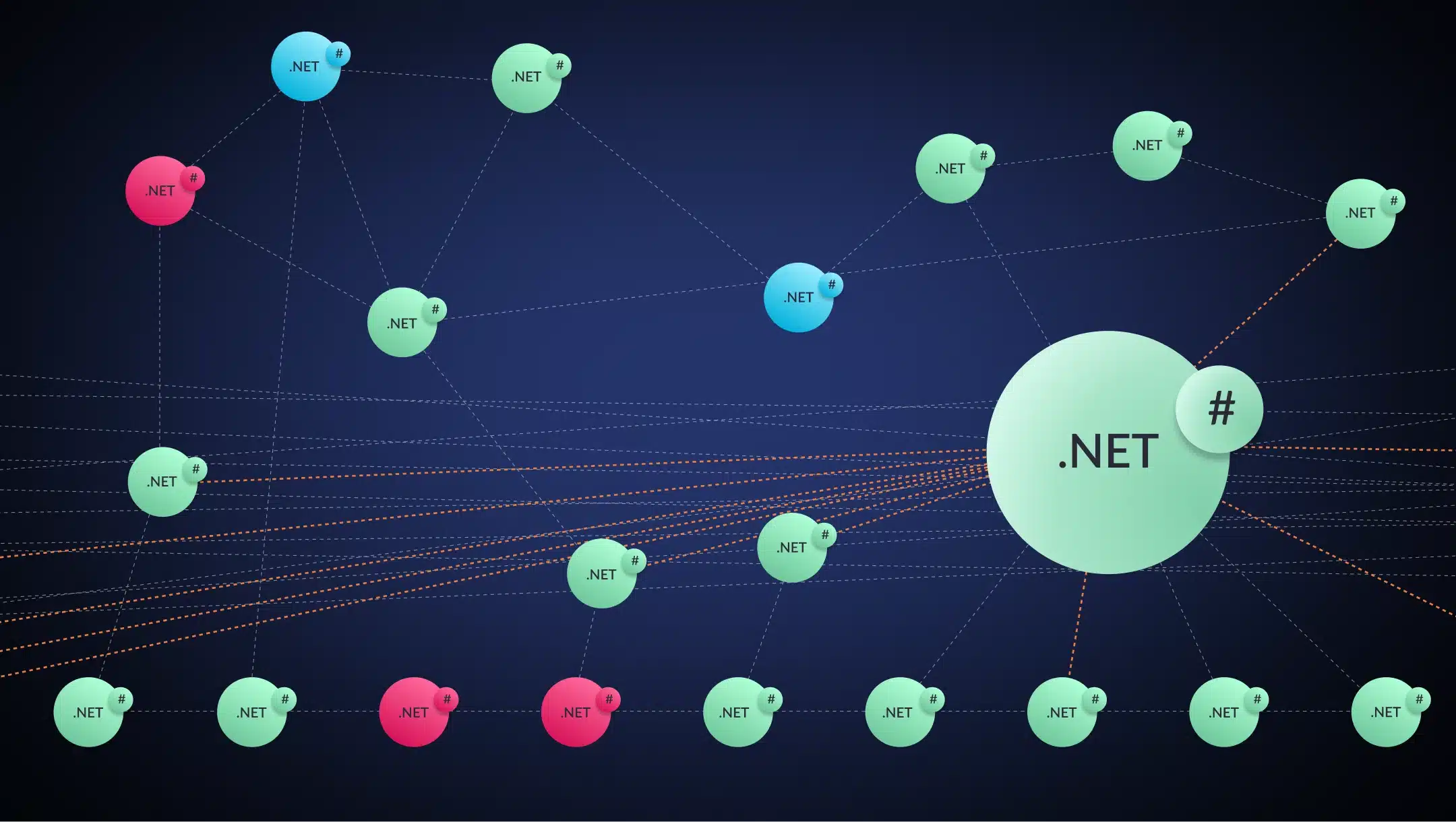

For example, instead of jumping into a more complex service-mesh topology first, take a more practical, interim step with a hub and spoke topology that centralizes traffic control, so messages coming to and from spokes go through the hub. The topology reduces misconfiguration errors and simplifies the deployment of security policies. It enables faster identification and correction of errors because of its consolidated control.

Trying to implement a full-mesh topology increases connections, complicating monitoring and troubleshooting efforts. Once comfortable with a simpler topology, then look at a service mesh. Taking a step-by-step approach prevents a mission-impossible scenario.
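To put rough numbers on that difference, here is a minimal sketch that counts links under a deliberately simplified model: each spoke keeps one link to the hub, while a full mesh connects every pair of services directly. The function names are illustrative, and real deployments will not map onto these counts exactly.

```python
def hub_and_spoke_links(services: int) -> int:
    """Each service (spoke) keeps a single link to the central hub."""
    return services


def full_mesh_links(services: int) -> int:
    """Every pair of services is connected directly: n * (n - 1) / 2."""
    return services * (services - 1) // 2


if __name__ == "__main__":
    # Compare how the number of links to monitor grows with service count.
    for n in (5, 10, 20):
        print(f"{n} services: hub-and-spoke={hub_and_spoke_links(n)}, "
              f"full mesh={full_mesh_links(n)}")
```

Under this model, ten services mean 10 links to watch in a hub-and-spoke layout versus 45 in a full mesh, which is why troubleshooting gets harder as the mesh grows.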

Mistake #3: Ignoring Common Code

Although refactoring for microservices encourages exclusive class creation, it also discourages re-inventing the wheel. If developers approach a refactoring project assuming that every class must be exclusive to a single service, they may end up with an application full of duplicate code.

Instead, programmers should evaluate classes to determine which ones are used frequently. Putting frequently used code into shared or common libraries makes it easier to update and reduces the chances that different implementations may appear across the application.

However, common libraries can grow uncontrolled if there are no guidelines in place as to when and when not to turn a class into a shared library. Modernization platforms can detect common classes and help build rational and consistent common libraries. Intelligent modernization tooling can ensure common code is not ignored while minimizing the risk of a library monolith.
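One simple guideline of this kind can be sketched in a few lines: treat a class as a shared-library candidate only when at least a minimum number of services use it. The service names, class names, and the `min_services` threshold below are all hypothetical, and this is not how any particular modernization platform works.

```python
from collections import defaultdict

# Hypothetical inventory: service name -> classes that service uses.
usage = {
    "orders": {"OrderService", "Money", "AuditLog"},
    "billing": {"InvoiceService", "Money", "AuditLog"},
    "shipping": {"ShipmentService", "AuditLog"},
}


def common_library_candidates(usage, min_services=2):
    """Return classes used by at least `min_services` services."""
    users = defaultdict(set)
    for service, classes in usage.items():
        for cls in classes:
            users[cls].add(service)
    # Keep only classes that clear the threshold; list their users in order.
    return {
        cls: sorted(services)
        for cls, services in users.items()
        if len(services) >= min_services
    }


if __name__ == "__main__":
    for cls, services in sorted(common_library_candidates(usage).items()):
        print(cls, "is used by", ", ".join(services))
```

Raising the threshold keeps the common library small; lowering it reduces duplication at the cost of a larger shared dependency.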

Mistake #4: Keeping Dead Code Alive

Unreachable dead code can commonly be detected by a variety of source code analysis techniques. The more dangerous form of dead code is code that is still reachable but is no longer used in production. It arises when functions become obsolete, get replaced, or are simply forgotten as new services are added. By combining static and dynamic analysis, developers can identify this reachable dead code, or “zombie code,” using observability tooling that compares actual production and user access against the static application structure.
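At its core, that comparison is a set difference. The sketch below assumes you already have two inputs, both hypothetical here: the methods a static analyzer reports as reachable, and the methods actually observed in production traces over some sampling window.

```python
def find_zombie_candidates(statically_reachable, observed_in_production):
    """Return methods that are reachable in code but never seen in production."""
    return sorted(set(statically_reachable) - set(observed_in_production))


if __name__ == "__main__":
    # Hypothetical data: method names are made up for illustration.
    statically_reachable = {
        "OrderService.create",
        "OrderService.cancel",
        "LegacyExport.toCsv",
        "FaxNotifier.send",
    }
    observed_in_production = {
        "OrderService.create",
        "OrderService.cancel",
    }
    print(find_zombie_candidates(statically_reachable, observed_in_production))
```

The result is a list of candidates, not a deletion list. Code that runs rarely, such as a year-end job, may simply not have been exercised during the observation window.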

This type of dead code exists because many coders are afraid to touch old code as they are unsure of what it does or what it was intended to do. Rather than risk disrupting the unknown, they let it continue. This is just another example of technical debt that piles up over time.

Mistake #5: Guessing on Exclusivity

Moving toward a microservice architecture means ensuring that application entities such as classes, beans, sockets, or transactions appear in only one microservice. In other words, every microservice performs a single function with clearly defined boundaries.

The decoupling of functionality allows developers to build, deploy, and scale applications independently. The concept enables faster deployments with lower risk than older monolithic applications. However, determining the level of exclusivity can be challenging.

Intelligent modernization tooling can analyze complex interdependencies and help design microservices that maximize exclusivity. Without automated tools, this is a long, manual, painstaking process that is not based on measurements and analytics but most often relies on trial and error.
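To make the idea of measuring exclusivity concrete, here is a minimal sketch of one possible score: the share of a service’s classes that no other service uses. Both the metric and the sample data are assumptions for illustration, not the measurement any specific tool applies.

```python
def exclusivity(service, usage):
    """Fraction of `service`'s classes that no other service uses (0.0 to 1.0)."""
    own = usage[service]
    if not own:
        return 1.0  # A service with no classes shares nothing.
    # Union of every class used by the other services.
    others = set().union(
        *(classes for name, classes in usage.items() if name != service)
    )
    return len(own - others) / len(own)


if __name__ == "__main__":
    # Hypothetical candidate design: service name -> classes it uses.
    usage = {
        "orders": {"OrderService", "OrderRepo", "Cart", "Money"},
        "billing": {"InvoiceService", "Money"},
    }
    for name in usage:
        print(name, exclusivity(name, usage))
```

Comparing scores across candidate service boundaries replaces some of the trial and error with a number you can track from one design iteration to the next.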

Mistake #6: Forgetting the Architecture

Refactoring focuses on applications. How efficiently does the code accomplish its tasks? Is it meeting business requirements for agility, reliability, and resiliency? Without looking at the architecture, improvements may be limited. Static code analysis tools will help identify common code “smells,” but they ignore the architecture. And architectural technical debt is the biggest contributor to cost, slow engineering velocity, sluggish performance, and eventual application failures.

System architects lack the tools needed to answer questions regarding performance and drift. Until architectural constructs can be observed, tracked, and managed, no one can assess the impact they have on refactoring. Just like applications, architecture can accumulate technical debt.

Architectural components can grow into a web of class entanglements and long dependency chains. They can exhibit unexpected behavior as they drift away from their original design. Unfortunately, without the right tools, technical debt can be hard to identify, let alone quantify.
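One small way to start quantifying this is to measure the longest dependency chain in a class graph and to flag cycles, which are a common sign of entanglement. The sketch below assumes a hypothetical dependency map (class name to the classes it depends on) that you would extract with your own tooling.

```python
def longest_chain(deps):
    """Return the number of classes in the longest dependency chain.

    Raises ValueError if the graph contains a dependency cycle.
    """
    memo = {}
    visiting = set()

    def depth(node):
        if node in memo:
            return memo[node]
        if node in visiting:
            raise ValueError(f"dependency cycle through {node}")
        visiting.add(node)
        # A class counts as 1, plus the deepest chain among its dependencies.
        best = 1 + max((depth(d) for d in deps.get(node, ())), default=0)
        visiting.discard(node)
        memo[node] = best
        return best

    return max((depth(n) for n in deps), default=0)


if __name__ == "__main__":
    # Hypothetical class graph: class -> classes it depends on.
    deps = {
        "Controller": ["Service"],
        "Service": ["Repository", "Util"],
        "Repository": ["Util"],
        "Util": [],
    }
    print(longest_chain(deps))
```

Tracking a number like this over successive releases gives a rough signal of drift, although it captures only one dimension of architectural debt.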

Mistake #7: Modernizing the Wrong Application

Assessing whether you should modernize and refactor an application in the first place is the critical first step. Is the application still important to the business? Can it be more easily replaced by a SaaS or COTS alternative? Has the business changed so dramatically that you should just rewrite it? How much technical debt is the app carrying, and how hard will it be to refactor?

Assessment tools that focus on architectural technical debt can help quantify project scope in terms of time, money, and resources. When deployed appropriately, refactoring can help project managers break down an overwhelming task into smaller efforts that can be delivered quickly.

Building an Equilateral Triangle

When software development teams successfully manage the three constraints of time, quality, and resources, they create a well-balanced solution that is delivered on time and within budget, containing the requested features and functionality. They have momentarily built an equilateral triangle.

Creating an Equilateral Triangle with Automation

With AI-powered tools, refactoring projects will accelerate. Java or .NET developers can refactor their monoliths, reduce technical debt, and create a continuous modernization culture. If you’re interested in avoiding refactoring pitfalls, schedule a vFunction demo to see how we can help.